Sampling in Driverless AI¶

Data Sampling¶

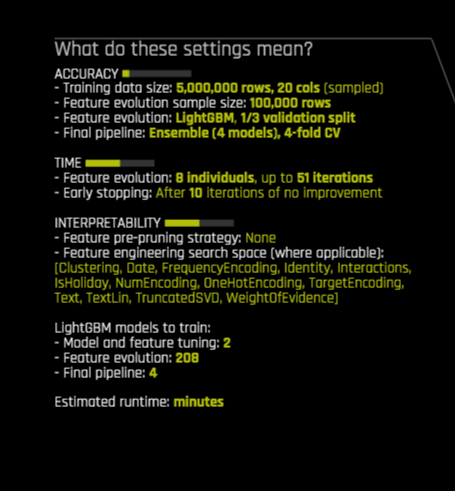

Driverless AI does not perform any type of data sampling unless the dataset is big or highly imbalanced (for improved accuracy). What is considered big is dependent on your accuracy setting and the statistical_threshold_data_size_large parameter in the config.toml file or in the Expert Settings. You can see if the data will be sampled by viewing the Experiment Preview when you set up the experiment. In the experiment preview below, I can see that my data was sampled down to 5 million rows for the final model, and to 100k rows for the feature evolution part of the experiment.

If Driverless AI decides to sample the data based on these settings and the data size, then Driverless AI performs the following types of sampling at the start of (and/or throughout) the experiment:

Random sampling for regression problems

Stratified sampling for classification problems

Imbalanced sampling for binary problems where the target distribution is considered imbalanced and imbalanced sampling methods are enabled (

imbalance_sampling_methodnot set to"off")

Imbalanced Model Sampling Methods¶

Imbalanced sampling techniques can help in binary classification use cases with highly imbalanced outcomes (churn, fraud, rare event modeling, etc.)

In Driverless AI, imbalanced sampling is an optional technique that is implemented with a special built-in custom recipe called Imbalanced Model. Two Imbalanced Models are available: ImbalancedLightGBMModel and ImbalancedXGBoostGBMModel. Both perform repeated stratified sampling (bagging) inside their fit() method in an attempt to speed up modeling and to improve the resolution of the decision boundary between the two classes. Because these models are presented a training dataset with a different prior than the original data, they require a probability correction that is performed as part of postprocessing in the predict() method.

When imbalanced sampling is enabled, no sampling is performed at the start of the experiment for either the feature evolution phase or the final model pipeline. Instead, sampling (with replacement) is performed during model fitting, and the model is presented a more balanced target class distribution than the original data. Because the sample is usually much smaller than the original data, this process can be repeated many times and each internal model’s prediction can be averaged to improve accuracy (bagging). By default, the number of bags is automatically determined, but this value can be specified in expert settings (imbalance_sampling_number_of_bags=-1 means automatic). For "over_under_sampling", each bag can have a slightly different balance between minority and majority classes.

There are multiple settings for imbalanced sampling:

Disabled (

imbalance_sampling_method="off", the default)Automatic (

imbalance_sampling_method="auto"). A combination of the two methods below.Under- and over-sample both minority and majority classes to reach roughly class balance in each sampled bag (

imbalance_sampling_method="over_under_sampling"). If original data has 500:10000 imbalance, this method could sample 1000:1500 samples for the first bag, 500:400 samples for the second bag, and so on.Under-sample the majority class to reach exact class balance in each sampled bag (

imbalance_sampling_method="under_sampling"). Would create 500:500 samples per bag for the same example imbalance ratio . Each bag would then sample the 500 rows from each class with replacement, so each bag is still different.

The amount of imbalance controls how aggressively imbalanced models are used for the experiment (if imbalance_sampling_method is not "off"):

By default, imbalanced is defined as when the majority class is 5 times more common than the minority class (

imbalance_ratio_sampling_threshold=5, configurable). In this case, imbalanced models are added to the list of models for the experiment to select from.By default, heavily imbalanced is defined as when the majority class is 25 times more common than the minority class (

heavy_imbalance_ratio_sampling_threshold=25, configurable). In highly imbalanced cases, imbalanced models are used exclusively.

Notes:

The binary imbalanced sampling techniques and settings described in this section apply only to the Imbalanced Model types listed above.

The data has to be large enough to enable imbalanced sampling: by default,

imbalance_sampling_threshold_min_rows_originalis set to 100,000 rows.If

imbalance_sampling_number_of_bags=-1(automatic) andimbalance_sampling_method="auto", the number of bags will be automatically determined by the experiment’s accuracy settings and by the total size of all bags together, controlled byimbalance_sampling_max_multiple_data_size, which defaults to1. So all bags together will be no larger than 1x the original data by default. For an imbalance of 1:19, each balanced 1:1 sample would be as large as 10% of the data, so it would take up to 10 such 1:1 bags (or approximately 10 if the balance is different or slightly random) to reach that limit. That’s still a bit much for feature evolution and could cost too much slowdown. That’s why the other limit of 3 (by default) for feature evolution exists. Feel free to adjust to your preferences.If

imbalance_sampling_number_of_bags=-1(automatic) andimbalance_sampling_method="over_under_sampling"or"under_sampling", the number of bags will be equal to the experiment’s accuracy settings (accuracy 7 will use 7 bags).The upper limit for the number of bags can be specified separately for feature evolution (

imbalance_sampling_max_number_of_bags_feature_evolution) and globally (i.e., final model) set by (imbalance_sampling_max_number_of_bags) and both will be strictly enforced.Instead of balancing the target class distribution via default value of

imbalance_sampling_target_minority_fraction=-1(same as setting it to 0.5), one can control the target fraction of the minority class. So if the data starts with a 1:1000 imbalance and you wish to model with a 1:9 imbalance, specifyimbalance_sampling_target_minority_fraction=0.1.To force the number of bags to 15 for all models, set

imbalance_sampling_number_of_bags=imbalance_sampling_max_number_of_bags_feature_evolution=imbalance_sampling_max_number_of_bags=15andimbalance_sampling_methodto either “over_under_sampling”`` orunder_sampling, based on your preferences.By default, Driverless AI will notify you if the imbalance ratio (majority to minority) is larger than 2, controlled by

imbalance_ratio_notification_threshold.Each parameter mentioned above is described in detail in the config.toml file, and also displayed in the expert options (on mouse-over hover), and each actual Imbalanced Model displays its parameter in the experiment logs.

Summary:

There are many options to control imbalanced sampling in Driverless AI. In most cases, it suffices to set imbalance_sampling_method="auto" and to compare results with an experiment without imbalanced sampling techniques imbalance_sampling_method="off"

In many cases, however, imbalanced models do not perform better than regular “full” models (especially if the majority class examples are important for the outcome, so any amount of sampling would hurt). It is never a bad idea to try imbalanced sampling techniques though, especially if the dataset is extremely large and highly imbalanced.