Launching Driverless AI¶

Driverless AI is tested on Chrome and Firefox but is supported on all major browsers. For the best user experience, we recommend using Chrome.

After Driverless AI is installed and started, open a browser and navigate to <server>:12345.

The first time you log in to Driverless AI, you will be prompted to read and accept the Evaluation Agreement. You must accept the terms before continuing. Review the agreement, then click I agree to these terms to continue.

Log in by entering unique credentials. For example:

Username: h2oai Password: h2oai

Note that these credentials do not restrict access to Driverless AI; they are used to tie experiments to users. If you log in with different credentials, for example, then you will not see any previously run experiments.

As with accepting the Evaluation Agreement, the first time you log in, you will be prompted to enter your License Key. Click the Enter License button, then paste the License Key into the License Key entry field. Click Save to continue. This license key will be saved in the host machine’s /license folder.

Note: Contact sales@h2o.ai for information on how to purchase a Driverless AI license.

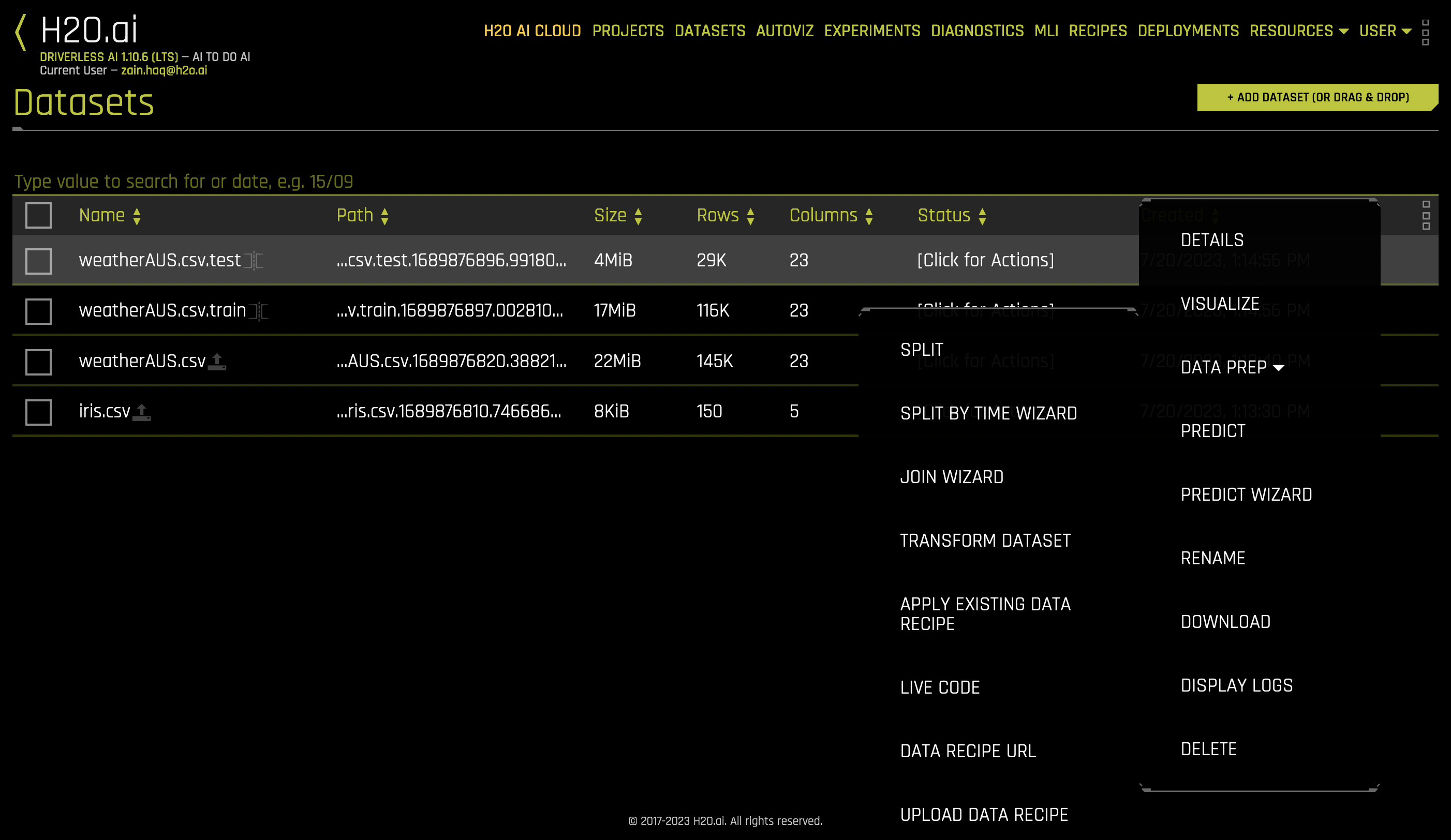

Upon successful completion, you will be ready to add datasets and run experiments.

Server startup files¶

The following is a list of files written by Driverless AI (DAI) on server startup:

pid files: These files are used to instruct later DAI stop Bash scripts on which processes to stop.

Standard output (stdout) log files: These log files are the standard output for different servers (given as prefix).

Standard error (stderr) log files: These log files are standard error for different servers (given as prefix).

TMPDIRdirectories: These are temporary directories used by various packages or servers.uploadsdirectory: This directory is where files are uploaded by the web server.funnelsdirectory: This directory is where certain forked processes store stderr or stdout files.sysdirectory: This directory is used by the system to perform various generic tasks.startup_job_userdirectory: This directory is used by the system to perform various startup tasks.

Note

Server logs and pid files are located in separate directories (server_logs and pids, respectively).

Resources¶

The Resources drop-down menu lets you view system information, download DAI clients, and view DAI-related tutorials and guides.

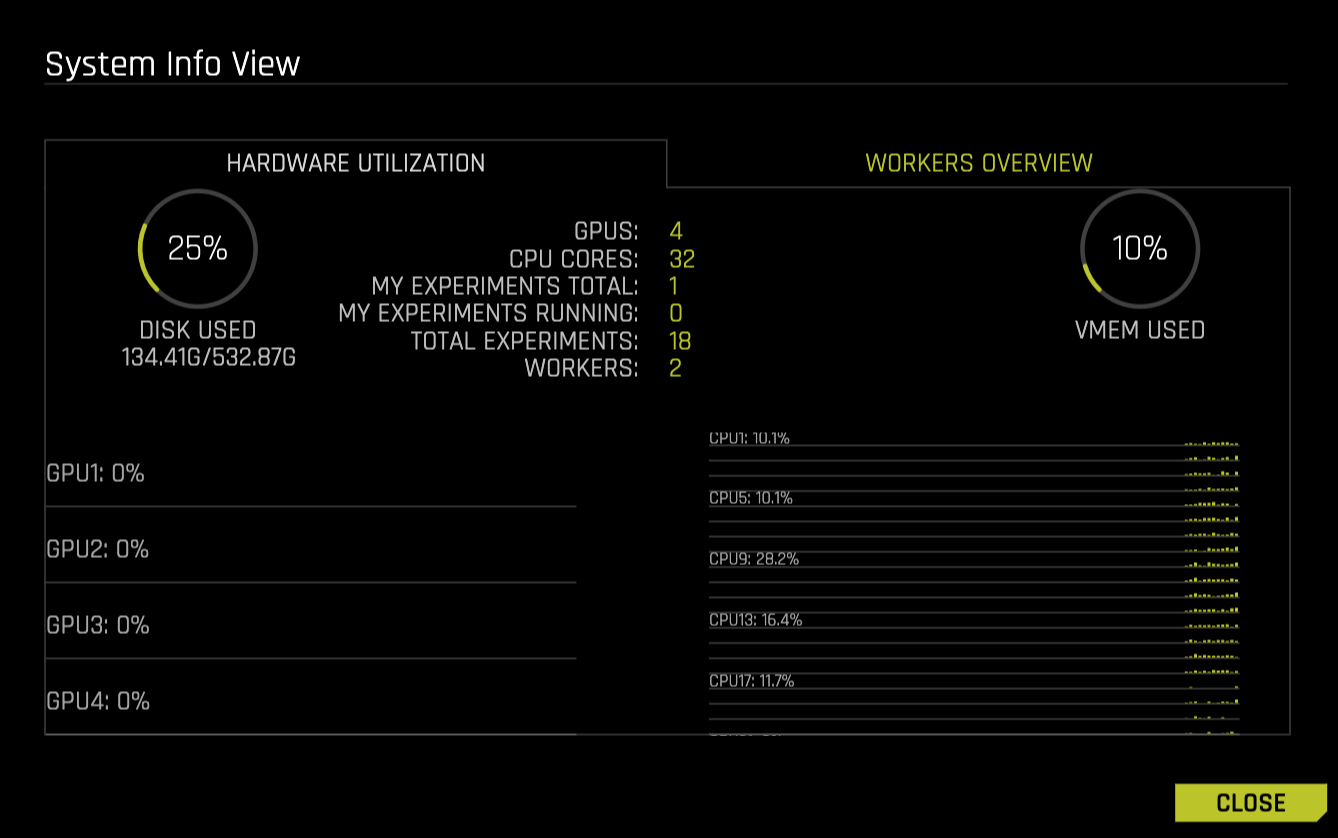

System Info: View information relating to hardware utilization and worker activity. Note that the worker activity tab is only available if Driverless AI was started in multinode mode. For more information on multinode training in DAI, see Multinode Training (Alpha).

Python Client: Download the Driverless AI Python client. For more information, see Python Client.

R Client: Download the Driverless AI R client. For more information, see R Client.

MOJO Java Runtime: Download the MOJO Java Runtime. For more information, see Driverless AI MOJO Scoring Pipeline - Java Runtime (With Shapley contribution).

MOJO Py Runtime: Download the MOJO Python Runtime. For more information, see Driverless AI MOJO Scoring Pipeline - C++ Runtime with Python (Supports Shapley) and R Wrappers.

MOJO R Runtime: Download the MOJO R Runtime. For more information, see Driverless AI MOJO Scoring Pipeline - C++ Runtime with Python (Supports Shapley) and R Wrappers.

Documentation: View the DAI documentation.

About: View version, current user, and license information for your Driverless AI install.

API Token: Click to retrieve an access token for authentication purposes.

User options¶

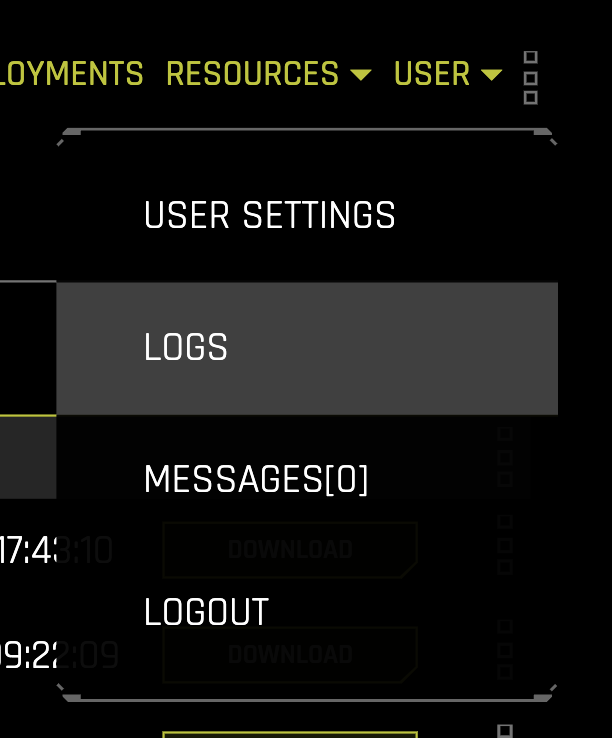

The following list describes the options that are available from the User drop-down menu.

User Settings: Configure various user-specific settings. For more information, see Driverless AI user settings.

Logs: Navigate to the Logs page, from which you can download server logs. Note that you must be an admin user to view the list of server logs.

Messages: View news and announcements relating to Driverless AI.

Logout: Log out of Driverless AI. Note that this option can be disabled by setting

disablelogout == true.