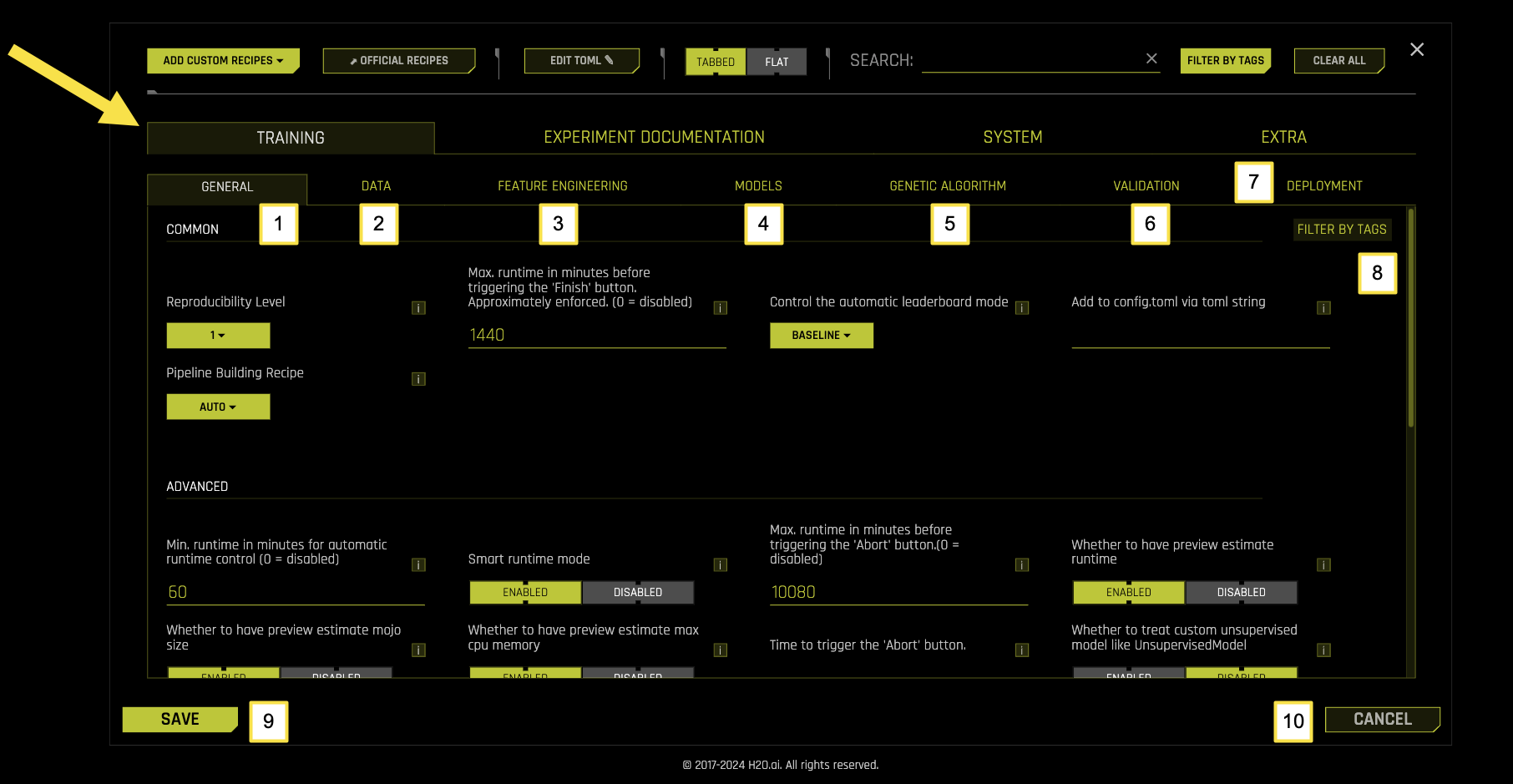

Training Settings

The TRAINING tab under EXPERT SETTINGS in Driverless AI offers detailed options for configuring and customizing the model training process. These settings enable you to fine-tune key elements of how DAI constructs, trains, and optimizes machine learning models for your experiments.

Training settings are organized into seven main sub-categories, each focusing on specific aspects of the training pipeline.

To access these settings, navigate to EXPERT SETTINGS > TRAINING tab from the EXPERIMENT SETUP page.

Refer to the table below for the actions you can take from the TRAINING page:

Training Sub-Categories

Sub-Category |

Description |

|---|---|

[1] General |

Core training parameters including reproducibility, pipeline building, runtime controls, and leaderboard behavior |

[2] Data |

Data preprocessing, sampling, validation, and handling settings |

[3] Feature Engineering |

Feature generation, selection, and transformation configurations |

[4] Models |

Model selection, hyperparameter tuning, and algorithm-specific settings |

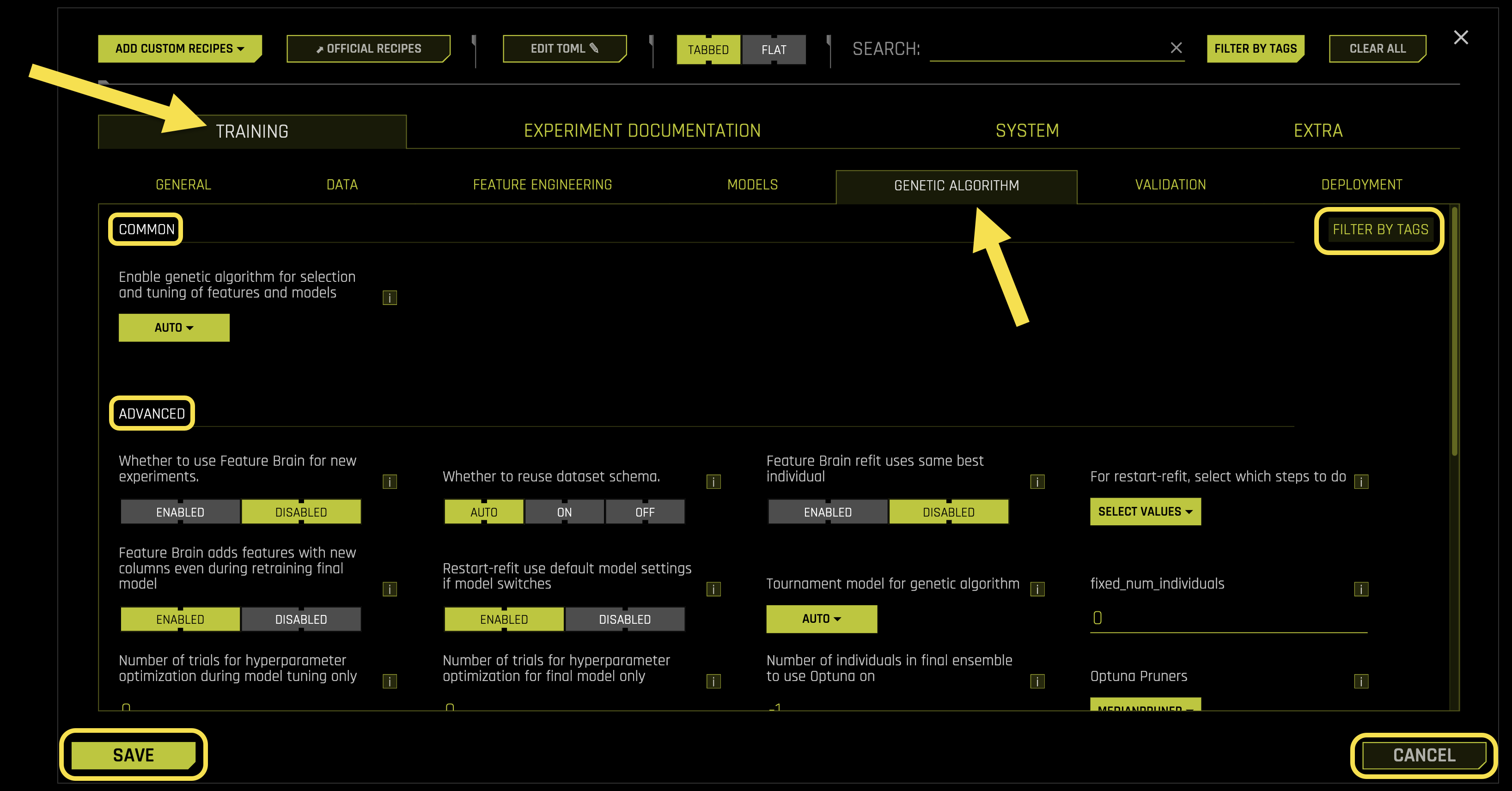

[5] Genetic Algorithm |

Optimization parameters for feature engineering and model selection |

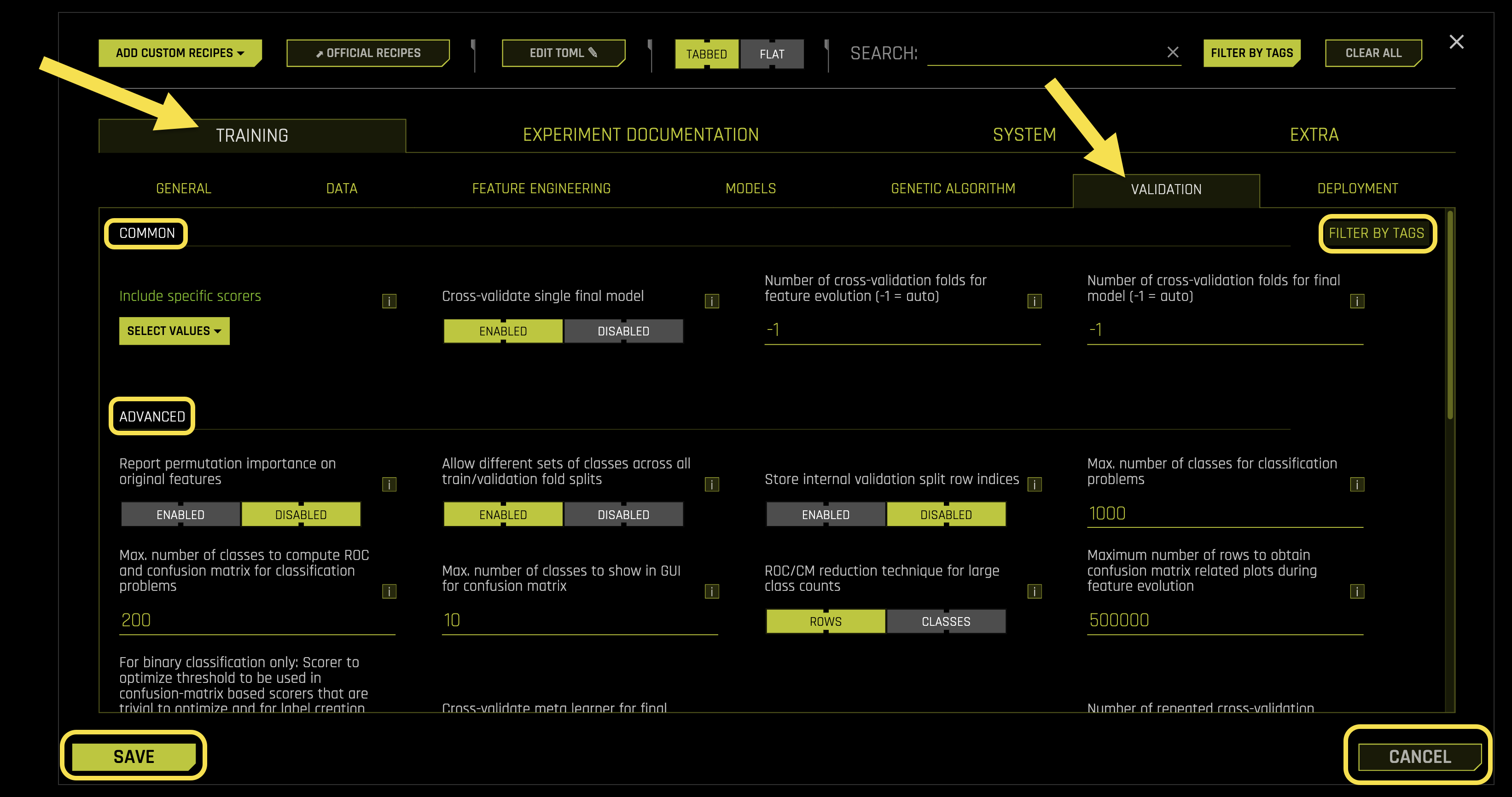

[6] Validation |

Cross-validation, holdout validation, and model evaluation settings |

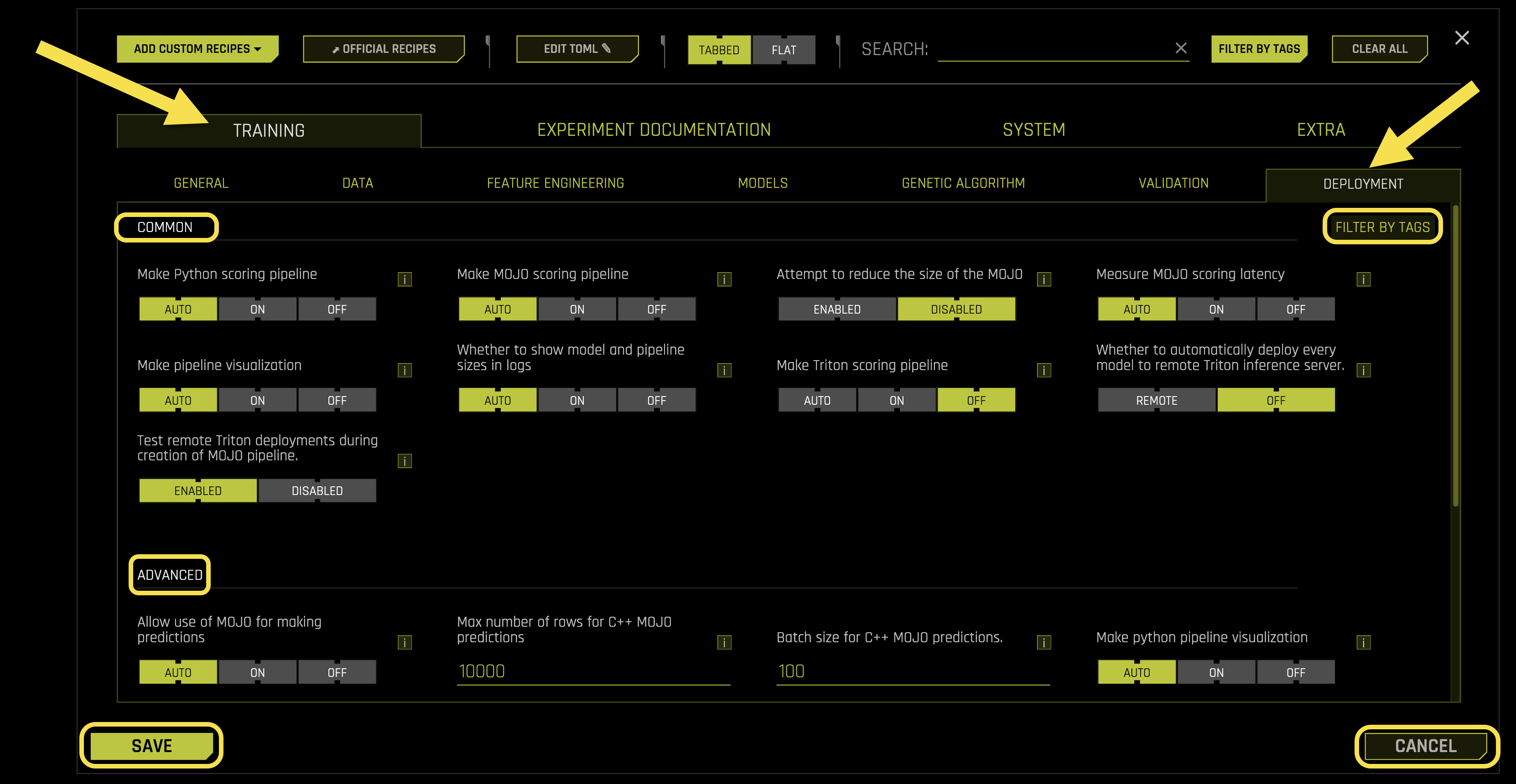

[7] Deployment |

Model deployment, MOJO generation, and scoring pipeline configurations |

[8] Filter by Tags |

Filter and organize training settings using custom tags and labels |

[9] Save |

Save the current training configuration settings |

[10] Cancel |

Cancel changes and return to the previous configuration |

참고

Changes to Training settings are applied when you launch the experiment. Some settings may affect experiment runtime and resource usage.

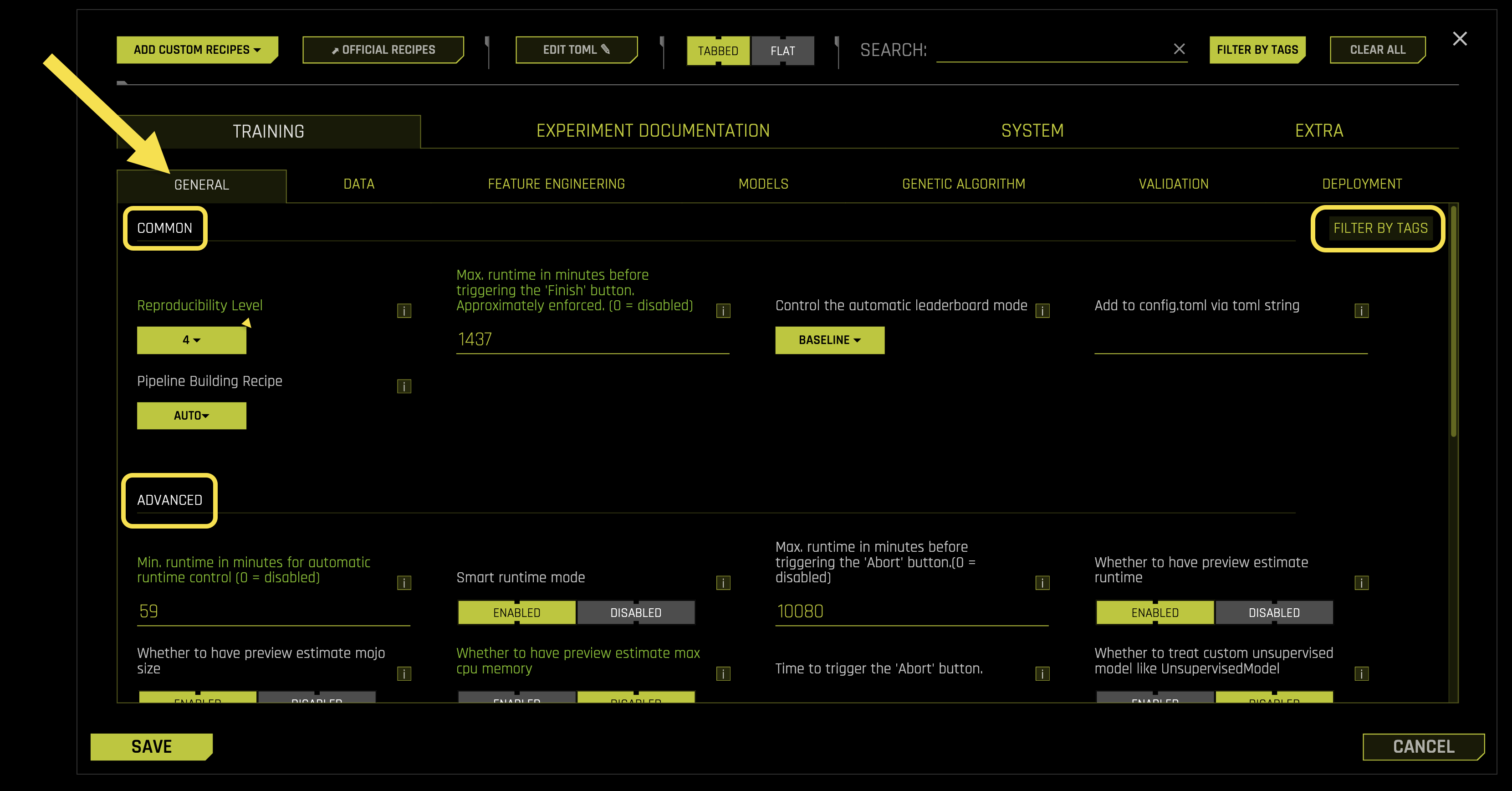

General

The General sub-tab contains core training parameters that control fundamental aspects of the experiment pipeline, reproducibility, and runtime behavior in Driverless AI (DAI).

Common Settings:

- Reproducibility Level

Controls how reproducible your experiments are when using the same data and inputs

How to enable: Check the 《Reproducible》 checkbox in the GUI or set a random seed via the APIs

- Supported levels:

1: Same Operating System (O/S), Central Processing Unit (CPU), and Graphics Processing Unit (GPU)

2: Same O/S and CPU/GPU architecture (different specific hardware allowed)

3: Same O/S and CPU architecture, no GPU required

4: Same O/S only (best effort)

Requires: Integer value (1-4, default: 1)

- Max. runtime in minutes before triggering the 〈Finish〉 button. Approximately enforced. (0 = disabled)

- What it does: If the experiment runs longer than this time limit, Driverless AI will:

Stop feature engineering and model tuning immediately

Build the final modeling pipeline

Create deployment artifacts

Note: This setting ignores model score convergence and predetermined iterations

Limitation: Only works when not in reproducible mode

Requires: Integer value (minutes, default: 1440, 0 = disabled)

- Control the automatic leaderboard mode

What it does: Controls how the leaderboard is automatically populated with models

- Available options:

〈baseline〉: Uses exemplar set with baseline models

〈random〉: Tests 10 random seeds

〈line〉: Creates good model with all features

〈line_all〉: Like 〈line〉 but enables all models/transformers

〈product〉: Performs Cartesian product exploration

Requires: String selection (default: 《baseline》)

- Add to config.toml via toml string

What it does: Allows you to set any configuration parameter using TOML (Tom’s Obvious Minimal Language) format

When to use: When a parameter isn’t available in Expert Mode but you want per-experiment control

Format: Enter TOML parameters as strings separated by newlines

Important: This setting overrides all other configuration choices

Example:

max_runtime_minutes = 120 accuracy = 8 time = 5

Requires: TOML string format

- Pipeline Building Recipe

What it does: Selects a predefined configuration template that overrides GUI settings

- Available recipes:

〈auto〉: Let Driverless AI choose the best recipe

〈compliant〉: Focus on interpretable, compliant models

〈monotonic_gbm〉: Use Gradient Boosting Machines with monotonic constraints

〈kaggle〉: Optimized for Kaggle competitions

〈nlp_model〉: For Natural Language Processing tasks

〈nlp_transformer〉: Advanced NLP with transformers

〈image_model〉: For image classification tasks

〈image_transformer〉: Advanced image processing with transformers

〈unsupervised〉: For unsupervised learning tasks

〈gpus_max〉: Maximize GPU utilization

〈more_overfit_protection〉: Enhanced overfitting protection

〈feature_store_mojo〉: Optimized for feature store deployment

Requires: String selection (default: 《auto》)

Advanced Settings:

- Min. runtime in minutes for automatic runtime control (0 = disabled)

What it does: Automatically sets the maximum runtime based on runtime estimates when preview estimates are enabled

How it works: If set to a non-zero value, Driverless AI will automatically adjust max_runtime_minutes using runtime estimates

To disable: Set to zero to prevent automatic runtime constraints

Requires: Integer value (minutes, default: 60, 0 = disabled)

- Smart runtime mode

What it does: Adjusts the maximum runtime based on the final number of base models

How it works: Helps ensure the entire experiment stops before the time limit by triggering the start of the final model at the right time

Requires: Boolean toggle (default: Enabled)

- Max. runtime in minutes before triggering the 〈Abort〉 button. (0 = disabled)

What it does: Automatically stops the experiment if it runs longer than this time limit

What happens when triggered: Driverless AI saves experiment artifacts created so far for summary and log zip files, but stops creating new artifacts

Requires: Integer value (minutes, default: 10080, 0 = disabled)

- Whether to have preview estimate runtime

What it does: Uses a model trained on many experiments to estimate how long your experiment will take

Limitation: May provide inaccurate estimates for experiment types not included in the training data

Requires: Boolean toggle (default: Enabled)

- Whether to have preview estimate MOJO size

What it does: Uses a model trained on many experiments to estimate the size of the MOJO (Model Object, Optimized) file

Limitation: May provide inaccurate estimates for experiment types not included in the training data

Requires: Boolean toggle (default: Enabled)

- Whether to have preview estimate max CPU memory

What it does: Uses a model trained on many experiments to estimate the maximum CPU memory usage

Limitation: May provide inaccurate estimates for experiment types not included in the training data

Requires: Boolean toggle (default: Enabled)

- Time to trigger the 〈Abort〉 button

What it does: Sets a specific date and time when the experiment should automatically stop

- Time formats accepted:

Date/time string in format: YYYY-MM-DD HH:MM:SS (default timezone: UTC)

Integer seconds since January 1, 1970 00:00:00 UTC (Unix timestamp)

Note: This is separate from the maximum runtime setting above

Requires: Time string or integer (empty by default)

- Whether to treat custom unsupervised model like Unsupervised Model

What it does: Controls how custom unsupervised models are configured

When enabled: You must specify each scorer, pretransformer, and transformer in the expert panel (like supervised experiments)

When disabled: Custom unsupervised models assume the model itself specifies these components

Requires: Boolean toggle (default: Disabled)

- Kaggle username

What it does: Your Kaggle username for automatic submission and scoring of test set predictions

How to get credentials: Visit Kaggle API credentials for setup instructions

Requires: String (empty by default)

- Kaggle key

What it does: Your Kaggle API key for automatic submission and scoring of test set predictions

How to get credentials: Visit Kaggle API credentials for setup instructions

Requires: String (empty by default)

- Kaggle submission timeout in seconds

What it does: Sets the maximum time to wait for Kaggle API calls to return scores for predictions

Requires: Integer value (seconds, default: 120)

- Random seed

What it does: Sets the seed for the random number generator to make experiments reproducible

How it works: Combined with the Reproducibility Level setting above, ensures consistent results

How to enable: Check the 《Reproducible》 checkbox in the GUI or set via the API

Requires: Integer value (default: 1234)

- Min. DAI iterations

What it does: Sets the minimum number of Driverless AI iterations before stopping the feature evolution process

Purpose: Acts as a safeguard against suboptimal early convergence

How it works: Driverless AI must run for at least this many iterations before deciding to stop, even if the score isn’t improving

Requires: Integer value (default: 0)

- Offset for default accuracy knob

What it does: Adjusts the default accuracy knob setting up or down

When to use negative values: If default models are too complex (set to -1, -2, etc.)

When to use positive values: If default models are not accurate enough (set to 1, 2, etc.)

Requires: Integer value (default: 0)

- Offset for default time knob

What it does: Adjusts the default time knob setting up or down

When to use negative values: If default experiments are too slow (set to -1, -2, etc.)

When to use positive values: If default experiments finish too fast (set to 1, 2, etc.)

Requires: Integer value (default: 0)

- Offset for default interpretability knob

What it does: Adjusts the default interpretability knob setting up or down

When to use negative values: If default models are too simple (set to -1, -2, etc.)

When to use positive values: If default models are too complex (set to 1, 2, etc.)

Requires: Integer value (default: 0)

- last_recipe

What it does: Internal helper that remembers if the recipe was changed

Note: This is an advanced setting for internal use

Requires: String (empty by default)

- recipe_dict

What it does: Dictionary to control recipes for each experiment and custom recipes

Use case: Pass hyperparameters to custom recipes

Example format: {〈key1〉: 2, 〈key2〉: 〈value2〉}

Requires: Dictionary format (default: {})

- mutation_dict

What it does: Dictionary to control mutation parameters for the genetic algorithm

Use case: Fine-tune genetic algorithm mutation behavior

Example format: {〈key1〉: 2, 〈key2〉: 〈value2〉}

Requires: Dictionary format (default: {})

- Whether to validate recipe names

What it does: Validates recipe names provided in included lists (like included_models)

When disabled: Logs warnings to server logs and ignores invalid recipe names

Requires: Boolean toggle (default: Enabled)

- Timeout in minutes for testing acceptance of each recipe

What it does: Sets the maximum time to wait for recipe acceptance testing

What happens on timeout: The recipe is rejected if acceptance testing is enabled and times out

Advanced option: You can set timeout for specific recipes using the class’s staticmethod function called acceptance_test_timeout

Note: This timeout doesn’t include time to install required packages

Requires: Float value (minutes, default: 20.0)

- Recipe Activation List

What it does: Lists recipes (organized by type) that are applicable for the experiment

Use case: Especially useful for 《experiment with same params》 where you want to use the same recipe versions as the parent experiment

Format: {《transformers》:[],》models》:[],》scorers》:[],》data》:[],》individuals》:[]}

Requires: Dictionary format (default: {《transformers》:[],》models》:[],》scorers》:[],》data》:[],》individuals》:[]})

Time Series:

- Whether to disable time-based limits when reproducible is set

What it does: Controls whether to disable time limits when reproducibility is enabled

The problem: When reproducible mode is set, experiments may take arbitrarily long for the same dials, features, and models

When disabled: Allows experiment to complete after fixed time while keeping model and feature building reproducible and seeded

Trade-off: Overall experiment behavior may not be reproducible if later iterations would have been used in final model building

When to enable: If you need every seeded experiment with the same setup to generate the exact same final model, regardless of duration

Requires: Boolean toggle (default: Enabled)

- Control the automatic time-series leaderboard mode

What it does: Controls how the time-series leaderboard is automatically populated

- Available options:

〈diverse〉: Explores a diverse set of models built using various expert settings

〈sliding_window〉: Creates a separate model for each (gap, horizon) pair

Requires: String selection (default: 《diverse》)

- Number of periods per model if time_series_leaderboard_mode is 〈sliding_window〉

What it does: Fine-control to limit the number of models built in 〈sliding_window〉 mode

Effect: Larger values lead to fewer models being built

Requires: Integer value (default: 1)

Data

The Data sub-tab manages data preprocessing, sampling strategies, validation methods, and data handling configurations in Driverless AI.

Common Settings:

- Drop constant columns

What it does: Removes columns that have the same value in all rows

Why it helps: Constant columns provide no information for machine learning

Requires: Boolean toggle (default: Enabled)

- Drop ID columns

What it does: Removes columns that appear to be unique identifiers (like customer IDs, transaction IDs)

Why it helps: ID columns can cause overfitting and don’t generalize to new data

Requires: Boolean toggle (default: Enabled)

- Leakage detection

What it does: Checks for data leakage, which occurs when future information accidentally appears in training data

How it works: Uses LightGBM (Light Gradient Boosting Machine) model for detection when possible

Fold column handling: If you have a fold column, leakage detection works without using it

Time series note: This option is automatically disabled for time-series experiments

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Data distribution shift detection

What it does: Detects when the statistical distribution of data changes between training and validation/test sets

Why it matters: Distribution shift can cause models to perform poorly on new data

How it works: Uses LightGBM model to detect shifts when possible

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Detect duplicate rows

What it does: Identifies duplicate rows in training, validation, and testing datasets

When it runs: After type detection and column dropping, just before the experiment starts

Important: This is informational only - to actually drop duplicate rows, use the 《Drop duplicate rows in training data》 setting below

Performance: Uses sampling for large datasets to avoid memory issues

Requires: Boolean toggle (default: Enabled)

- Don’t drop any columns

What it does: Prevents Driverless AI from dropping any columns (original or derived)

When to use: When you want to keep all columns regardless of their characteristics

Requires: Boolean toggle (default: Disabled)

- Min. number of rows needed to run experiment

What it does: Sets the minimum number of rows required to run experiments

Why it matters: Ensures enough data to create statistically reliable models and avoid small-data failures

Warning: Values lower than 100 might not work properly

Requires: Integer value (default: 100)

- Compute correlation matrix

What it does: Creates correlation matrices for training, validation, and test data and saves them as PDF files

Output: Generates both table and heatmap visualizations saved to disk

Performance warning: Currently single-threaded and becomes very slow with many columns

Requires: Boolean toggle (default: Disabled)

- Data distribution shift detection on transformed features

What it does: Detects distribution shifts in the final model’s transformed features between training and test data

Why it matters: Helps identify if feature transformations are causing distribution changes

How it works: Uses LightGBM model for detection when possible

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Include specific data recipes during experiment

What it does: Allows you to include custom data processing recipes during the experiment

Two types of data recipes: * Pre-experiment: Adds new datasets or modifies data outside the experiment (via file/URL) * Run-time: Modifies data during the experiment and Python scoring

This setting applies to: Run-time data recipes (the 2nd type)

Important requirements: Run-time recipes must preserve the same column names for: target, weight_column, fold_column, time_column, and time group columns

Note: Pre-experiment recipes can create any new data but won’t be part of the scoring package

Requires: List format (default: [])

- Drop duplicate rows in training data

What it does: Removes duplicate rows from training data at the start of the experiment

When it runs: At the beginning of Driverless AI, using only the columns you specify

Time limit: Process is limited by drop_duplicate_rows_timeout seconds

- Available options:

〈auto〉: Let Driverless AI decide (default: off)

〈weight〉: Convert duplicates into a weight column

〈drop〉: Remove duplicate rows

〈off〉: Do not drop duplicates

Requires: String selection (default: 《auto》)

- Features to drop, e.g. [《V1》, 《V2》, 《V3》]

What it does: Allows you to specify columns to drop in bulk

Why it’s useful: You can copy-paste large lists instead of selecting each column individually in the GUI

Example: [《V1》, 《V2》, 《V3》] will drop columns named V1, V2, and V3

Requires: List format (default: [])

Advanced Settings:

- Data distribution shift detection drop of features

What it does: Automatically removes features that show high distribution shift between training and test data

Time series note: Automatically disabled for time series experiments

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Max allowed feature shift (AUC) before dropping feature

What it does: Sets the threshold for dropping features that show high distribution shift

How it works: Drops features (except ID, text, date/datetime, time, weight columns) when their shift metrics exceed this value

Metrics used: AUC (Area Under Curve), GINI coefficient, or Spearman correlation

AUC explanation: Measures how well a binary classifier can predict whether a feature value belongs to training or test data

Requires: Float value (default: 0.999)

- Leakage detection dropping AUC/R2 threshold

What it does: Sets the threshold for dropping features that show data leakage

How it works: Drops features when their leakage metrics exceed this value

Metrics used: AUC (Area Under Curve) for classification, R² (R-squared) for regression, GINI coefficient, or Spearman correlation

Fold column note: If you have a fold column, features won’t be dropped because leakage testing works without using the fold column

Requires: Float value (default: 0.999)

- Max rows x columns for leakage

What it does: Sets the maximum dataset size (rows × columns) before triggering sampling for leakage checks

Why it’s needed: Large datasets are sampled to avoid memory issues during leakage detection

Sampling method: Uses stratified sampling to maintain data distribution

Requires: Integer value (default: 10000000)

- Enable Wide Rules

What it does: Enables special rules to handle 《wide》 datasets (where number of columns > number of rows)

When to use: Wide datasets can cause memory and performance issues with standard algorithms

Force enable: Setting to 《on》 forces these rules regardless of dataset dimensions

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Wide rules factor

What it does: Sets the threshold for automatically enabling wide dataset rules

How it works: If columns > (wide_factor × rows), then wide rules are enabled (when set to 《auto》)

Automatic feature: For datasets where columns > rows, Random Forest is always enabled

Requires: Float value (default: 5.0)

- Max. allowed fraction of uniques for integer and categorical cols

What it does: Sets the maximum fraction of unique values allowed for integer and categorical columns

Why it matters: Columns with too many unique values are treated as ID columns and dropped

Default behavior: Columns with >95% unique values are considered IDs and removed

Requires: Float value (default: 0.95)

- Allow treating numerical as categorical

What it does: Allows numerical columns to be treated as categorical when appropriate

When it’s useful: Integer columns that represent codes (like 1=Male, 2=Female) rather than true numerical values

Why it’s restrictive to disable: Even columns with few categorical levels that happen to be numerical won’t be encoded as categorical

Requires: Boolean toggle (default: Enabled)

- Max. number of unique values for int/float to be categoricals

What it does: Sets the maximum number of unique values for integer/real columns to be treated as categorical

Testing scope: Only applies to the first portion of data (for performance reasons)

Requires: Integer value (default: 50)

- Max. number of unique values for int/float to be categoricals if violates Benford’s Law

What it does: Sets the maximum unique values for columns that violate Benford’s Law

Benford’s Law: A statistical law that describes the distribution of leading digits in many real-world datasets

When it applies: For numerical features that violate Benford’s Law (appear ID-like but aren’t entirely IDs)

Testing scope: Only applies to the first portion of data (for performance reasons)

Requires: Integer value (default: 10000)

- Max. fraction of numeric values to be non-numeric (and not missing) for a column to still be considered numeric

What it does: Allows columns with some non-numeric values to still be treated as numeric

When it helps: Useful for minor data quality issues during experimentation

Production warning: Values > 0 are not recommended for production use

What happens: Non-numeric values are replaced with missing values, so some information is lost

To disable: Set to a negative value

Requires: Float value (default: 0.0)

- Threshold for string columns to be treated as text (0.0 - text, 1.0 - string)

What it does: Determines whether string columns are treated as text (for NLP) or categorical variables

How it works: Uses internal heuristics to score how 《text-like》 a string column is

- Threshold behavior:

Higher values (closer to 1.0): Favor treating strings as categorical variables

Lower values (closer to 0.0): Favor treating strings as text for NLP processing

Override option: Set string_col_as_text_min_relative_cardinality=0.0 to force text treatment despite low unique count

Requires: Float value (default: 0.3)

- Number of pipeline layers

What it does: Sets the number of full pipeline layers in the experiment

Note: This doesn’t include the preprocessing layer when pretransformers are included

Requires: Integer value (default: 1)

- Whether to do hyperopt for leakage/shift

What it does: Enables hyperparameter tuning during leakage/shift detection

When it works: Only active if num_inner_hyperopt_trials_prefinal > 0

When it’s useful: Can help find non-trivial leakage/shift patterns, but usually not necessary

Requires: Boolean toggle (default: Disabled)

- Whether to do hyperopt for leakage/shift for each column

What it does: Enables hyperparameter tuning for each individual column during leakage/shift detection

When it works: Only active if num_inner_hyperopt_trials_prefinal > 0

Requires: Boolean toggle (default: Disabled)

- Max. num. of rows x num. of columns for feature evolution data splits (not for final pipeline)

What it does: Sets the maximum dataset size for feature evolution (the process that determines which features will be derived)

Applies to: Both training and validation/holdout splits during feature evolution

Accuracy dependency: The actual value used depends on your accuracy settings

Requires: Integer value (default: 300000000)

- Max. num. of rows x num. of columns for reducing training data set (for final pipeline)

What it does: Sets the maximum dataset size for training the final pipeline

Purpose: Controls memory usage during final model training

Requires: Integer value (default: 1000000000)

- Limit validation size

What it does: Automatically limits validation data size to control memory usage

How it works: Uses different size limits for feature evolution and final model training

Feature evolution: Uses feature_evolution_data_size (shown as max_rows_feature_evolution in logs)

Final model: Uses final_pipeline_data_size and max_validation_to_training_size_ratio_for_final_ensemble

Requires: Boolean toggle (default: Enabled)

- Max number of columns to check for redundancy in training dataset

What it does: Limits the number of columns checked for redundancy to avoid performance issues

How it works: If the dataset has more columns than this limit, only the first N columns are checked

To disable: Set to 0

Requires: Integer value (default: 1000)

- Limit of dataset size in rows x cols for data when detecting duplicate rows

What it does: Sets the maximum dataset size for duplicate row detection

How it works: If > 0, uses this as the sampling size for duplicate detection

To check all data: Set to 0 to perform checks on datasets of any size

Requires: Integer value (default: 10000000)

- Leakage feature detection AUC/R2 threshold

What it does: Sets the threshold for triggering per-feature leakage detection

How it works: When leakage detection is enabled, if AUC (R² for regression) on original data exceeds this value, per-feature leakage detection is triggered

Requires: Float value (default: 0.95)

- Leakage features per feature detection AUC/R2 threshold

What it does: Sets the threshold for showing features that may have leakage

How it works: Shows features where AUC (R² for regression) for individual feature prediction exceeds this value

Feature dropping: Features are dropped if their AUC/R² exceeds the drop_features_leakage_threshold_auc setting

Requires: Float value (default: 0.8)

Image:

- Image download timeout in seconds

What it does: Sets the maximum time to wait for image downloads when images are provided by URL

Requires: Integer value (seconds, default: 60)

- Max allowed fraction of missing values for image column

What it does: Sets the maximum fraction of missing values allowed for a string column to be considered as image paths

Purpose: Helps identify which string columns contain image URIs

Requires: Float value (default: 0.1)

- Min. fraction of images that need to be of valid types for image column to be used

What it does: Sets the minimum fraction of image URIs that must have valid file extensions for a column to be treated as image data

File types: Valid endings are defined by string_col_as_image_valid_types

Requires: Float value (default: 0.8)

NLP:

- Fraction of text columns out of all features to be considered a text-dominated problem

What it does: Sets the threshold for considering a dataset as text-dominated based on the fraction of text columns

Purpose: Helps Driverless AI determine when to apply NLP-specific processing

Requires: Float value (default: 0.3)

- Fraction of text per all transformers to trigger that text dominated

What it does: Sets the threshold for triggering text-dominated processing based on the fraction of text transformers

Purpose: Additional criterion for determining when to apply NLP-specific processing

Requires: Float value (default: 0.3)

- string_col_as_text_min_relative_cardinality

What it does: Sets the minimum fraction of unique values for string columns to be considered as text (rather than categorical)

Purpose: Helps distinguish between text data and categorical data

Requires: Float value (default: 0.1)

- string_col_as_text_min_absolute_cardinality

What it does: Sets the minimum number of unique values for string columns to be considered as text

Purpose: Absolute threshold for text classification (used if relative threshold isn’t met)

Requires: Integer value (default: 10000)

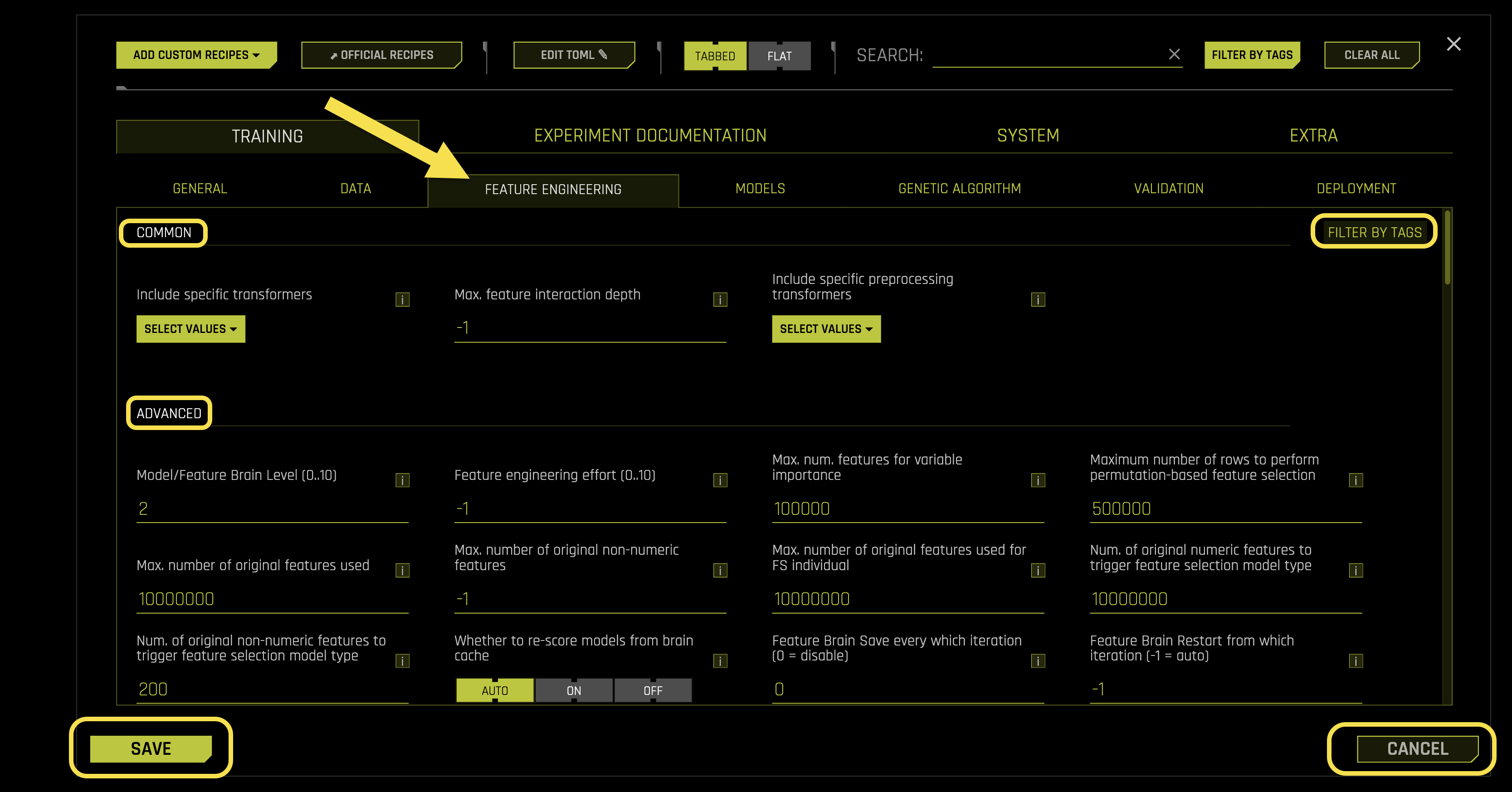

Feature Engineering

The Feature Engineering sub-tab configures feature generation, selection algorithms, and transformation settings in Driverless AI.

Configuration Options:

Common Settings:

- Include specific transformers

What it does: Selects specific transformers (feature engineering algorithms) to use during the experiment

How transformers work: They derive new features from original features using various mathematical and statistical techniques

Example transformers: OriginalTransformer, CatOriginalTransformer, NumCatTETransformer, CVTargetEncodeF, NumToCatTETransformer, ClusterTETransformer

Note: This doesn’t affect preprocessing transformers (controlled by included_pretransformers)

Requires: List format (default: [])

- Max. feature interaction depth

What it does: Controls how many features can be combined together to create new features

Why it matters: Feature interactions (like feature1 + feature2 or feature1 × feature2) can improve predictive performance

How it works: Sets the maximum number of features that can be combined at once to create a single new feature

Mixed feature types: For transformers using both numeric and categorical features, this constrains each type separately, not the total

Trade-off: Higher values may create more predictive models but take longer to process

Auto mode: Set to -1 for automatic selection

Requires: Integer value (default: -1)

- Include specific preprocessing transformers

What it does: Selects transformers to run before the main feature engineering layer

How it works: Preprocessing transformers take original features and create new features that become input for the main transformer layer. In order to do a time series experiment with the GUI/client auto-selecting groups, periods, etc. the dataset must have time column and groups prepared ahead of experiment by user or via a one-time data recipe.

Flexibility: Any BYOR (Bring Your Own Recipe) transformer or native Driverless AI transformer can be used

Example use cases: Interactions, string concatenations, date extractions as preprocessing steps

Pipeline flow: Preprocessing → Main transformers → Final features

Requires: List format (default: [])

Advanced Settings:

- Model/Feature Brain Level (0..10)

What it does: Controls the use of H2O.ai Brain - a local caching system that reuses results from previous experiments

Benefits: Generates more useful features and models by learning from past experiments

Checkpointing: Also controls checkpointing for paused or interrupted experiments

- Cache matching criteria: Driverless AI uses cached results when:

Column names and types match

Experiment type is similar

Classes and class labels exactly match

Basic time series choices match

Cache interpretability is equal or lower

Requires: Integer value (0-10, default: 2)

- Feature engineering effort (0..10)

What it does: Controls how much computational effort to spend on feature engineering

Auto mode (-1): Automatically chooses effort level (usually 5, but 1 for wide datasets)

- Effort levels:

0: Keep only numeric features, focus on model tuning

1: Keep numeric features + frequency-encoded categoricals, focus on model tuning

2: Like level 1 but no text features, some feature tuning before evolution

3: Like level 5 but only tuning during evolution, mixed feature/model tuning

4: Like level 5, slightly more focused on model tuning

5: Default - balanced feature and model tuning

6-7: Like level 5, slightly more focused on feature engineering

Requires: Integer value (-1 to 10, default: -1)

- Max. num. features for variable importance

What it does: Sets the maximum number of features to display in importance tables

Interpretability interaction: When interpretability > 1, features with lower importance than this threshold are automatically removed

Feature pruning: Less important features are pruned to improve model performance

Performance warning: Higher values can reduce performance and increase disk usage for datasets with >100k columns

Requires: Integer value (default: 100000)

- Maximum number of rows to perform permutation-based feature selection

What it does: Sets the maximum number of rows to use for permutation-based feature importance calculations

Sampling method: Uses stratified random sampling to reduce large datasets to this size

Purpose: Controls computational cost while maintaining statistical validity

Requires: Integer value (default: 500000)

- Max. number of original features used

What it does: Limits the number of original columns selected for feature engineering

Selection method: Based on how well target encoding (or frequency encoding) works on categorical and numeric features

Purpose: Reduces final model complexity by selecting only the best original features

Process: Best features are selected first, then used for feature evolution and modeling

Requires: Integer value (default: 10000000)

- Max. number of original non-numeric features

What it does: Limits the number of non-numeric (categorical) columns selected for feature engineering

Feature selection trigger: When exceeded, feature selection is performed on all features

Auto mode (-1): Uses default settings, but can be increased up to 10x for small datasets

Requires: Integer value (default: -1)

- Max. number of original features used for FS individual

What it does: Similar to max_orig_cols_selected, but creates a special genetic algorithm individual with reduced original columns

Purpose: Adds diversity to the genetic algorithm by including individuals with fewer original features

Requires: Integer value (default: 10000000)

- Num. of original numeric features to trigger feature selection model type

What it does: Similar to max_orig_numeric_cols_selected, but for special genetic algorithm individuals

Genetic algorithm: Creates a separate individual using feature selection by permutation importance on original features

Requires: Integer value (default: 10000000)

- Num. of original non-numeric features to trigger feature selection model type

What it does: Similar to max_orig_nonnumeric_cols_selected, but for special genetic algorithm individuals

Genetic algorithm: Creates a separate individual using feature selection by permutation importance on original features

Requires: Integer value (default: 200)

- Whether to re-score models from brain cache

What it does: Controls whether to re-score models from the H2O.ai Brain cache

- Options:

〈auto〉: Smartly keeps scores to avoid re-processing steps

〈on〉: Always re-score models

〈off〉: Never re-score models

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Feature Brain Save every which iteration (0 = disable)

What it does: Controls how often to save feature brain iterations for checkpointing

How it works: Saves every N iterations where N is this value, enabling restart/refit from specific iterations

To disable: Set to 0

Requires: Integer value (default: 0)

- Feature Brain Restart from which iteration (-1 = auto)

What it does: Chooses which iteration to restart from when doing restart or re-fit operations

Auto mode (-1): Uses the last best iteration

- Usage steps:

Run an experiment with feature_brain_iterations_save_every_iteration=1 (or another number)

Identify which iteration brain dump you want to restart/refit from

Restart/Refit from the original experiment, setting which_iteration_brain to that number

Important: If restarting from a tuning iteration, this pulls in the entire scored tuning population for feature evolution

Requires: Integer value (default: -1)

- Max. number of engineered features (-1 = auto)

What it does: Limits the maximum number of engineered features allowed per model

Gene control: Controls the number of genes (transformer instances) before features are scored

Pruning behavior: If pruning occurs before scoring, genes are randomly sampled; if after scoring, aggregated gene importances are used

Includes: All possible transformers, including original transformer for numeric features

Auto mode (-1): No restrictions except internally-determined memory and interpretability limits

Requires: Integer value (default: -1)

- Max. number of genes (transformer instances) (-1 = auto)

What it does: Sets the maximum number of genes (transformer instances) per model

Ensemble handling: Applies to each model within the final ensemble if applicable

Includes: All possible transformers, including original transformer for numeric features

Auto mode (-1): No restrictions except internally-determined memory and interpretability limits

Requires: Integer value (default: -1)

- Min. number of genes (transformer instances) (-1 = auto)

What it does: Sets the minimum number of genes (transformer instances) per model

Purpose: Ensures models have at least this many transformer instances

Requires: Integer value (default: -1)

- Limit features by interpretability

What it does: Controls whether to limit feature counts based on the interpretability setting

How it works: Uses features_allowed_by_interpretability to automatically limit features when interpretability is set

Requires: Boolean toggle (default: Enabled)

- Fixed feature interaction depth

What it does: Sets a fixed number of columns to use for each transformer instead of random sampling

Default behavior (0): Uses random sampling from min to max columns

Fixed mode: Choose a specific number of columns to use consistently

Use all columns: Set to match the number of columns to use all available columns (if allowed by transformer)

Hybrid mode (-n): Uses 50% random sampling and 50% fixed selection of n features

Requires: Integer value (default: 0)

- Select target transformation of the target for regression problems

What it does: Chooses how to transform the target variable for regression problems

- Available transformations:

〈auto〉: Let Driverless AI choose automatically

〈identity〉: No transformation (default)

〈identity_noclip〉: No transformation, no clipping

〈center〉: Center the data (subtract mean)

〈standardize〉: Standardize (z-score normalization)

〈unit_box〉: Box-Cox transformation

〈log〉: Logarithmic transformation

〈log_noclip〉: Logarithmic without clipping

〈square〉: Square transformation

〈sqrt〉: Square root transformation

〈double_sqrt〉: Double square root transformation

〈inverse〉: Inverse transformation

〈anscombe〉: Anscombe transformation

〈logit〉: Logit transformation

〈sigmoid〉: Sigmoid transformation

Requires: String selection (default: 《auto》)

- Select all allowed target transformations of the target for regression problems when doing target transformer tuning

What it does: Specifies which target transformations to test during target transformer tuning

When it’s used: Only when target_transformer=〉auto〉 and accuracy >= tune_target_transform_accuracy_switch

Purpose: Allows Driverless AI to automatically select the best target transformation from this list

Requires: List format (default: [〈identity〉, 〈identity_noclip〉, 〈center〉, 〈standardize〉, 〈unit_box〉, 〈log〉, 〈square〉, 〈sqrt〉, 〈double_sqrt〉, 〈anscombe〉, 〈logit〉, 〈sigmoid〉])

- Enable Target Encoding (auto disables for time series)

What it does: Controls whether target encoding techniques are enabled

Target encoding explained: Feature transformations that use target variable information to represent categorical features (CV target encoding, weight of evidence, etc.)

Time series note: Automatically disabled for time series experiments

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Enable outer CV for Target Encoding

What it does: Enables outer cross-fold validation for target encoding when GINI coefficient issues are detected

When it triggers: When GINI coefficient flips sign or shows inconsistent signs between training and validation data

How it helps: Uses fold-averaging of lookup tables instead of global lookup tables when GINI performance is poor

Requires: Boolean toggle (default: Enabled)

- Enable outer CV for Target Encoding with overconfidence protection

What it does: Adds overconfidence protection to outer cross-fold validation for target encoding

How it works: Increases the number of outer folds or aborts target encoding when GINI coefficients are inconsistent between training and validation data

Purpose: Prevents overfitting by ensuring consistent performance across different data splits

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Enable Lexicographical Label Encoding

What it does: Enables lexicographical (alphabetical) label encoding for categorical features

How it works: Assigns numerical values to categorical labels based on alphabetical order

Requires: String selection (default: 《off》, options: 《auto》, 《on》, 《off》)

- Enable Isolation Forest Anomaly Score Encoding

What it does: Enables Isolation Forest anomaly score encoding for anomaly detection features

Purpose: Creates features that help identify unusual or anomalous data points

Requires: String selection (default: 《off》, options: 《auto》, 《on》, 《off》)

- Enable One HotEncoding (auto enables only for GLM)

What it does: Controls whether one-hot encoding is enabled for categorical features

Auto behavior: Only applied for small datasets and GLM (Generalized Linear Model) algorithms

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- binner_cardinality_limiter

What it does: Limits the total number of bins created by all BinnerTransformers

Scaling: The limit is scaled by accuracy, interpretability, and dataset size settings

Unlimited: Set to 0 for no limits

Requires: Integer value (default: 50)

- Enable BinnerTransformer for simple numeric binning (auto enables only for GLM/FTRL/TensorFlow/GrowNet)

What it does: Enables simple binning of numeric features for linear models

Auto behavior: Only enabled for GLM/FTRL/TensorFlow/GrowNet algorithms, time-series, or when interpretability >= 6

Why it helps: Exposes more signal for features that aren’t linearly correlated with the target

Interpretability advantage: More interpretable than NumCatTransformer and NumToCatTransformer (which also do target encoding)

Time series support: Works with time series data

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Select methods used to find bins for Binner Transformer

What it does: Chooses the method for determining bin boundaries in numeric features

- Available methods:

〈tree〉: Uses XGBoost to find optimal split points

〈quantile〉: Uses quantile-based binning (equal-sized bins)

Fallback behavior: May fall back to quantile-based if too many classes or insufficient unique values

Requires: List format (default: [《tree》], options: [《tree》, 《quantile》])

- Enable automatic reduction of number of bins for Binner Transformer

What it does: Automatically reduces the number of bins during numeric feature binning

Applies to: Both tree-based and quantile-based binning methods

Purpose: Optimizes bin count for better model performance

Requires: Boolean toggle (default: Enabled)

- Select encoding schemes for Binner Transformer

What it does: Chooses how to encode numeric values within bins (one column per bin, plus one for missing values)

- Available schemes:

〈piecewise_linear〉: 0 left of bin, 1 right of bin, linear growth 0→1 inside bin

〈binary〉: 1 inside bin, 0 outside bin

Missing values: Always encoded as binary (0 or 1)

Benefits: Both schemes help models detect non-linear patterns that they might otherwise miss

Requires: List format (default: [《piecewise_linear》, 《binary》], options: [《piecewise_linear》, 《binary》])

- Include Original feature value as part of output of Binner Transformer

What it does: Includes the original feature value as an output feature alongside the binned features

Why it helps: Ensures BinnerTransformer never has less signal than OriginalTransformer

Use case: Important when transformers can be chosen exclusively

Requires: Boolean toggle (default: Enabled)

- Num. Estimators for Isolation Forest Encoding

What it does: Sets the number of trees (estimators) used for Isolation Forest anomaly score encoding

Trade-off: More estimators improve accuracy but increase computational cost

Requires: Integer value (default: 200)

- one_hot_encoding_cardinality_threshold

What it does: Enables one-hot encoding for categorical columns with fewer than this many unique values

Binning limit: One-hot encoding bins are limited to no more than 100 anyway

To disable: Set to 0

Requires: Integer value (default: 50)

- one_hot_encoding_cardinality_threshold_default_use

What it does: Sets the default number of levels to use one-hot encoding instead of other encodings

Automatic reduction: Reduced to 10x less (minimum 2 levels) when OHE columns exceed 500

Note: Total number of bins is reduced for larger datasets independently of this setting

Requires: Integer value (default: 40)

- text_as_categorical_cardinality_threshold

What it does: Treats text columns as categorical columns if cardinality (unique values) is <= this value

To disable: Set to 0 to treat text columns only as text

Requires: Integer value (default: 1000)

- numeric_as_categorical_cardinality_threshold

What it does: Treats numeric columns as categorical columns if cardinality > this value (when num_as_cat is true)

To allow all: Set to 0 to treat all numeric columns as categorical if num_as_cat is True

Requires: Integer value (default: 2)

- numeric_as_ohe_categorical_cardinality_threshold

What it does: Treats numeric columns as categorical for possible one-hot encoding if cardinality > this value (when num_as_cat is true)

To allow all: Set to 0 to treat all numeric columns as categorical for one-hot encoding if num_as_cat is True

Requires: Integer value (default: 2)

- Features to group by, e.g. [《G1》, 《G2》, 《G3》]

What it does: Controls which columns to group by for the CVCatNumEncode Transformer

Auto behavior: Empty list means Driverless AI automatically searches all columns (randomly or by variable importance)

How it works: CVCatNumEncode Transformer groups by these categorical columns and computes numerical aggregations

Requires: List format (default: [])

- Sample from features to group by

What it does: Controls whether to sample from the specified features to group by

- Options:

Enabled: Sample from the given features

Disabled: Always group by all specified features

Requires: Boolean toggle (default: Disabled)

- Aggregation functions (non-time-series) for group by operations

What it does: Specifies which aggregation functions to use for groupby operations in CVCatNumEncode Transformer

Related settings: Works with cols_to_group_by and sample_cols_to_group_by

Available functions: mean, sd (standard deviation), min, max, count

Requires: List format (default: [《mean》, 《sd》, 《min》, 《max》, 《count》], options: [《mean》, 《sd》, 《min》, 《max》, 《count》])

- Number of folds to obtain aggregation when grouping

What it does: Controls how many folds to use for out-of-fold aggregations in CVCatNumEncode Transformer

Trade-off: More folds reduce overfitting but see less data in each fold

Requires: Integer value (default: 5)

- Features to force in, e.g. [《G1》, 《G2》, 《G3》]

What it does: Forces specific columns to be included in the experiment

How it works: Forced features are handled by the most interpretable transformer and never removed (though model may assign 0 importance)

Default transformers: OriginalTransformer for numeric, CatOriginalTransformer for categorical, etc.

Requires: List format (default: [])

- Required GINI relative improvement for Interactions

What it does: Sets the minimum GINI improvement required for InteractionTransformer to return interactions

How it works: If GINI improvement is not better than this threshold compared to original features, the interaction is not returned

Noisy data: Decrease this value if you have noisy data but still want interactions

Requires: Float value (default: 0.5)

- Number of transformed Interactions to make

What it does: Sets the number of transformed interactions to create from many generated trial interactions

Selection: Chooses the best interactions from all generated trials

Requires: Integer value (default: 5)

- Lowest allowed variable importance at interpretability 10

What it does: Sets the minimum variable importance threshold for features at interpretability level 10

Feature dropping: Features below this threshold are dropped (with possible replacement)

Scaling: This setting also determines the overall scale for lower interpretability settings

Adjustment: Lower this value if you’re okay with many weak features or see performance drops due to weak feature removal

Requires: Float value (default: 0.001)

- Whether to take minimum (True) or mean (False) of delta improvement in score when aggregating feature selection scores across multiple folds/depths

What it does: Controls how to aggregate feature selection scores across multiple folds/depths

- Options:

True (minimum): Takes the minimum score (more pessimistic, ignores optimistic scores)

False (mean): Takes the average score across folds/depths

Delta improvement: Original metric minus shuffled feature metric (or negative if minimizing)

Tree methods: Multiple depths may be fitted - only features kept for all depths are retained regardless of this setting

Data size variation: If interpretability >= fs_data_vary_for_interpretability, half data is used as another fit - only features kept for all data sizes are retained

Small data note: Disabled for small data since arbitrary slices can lead to disjoint important features

Requires: Boolean toggle (default: Enabled)

Image Settings:

- Enable Image Transformer for processing of image data

What it does: Controls whether to use pretrained deep learning models for image data processing

How it works: Converts image URIs (jpg, png, etc.) to numeric representations using ImageNet-pretrained models

GPU requirement: If no GPUs are found, must be set to 〈on〉 to enable

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Supported ImageNet pretrained architectures for Image Transformer

What it does: Specifies which ImageNet pretrained architectures to use for Image V2 Transformer

Internet requirement: Non-default architectures require internet access to download from H2O S3 buckets

Download all models: Get DAI image models and unzip in image_pretrained_models_dir

Available options: efficientnet_b0, tf_efficientnetv2, convnextv2, regnety, levit, etc

Requires: List format (default: [《levit》], options: [《efficientnet_b0》, 《tf_efficientnetv2》, 《convnextv2》, 《regnety》, 《levit》])

- Dimensionality of feature space created by Image Transformer

What it does: Sets the dimensionality of the feature space created by Image V2 Transformer

Available dimensions: 10, 25, 50, 100, 200, 300

Requires: List format (default: [100], options: [10, 25, 50, 100, 200, 300])

- Enable fine-tuning of pretrained models used for Image Transformer

What it does: Controls whether to fine-tune the pretrained models used for Image V2 Transformer

Purpose: Allows models to adapt to your specific image data

Requires: Boolean toggle (default: Disabled)

- Number of epochs for fine-tuning used for Image Transformer

What it does: Sets the number of training epochs for fine-tuning Image V2 Transformer models

Requires: Integer value (default: 2)

- List of augmentations for fine-tuning used for Image Transformer

What it does: Specifies image augmentations to apply during fine-tuning of ImageNet pretrained models

Documentation: Details about individual augmentations at Albumentations documentation

Exception: Does not apply to tf_efficientnetv2 (uses recommended transformers from Hugging Face)

Available options: Blur, CLAHE, Downscale, GaussNoise, GridDropout, HorizontalFlip, HueSaturationValue, ImageCompression, OpticalDistortion, RandomBrightnessContrast, RandomRotate90, ShiftScaleRotate, VerticalFlip

Requires: List format (default: [《HorizontalFlip》], options: [《Blur》, 《CLAHE》, 《Downscale》, 《GaussNoise》, 《GridDropout》, 《HorizontalFlip》, 《HueSaturationValue》, 《ImageCompression》, 《OpticalDistortion》, 《RandomBrightnessContrast》, 《RandomRotate90》, 《ShiftScaleRotate》, 《VerticalFlip》])

- Batch size for Image Transformer. Automatic: -1

What it does: Sets the batch size for Image V2 Transformer processing

Automatic mode: Set to -1 for automatic batch size selection

Requires: Integer value (default: -1)

- Path to pretrained Image models. It is used to load the pretrained models if there is no Internet access.

What it does: Sets the local path to pretrained Image models for offline use

When it’s used: Loads models locally when there’s no internet access

Requires: String path (default: 《./pretrained/image/》)

- Enable GPU(s) for faster transformations of Image Transformer.

What it does: Controls whether to use GPU(s) for faster image transformations with Image V2 Transformer

Performance benefit: Can lead to significant speedups when GPUs are available

Requires: Boolean toggle (default: Enabled)

NLP Settings:

- Enable word-based CNN TensorFlow transformers for NLP

What it does: Controls whether to use Word-based CNN (Convolutional Neural Network) models as transformers for NLP

How it works: Uses out-of-fold predictions from Word-based CNN Torch models when Torch is enabled

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Enable word-based BiGRU TensorFlow transformers for NLP

What it does: Controls whether to use Word-based Bi-GRU (Bidirectional Gated Recurrent Unit) models as transformers for NLP

How it works: Uses out-of-fold predictions from Word-based Bi-GRU Torch models when Torch is enabled

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Enable character-based CNN TensorFlow transformers for NLP

What it does: Controls whether to use Character-level CNN models as transformers for NLP

How it works: Uses out-of-fold predictions from Character-level CNN Torch models when Torch is enabled

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Enable PyTorch transformers for NLP

What it does: Controls whether to use pretrained PyTorch models (BERT Transformer) for NLP tasks

How it works: Fits a linear model on top of pretrained embeddings

Requirements: Internet connection required; GPU(s) highly recommended

Default behavior: 〈auto〉 means disabled; set to 〈on〉 to enable

Text column handling: Reduce string_col_as_text_min_relative_cardinality closer to 0.0 and string_col_as_text_threshold closer to 0.0 to force string columns to be treated as text despite low unique counts

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Max number of rows to use for fitting the linear model on top of the pretrained embeddings.

What it does: Limits the number of rows used for fitting the linear model on pretrained embeddings

Performance consideration: More rows can slow down the fitting process

Recommendation: Use values less than 100,000

Requires: Integer value (default: 50000)

- Max. TensorFlow epochs for NLP

What it does: Sets the maximum number of training epochs for TensorFlow models used to create NLP features

Requires: Integer value (default: 2)

- Accuracy above enable TensorFlow NLP by default for all models

What it does: Sets the minimum accuracy level to automatically enable TensorFlow NLP models for text-dominated problems

How it works: When TensorFlow NLP transformers are set to 〈auto〉, this determines when to add all enabled models at experiment start

Mutation behavior: At lower accuracy levels, TensorFlow NLP transformations are only created as mutations

Override: If TensorFlow NLP is set to 〈on〉, this parameter is ignored

Requires: Integer value (default: 5)

- Path to pretrained embeddings for TensorFlow NLP models. If empty, will train from scratch.

What it does: Specifies the path to pretrained embeddings for TensorFlow NLP models

Path options: Local file system path or S3 location (s3://)

Training from scratch: If empty, models will train from scratch

Example: Download and unzip GloVe word embeddings

Example path: /path/on/server/to/glove.6B.300d.txt

Requires: String path (default: 《》)

- S3 access key Id to use when tensorflow_nlp_pretrained_embeddings_file_path is set to an S3 location.

What it does: Sets the S3 access key ID for downloading pretrained embeddings from S3

When it’s used: Only when tensorflow_nlp_pretrained_embeddings_file_path points to an S3 location

Requires: String (default: 《》)

- S3 secret access key to use when tensorflow_nlp_pretrained_embeddings_file_path is set to an S3 location.

What it does: Sets the S3 secret access key for downloading pretrained embeddings from S3

When it’s used: Only when tensorflow_nlp_pretrained_embeddings_file_path points to an S3 location

Requires: String (default: 《》)

- For TensorFlow NLP, allow training of unfrozen pretrained embeddings (in addition to fine-tuning of the rest of the graph)

What it does: Controls whether to train all neural network weights, including pretrained embedding layer weights

Enabled: All weights are trained (embedding layer + rest of graph)

Disabled: Embedding layer is frozen, but other weights are fine-tuned

Requires: Boolean toggle (default: Disabled)

- Tokenize single characters.

What it does: Controls whether to create tokens from single alphanumeric characters in Text (Count and TF/IDF) transformers

Enabled: Creates tokens from single characters (e.g., 〈Street 3〉 → 〈Street〉 and 〈3〉)

Disabled: Requires 2+ alphanumeric characters for tokens (e.g., 〈Street 3〉 → 〈Street〉)

Requires: Boolean toggle (default: Enabled)

- Max size of the vocabulary for text transformers.

What it does: Sets the maximum vocabulary size (in tokens) for Tfidf/Count/Comatrix text transformers (not CNN/BERT)

Multiple values: First value used for initial models, remaining values used during parameter tuning and feature evolution

Performance recommendation: Values smaller than 10,000 for speed

Reasonable choices: 100, 1000, 5000, 10000, 50000, 100000, 500000

Computational note: If force_enable_text_comatrix_preprocess is True, only selective top vocabularies are used due to complexity

Requires: List format (default: [1000, 5000])

Time Series Settings:

- Time-series lag-based recipe

What it does: Enables time series lag-based recipe with lag transformers

When disabled: Uses the same train-test gap and periods but no lag transformers are enabled

Limited transformations: Without lag transformers, feature transformations are quite limited

Alternative approach: Consider setting enable_time_unaware_transformers to true to treat the problem more like an IID (Independent and Identically Distributed) type problem

Requires: Boolean toggle (default: Enabled)

- Generate holiday features

What it does: Automatically generates is-holiday features from date columns

Purpose: Creates binary features indicating whether each date is a holiday

Requires: Boolean toggle (default: Enabled)

- Country code(s) for holiday features

What it does: Specifies which countries〉 holidays to include in holiday features

Available countries: UnitedStates, UnitedKingdom, EuropeanCentralBank, Germany, Mexico, Japan

Requires: List format (default: [《UnitedStates》, 《UnitedKingdom》, 《EuropeanCentralBank》, 《Germany》, 《Mexico》, 《Japan》])

- Whether to sample lag sizes

What it does: Controls whether to sample from a set of possible lag sizes for each lag-based transformer

How it works: Samples from lag sizes (e.g., lags=[1, 4, 8]) up to max_sampled_lag_sizes lags

Benefits: Reduces overall model complexity and size, especially when many columns are unavailable for prediction

Requires: Boolean toggle (default: Disabled)

- Number of sampled lag sizes. -1 for auto.

What it does: Sets the maximum number of lag sizes to sample when sample_lag_sizes is enabled

How it works: Samples from lag sizes (e.g., lags=[1, 4, 8]) up to this maximum for each lag-based transformer

Auto mode (-1): Uses the same value as feature interaction depth controlled by max_feature_interaction_depth

Benefits: Helps reduce overall model complexity and size

Requires: Integer value (default: -1)

- Time-series lags override, e.g. [7, 14, 21]

What it does: Overrides the default lag values to be used in time series analysis

- Format options:

[7, 14, 21] - Use this exact list

21 - Produce lags from 1 to 21

21:3 - Produce lags from 1 to 21 in steps of 3

5-21 - Produce lags from 5 to 21

5-21:3 - Produce lags from 5 to 21 in steps of 3

Requires: List format (default: [])

- Lags override for features that are not known ahead of time

What it does: Overrides lag values specifically for features that are not known ahead of time

Format options: Same as Time-series lags override (exact list, range, or step patterns)

Use case: For features where future values are not available at prediction time

Requires: List format (default: [])

- Lags override for features that are known ahead of time

What it does: Overrides lag values specifically for features that are known ahead of time

Format options: Same as Time-series lags override (exact list, range, or step patterns)

Use case: For features where future values are available at prediction time

Requires: List format (default: [])

- Smallest considered lag size (-1 = auto)

What it does: Sets the smallest lag size to consider in time series analysis

Auto mode (-1): Automatically determines the smallest lag size

Requires: Integer value (default: -1)

- Enable feature engineering from time column

What it does: Controls whether to enable feature engineering based on the selected time column

Example: Creates features like Date~weekday from the time column

Requires: Boolean toggle (default: Enabled)

- Allow integer time column as numeric feature

What it does: Controls whether to use integer time column as a numeric feature

Warning: Using numeric timestamps as input features can cause models to memorize actual timestamps instead of learning generalizable patterns

Recommendation: Keep disabled for time series recipes to avoid overfitting to specific time values

Requires: Boolean toggle (default: Disabled)

- Allowed date and date-time transformations

What it does: Specifies which date and date-time transformations are allowed

- Available transformations:

Date: year, quarter, month, week, weekday, day, dayofyear, num

Time: hour, minute, second

Feature naming: Features appear as 《get_》 + transformation name in Driverless AI

Overfitting warning: 〈num〉 is a direct numeric value representing floating point time, which can cause overfitting on IID problems (disabled by default)

Requires: List format (default: [《year》, 《quarter》, 《month》, 《week》, 《weekday》, 《day》, 《dayofyear》, 《hour》, 《minute》, 《second》])

- Auto filtering of date and date-time transformations

What it does: Automatically filters out date transformations that would create unseen values in the future

Purpose: Prevents overfitting by avoiding transformations that don’t generalize to future data

Requires: Boolean toggle (default: Enabled)

- Consider time groups columns as standalone features

What it does: Controls whether to treat time groups columns (tgc) as standalone features

Separation: 〈time_column〉 is treated separately via 〈Enable feature engineering from time column〉

Target encoding: tgc_allow_target_encoding independently controls whether time column groups are target encoded

Feature type control: Use allowed_coltypes_for_tgc_as_features for per-feature-type control

Requires: Boolean toggle (default: Enabled)

- Which tgc feature types to consider as standalone features

What it does: Specifies which time groups columns (tgc) feature types to consider as standalone features

When it applies: Only when 《Consider time groups columns as standalone features》 is enabled

Available types: [《numeric》, 《categorical》, 《ohe_categorical》, 《datetime》, 《date》, 《text》]

Separation: 〈time_column〉 is treated separately via 〈Enable feature engineering from time column〉

Lag-based override: If lag-based time series recipe is disabled, all tgc are allowed as features

Requires: List format (default: [《numeric》, 《categorical》, 《ohe_categorical》, 《datetime》, 《date》, 《text》])

- Enable time unaware transformers

What it does: Controls whether to enable transformers (clustering, truncated SVD) that are normally disabled for time series

Overfitting risk: These transformers can leak information across time within each fold, potentially causing overfitting

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Always group by all time groups columns for creating lag features

What it does: Controls whether to use all time groups columns when creating lag features

Alternative: When disabled, samples from time groups columns instead of using all

Requires: Boolean toggle (default: Enabled)

- Target encoding of time groups

What it does: Controls whether to allow target encoding of time groups

When useful: Helpful when there are many groups

Independence: allow_tgc_as_features independently controls if tgc are treated as normal features

- Options:

〈auto〉: Choose CV by default

〈CV〉: Enable out-of-fold and CV-in-CV encoding

〈simple〉: Simple memorized targets per group

〈off〉: Disable target encoding

Requirement: Only relevant for time series experiments with at least one time column group apart from the time column

Requires: String selection (default: 《auto》, options: 《auto》, 《CV》, 《simple》, 《off》)

- Auto-Tune time column groups as features and target encoding

What it does: Automatically tries both feature treatment and target encoding approaches for time column groups during tuning

When it applies: If allow_tgc_as_features is true or tgc_allow_target_encoding is true

Benefits: Safer than forcing one approach - lets the system determine which works better

Requires: Boolean toggle (default: Enabled)

- Dropout mode for lag features

What it does: Controls dropout mode for lag features to achieve equal missing value ratios between train and validation/test

- Available modes:

〈off〉: No dropout

〈independent〉: Simple feature-wise dropout

〈dependent〉: Takes lag-size dependencies per sample/row into account

Requires: String selection (default: 《dependent》, options: 《off》, 《dependent》, 《independent》)

- Probability to create non-target lag features (-1.0 = auto)

What it does: Sets the normalized probability of choosing to lag non-target features relative to target features

Auto mode (-1.0): Automatically determines the probability

Requires: Float value (default: -1.0)

- Probability for new time-series transformers to use default lags (-1.0 = auto)

What it does: Sets the probability for new Lags/EWMA transformers to use default lag values

Default lags: Determined by frequency/gap/horizon, independent of data

Auto mode (-1.0): Automatically determines the probability

Requires: Float value (default: -1.0)

- Probability of exploring interaction-based lag transformers (-1.0 = auto)

What it does: Sets the unnormalized probability of choosing lag time-series transformers based on interactions

Auto mode (-1.0): Automatically determines the probability

Requires: Float value (default: -1.0)

- Probability of exploring aggregation-based lag transformers (-1.0 = auto)

What it does: Sets the unnormalized probability of choosing lag time-series transformers based on aggregations

Auto mode (-1.0): Automatically determines the probability

Requires: Float value (default: -1.0)

- Time series centering or detrending transformation

What it does: Applies centering or detrending transformation to remove trends from the target signal

How it works: Fits trend model parameters, removes trend from target signal, fits pipeline on residuals

Prediction: Adds the trend back to predictions

Compatibility: Can be cascaded with 〈Time series lag-based target transformation〉, but mutually exclusive with regular target transformations

Robust variants: Use RANSAC for higher tolerance to outliers

SEIR model: The Epidemic transformer uses the SEIR model (see SEIR model on Wikipedia)

Requires: String selection (default: 《none》, options: 《none》, 《centering (fast)》, 《centering (robust)》, 《linear (fast)》, 《linear (robust)》, 《logistic》, 《epidemic》)

- Custom bounds for SEIRD epidemic model parameters

What it does: Sets custom bounds for the SEIRD epidemic model parameters used for target de-trending per time series group

Target requirement: The target column must correspond to I(t) - infected cases as a function of time

Process: For each training split and time series group, the SEIRD model is fitted to the target signal by optimizing free parameters

Residuals: SEIRD model value is subtracted from training response, residuals passed to feature engineering pipeline

Prediction: SEIRD model value is added back to residual predictions from the pipeline

Important: Careful selection of bounds for parameters N, beta, gamma, delta, alpha, rho, lockdown, beta_decay, beta_decay_rate is crucial for good results

Requires: Dictionary format (default: {})

- Which SEIRD model component the target column corresponds to: I: Infected, R: Recovered, D: Deceased.

What it does: Specifies which SEIRD model component the target column represents

- Available components:

〈I〉: Infected cases

〈R〉: Recovered cases

〈D〉: Deceased cases

Requires: String selection (default: 《I》, options: 《I》, 《R》, 《D》)

- Time series lag-based target transformation

What it does: Applies lag-based target transformation for time series

Compatibility: Can be cascaded with 〈Time series centering or detrending transformation〉, but mutually exclusive with regular target transformations

- Available options:

〈none〉: No transformation

〈difference〉: Difference transformation

〈ratio〉: Ratio transformation

Requires: String selection (default: 《none》, options: 《none》, 《difference》, 《ratio》)

- Lag size used for time series target transformation

What it does: Sets the lag size used for time series target transformation (see 〈Time series lag-based target transformation〉)

Auto mode (-1): Uses smallest valid value = prediction periods + gap (automatically adjusted by Driverless AI if too small)

Requires: Integer value (default: -1)

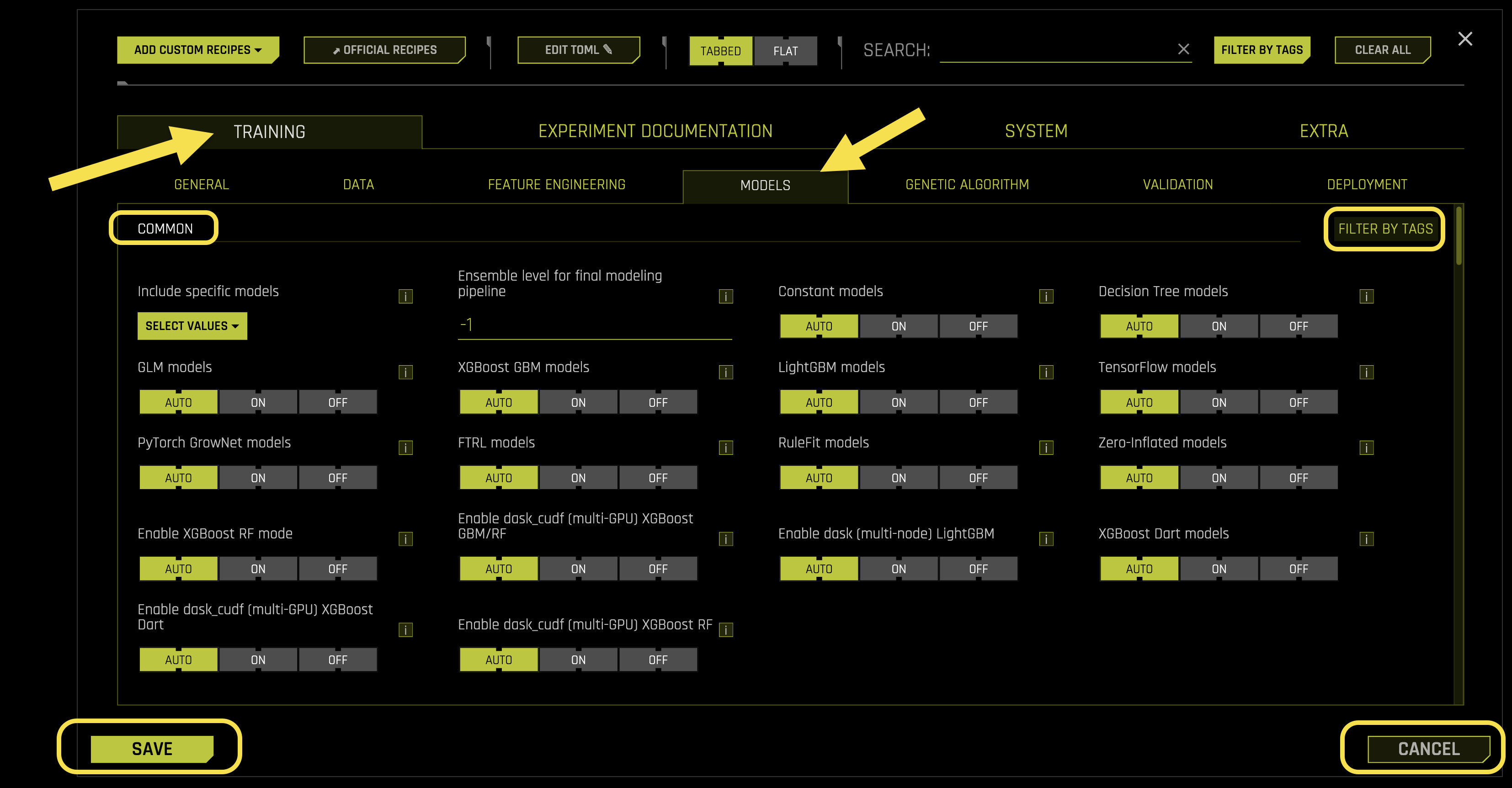

Models

The Models sub-tab configures machine learning algorithms, ensemble settings, and model-specific parameters.

Common Settings:

- Include specific models

What it does: Controls which specific machine learning models to include in the experiment

Leakage detection: By default, LightGBM Model is used for leakage detection when possible

Fold column behavior: If a fold column is used, leakage checking works without using the fold column

Model selection: When models are turned off in Model Expert Settings, only models selected with included_models are used

Time series note: This option is always disabled for time-series experiments

Requires: List format (default: [])

- Ensemble level for final modeling pipeline

What it does: Controls the ensemble strategy for the final modeling pipeline

Auto mode (-1): Automatically determines based on ensemble_accuracy_switch, accuracy, data size, etc.

- Ensemble levels:

0: No ensemble - only final single model with validated iteration/tree count

1: 1 model with multiple ensemble folds (cross-validation)

≥2: Multiple models with multiple ensemble folds (cross-validation)

Requires: Integer value (default: -1)

- Constant models

What it does: Controls whether to enable constant (baseline) models

Purpose: Provides a simple baseline for comparison

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Decision Tree models

What it does: Controls whether to enable Decision Tree models

Auto behavior: Disabled unless only non-constant model is chosen

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- GLM models

What it does: Controls whether to enable GLM (Generalized Linear Model) models

Purpose: Linear models with various link functions for different data types

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- XGBoost GBM models

What it does: Controls whether to enable XGBoost GBM (Gradient Boosting Machine) models

Purpose: High-performance gradient boosting for structured data

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- LightGBM models

What it does: Controls whether to enable LightGBM (Light Gradient Boosting Machine) models

Purpose: Fast gradient boosting with low memory usage

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- TensorFlow models

What it does: Controls whether to enable TensorFlow models

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- PyTorch GrowNet models

What it does: Controls whether to enable PyTorch-based GrowNet models

Purpose: Deep neural network models using PyTorch framework

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- FTRL models

What it does: Controls whether to enable FTRL (Follow The Regularized Leader) models

Purpose: Online learning algorithm for large-scale machine learning

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- RuleFit models

What it does: Controls whether to enable RuleFit models

Status: Beta version with no MOJO support

Purpose: Combines decision trees and linear models for interpretable rules

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Zero-Inflated models

What it does: Controls whether to enable Zero-Inflated models

Purpose: Specialized models for count data with excess zeros

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Enable XGBoost RF mode

What it does: Controls whether to enable XGBoost Random Forest mode

Purpose: Uses Random Forest algorithm within XGBoost framework

Requires: String selection (default: 《auto》, options: 《auto》, 《on》, 《off》)

- Enable dask_cudf (multi-GPU) XGBoost GBM/RF

What it does: Controls whether to enable multi-GPU XGBoost GBM/RF using dask_cudf

Requirements: Disabled by default, must be explicitly enabled