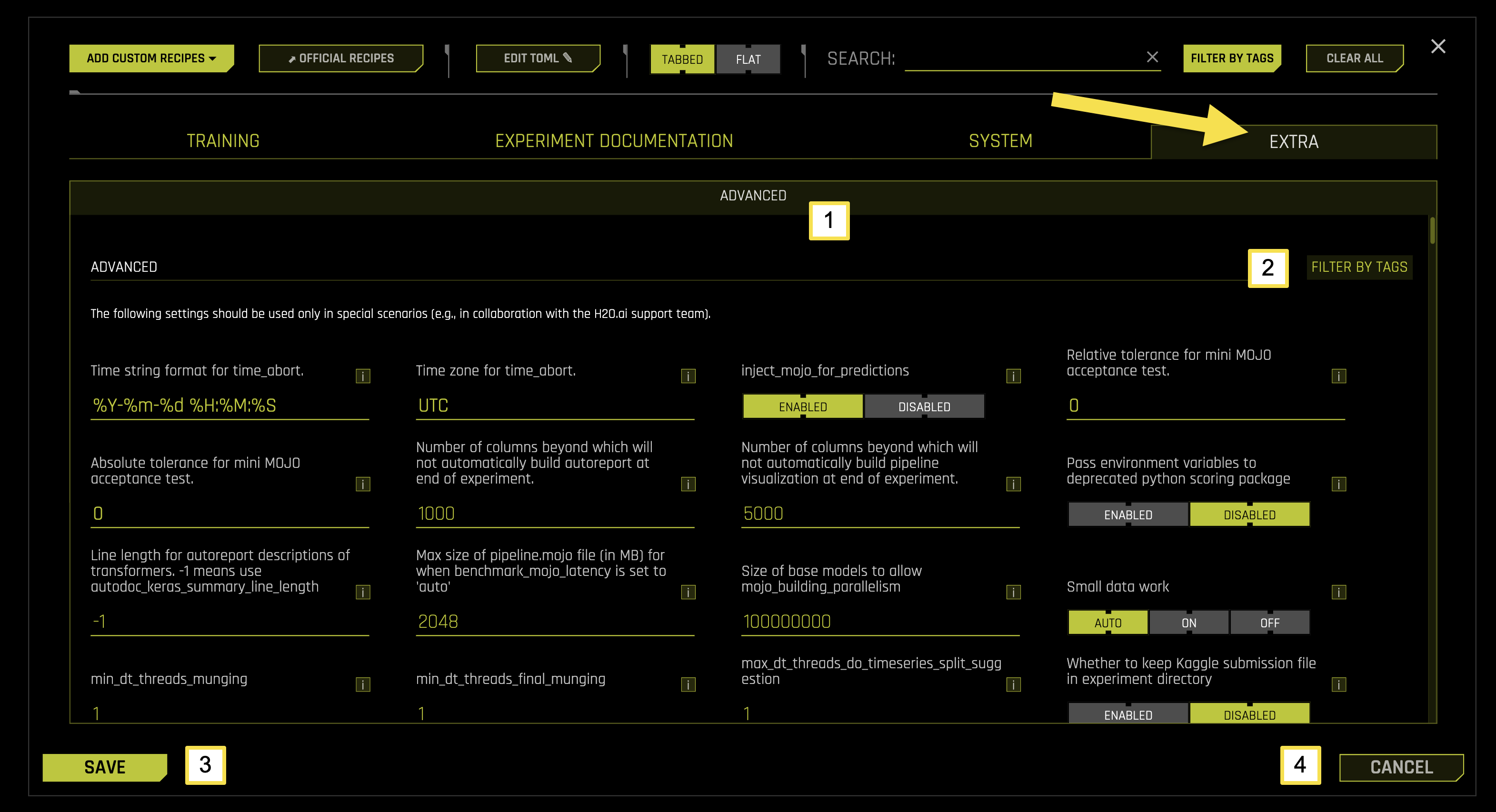

Extra Settings

The EXTRA tab in EXPERT SETTINGS provides advanced configuration options for your experimental environment. These settings are designed for experienced users and specific use cases, often used in collaboration with H2O.ai support teams.

Extra Tab Sub-Categories

The EXTRA tab contains one main sub-category with specialized settings for machine learning experiments.

To access these settings, navigate to EXPERT SETTINGS > EXTRA tab from the Experiment Setup page.

The following table describes the available options on the EXTRA page:

Sub-Category |

Description |

|---|---|

[1] Advanced |

Configure specialized settings for advanced scenarios and support collaboration |

[2] Filter by Tags |

Filter and organize extra settings using custom tags and labels |

[3] Save |

Save all extra configuration changes |

[4] Cancel |

Cancel changes and revert to previous configuration settings |

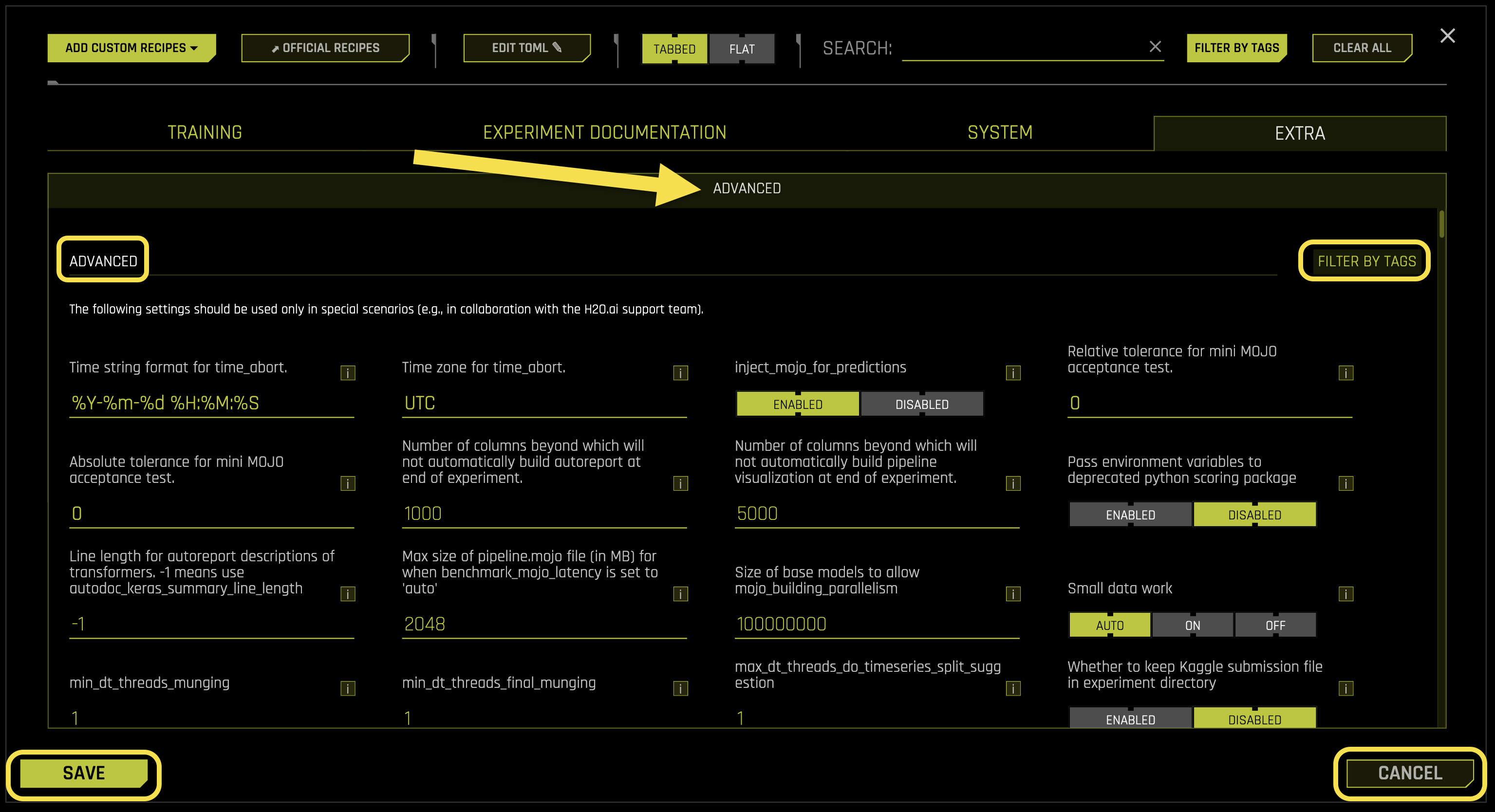

Advanced

The Advanced sub-tab contains specialized configuration options for advanced users and specific use cases. These settings provide fine-tuned control over system behavior and are typically used in collaboration with H2O.ai support teams.

Advanced Settings:

- Time string format for time_abort

What it does: Defines the date and time format string for experiment abortion scheduling

Purpose: Enables scheduling automatic experiment termination at specific times

Format: Python strftime format string for date/time parsing

Default format: %Y-%m-%d %H:%M:%S (YYYY-MM-DD HH:MM:SS)

Example: “2024-12-31 23:59:59” for New Year’s Eve at 11:59 PM

Requires: String value (default: “%Y-%m-%d %H:%M:%S”)

- Time zone for time_abort

What it does: Specifies the timezone for time_abort scheduling

Purpose: Ensures accurate time-based experiment termination across different timezones

Format: Standard timezone identifier (e.g., UTC, EST, PST)

Default timezone: UTC (Coordinated Universal Time)

Impact: Changes take effect immediately for new experiments

Use case: Set this when running experiments across different geographic locations

Requires: String value (default: “UTC”)

- inject_mojo_for_predictions

What it does: Controls whether to use MOJO (Model Object, Optimized) for prediction operations

Purpose: Enables optimized prediction pipelines using MOJO artifacts

Performance: MOJO provides faster prediction inference than standard methods

Impact: Significantly improves prediction speed for production deployments

Use case: Enable for production systems requiring fast inference

- Available options:

Enabled: Uses MOJO for faster predictions

Disabled: Uses standard prediction methods

Requires: Boolean toggle (default: Enabled)

- Relative tolerance for mini MOJO acceptance test

What it does: Sets the relative tolerance threshold for MOJO acceptance testing

Purpose: Validates MOJO accuracy compared to original model predictions

Tolerance: Relative error threshold for acceptance (0 = exact match)

Use case: Ensures MOJO predictions are within acceptable accuracy bounds

Note: Relative tolerance compares percentage difference between predictions

Requires: Float value (default: 0)

- Absolute tolerance for mini MOJO acceptance test

What it does: Sets the absolute tolerance threshold for MOJO acceptance testing

Purpose: Validates MOJO accuracy using absolute error thresholds

Tolerance: Absolute error threshold for acceptance (0 = exact match)

Use case: Ensures MOJO predictions are within acceptable accuracy bounds

Note: Absolute tolerance compares direct difference between prediction values

Requires: Float value (default: 0)

- Number of columns beyond which will not automatically build autoreport at end of experiment

What it does: Sets the maximum column threshold for automatic autoreport generation

Purpose: Prevents automatic autoreport generation for datasets with too many columns

Performance: Large column counts can significantly slow down autoreport generation

Threshold: Experiments with more columns will skip automatic autoreport

Note: Manual autoreport generation remains available regardless of column count

Requires: Integer value (default: 1000)

- Number of columns beyond which will not automatically build pipeline visualization at end of experiment

What it does: Sets the maximum column threshold for automatic pipeline visualization

Purpose: Prevents automatic pipeline visualization for datasets with too many columns

Performance: Large column counts can significantly slow down visualization generation

Threshold: Experiments with more columns will skip automatic pipeline visualization

Note: Manual pipeline visualization remains available regardless of column count

Requires: Integer value (default: 5000)

- Pass environment variables to deprecated python scoring package

What it does: Controls whether to pass environment variables to legacy Python scoring packages

Purpose: Maintains compatibility with deprecated scoring functionality

Legacy support: Ensures backward compatibility with older scoring implementations

- Available options:

Enabled: Passes environment variables to legacy scoring packages

Disabled: Uses standard environment variable handling

Requires: Boolean toggle (default: Enabled)

- Line length for autoreport descriptions of transformers. -1 means use autodoc_keras_summary_line_length

What it does: Sets the maximum line length for transformer descriptions in autoreports

Purpose: Controls formatting and readability of transformer documentation

Auto mode (-1): Uses the autodoc_keras_summary_line_length setting

Custom value: Sets specific line length for transformer descriptions

Note: Longer lines improve readability but may affect layout on narrow displays

Requires: Integer value (default: -1)

- Max size of pipeline.mojo file (in MB) for when benchmark_mojo_latency is set to ‘auto’

What it does: Sets the maximum allowed size for pipeline.mojo files in automatic benchmarking

Purpose: Prevents latency benchmarking of excessively large MOJO files

Performance: Large MOJO files can cause benchmarking timeouts and resource issues

Size limit: MOJO files larger than this threshold skip automatic latency benchmarking

Note: Manual latency benchmarking is still available for large files

Requires: Integer value (default: 2048)

- Size of base models to allow mojo_building_parallelism

What it does: Sets the minimum model size threshold for enabling parallel MOJO building

Purpose: Optimizes MOJO building performance for appropriately sized models

Performance: Parallel building is only beneficial for models above this size threshold

Threshold: Models smaller than this size use sequential MOJO building

Note: Parallel building overhead is not justified for small models

Requires: Integer value (default: 100000000)

- Small data work

What it does: Controls optimization settings for small datasets

Purpose: Applies specialized optimizations for datasets with limited data

Optimization: Uses faster algorithms and reduced complexity for small datasets

Impact: Improves training speed for datasets with fewer than 10,000 rows

Use case: Enable when working with small datasets to avoid overfitting

- Available options:

auto: Automatically determines small data optimizations

on: Forces small data optimizations

off: Disables small data optimizations

Requires: String selection (default: auto)

- min_dt_threads_munging

What it does: Sets the minimum number of datatable threads for data munging operations

Purpose: Ensures minimum threading performance for data preprocessing

Performance: Guarantees minimum parallel processing for munging operations

Threading: Sets floor value for datatable threading during munging

Note: datatable is a high-performance data manipulation library

Requires: Integer value (default: 1)

- min_dt_threads_final_munging

What it does: Sets the minimum number of datatable threads for final munging operations

Purpose: Ensures minimum threading performance for final data preprocessing

Performance: Guarantees minimum parallel processing for final munging operations

Threading: Sets floor value for datatable threading during final munging

Note: datatable is a high-performance data manipulation library

Requires: Integer value (default: 1)

- max_dt_threads_do_timeseries_split_suggestion

What it does: Sets the maximum number of datatable threads for time series split suggestion operations

Purpose: Controls threading for time series analysis and split recommendation

Performance: Limits threading to prevent resource contention during time series operations

Threading: Sets ceiling value for datatable threading during time series split suggestions

Note: datatable is a high-performance data manipulation library

Requires: Integer value (default: 1)

- Whether to keep Kaggle submission file in experiment directory

What it does: Controls whether to retain Kaggle submission files in the experiment output directory

Purpose: Manages storage of Kaggle competition submission artifacts

Storage: Controls disk space usage for Kaggle-related files

- Available options:

Enabled: Keeps Kaggle submission files in experiment directory

Disabled: Removes Kaggle submission files after processing

Requires: Boolean toggle (default: Enabled)

- Custom Kaggle competitions to make automatic test set submissions for

What it does: Specifies custom Kaggle competitions for automatic test set submissions

Purpose: Enables automated submission to specific Kaggle competitions

Format: List of competition identifiers or names

Use case: Streamlines Kaggle competition workflow for specific contests

Requires: List of strings (default: [])

- ping_period

What it does: Sets the interval in seconds for system status ping during experiments

Purpose: Controls system monitoring frequency during experiment execution

Monitoring: Enables periodic system health checks and status updates

Performance: More frequent pings provide better monitoring but use more resources

Requires: Integer value (default: 60)

- Whether to enable ping of system status during DAI experiments

What it does: Controls whether to enable system status monitoring during experiments

Purpose: Provides real-time system health monitoring during experiment execution

Monitoring: Tracks system performance, resource usage, and experiment progress

- Available options:

Enabled: Enables system status monitoring

Disabled: Disables system status monitoring

Requires: Boolean toggle (default: Enabled)

- stall_disk_limit_gb

What it does: Sets the disk space limit in GB for stalling operations

Purpose: Prevents disk space issues by limiting stalling operation disk usage

Storage: Controls disk space allocation for temporary stalling operations

Performance: Helps prevent system crashes due to disk space exhaustion

Requires: Float value (default: 1)

- min_rows_per_class

What it does: Sets the minimum number of rows required per class for classification problems

Purpose: Ensures sufficient data for each class in classification tasks

Quality: Prevents training on classes with insufficient data

Threshold: Classes with fewer rows may be excluded or handled specially

Requires: Integer value (default: 5)

- min_rows_per_split

What it does: Sets the minimum number of rows required per data split

Purpose: Ensures sufficient data for each split in cross-validation or train/test splits

Quality: Prevents splits with insufficient data that could lead to poor model performance

Threshold: Splits with fewer rows may be adjusted or excluded

Requires: Integer value (default: 5)

- tf_nan_impute_value

What it does: Sets the value used to impute NaN (Not a Number) values in TensorFlow models

Purpose: Handles missing values in TensorFlow model inputs

Imputation: Replaces NaN values with the specified value during model processing

Default value: -5 (negative value to distinguish from real data)

Requires: Float value (default: -5)

- statistical_threshold_data_size_small

What it does: Sets the threshold for considering a dataset as small for statistical operations

Purpose: Determines when to apply small dataset optimizations for statistical calculations

Performance: Smaller datasets may use different algorithms or parameters

Threshold: Datasets below this size are considered small for statistical operations

Requires: Integer value (default: 100000)

- statistical_threshold_data_size_large

What it does: Sets the threshold for considering a dataset as large for statistical operations

Purpose: Determines when to apply large dataset optimizations for statistical calculations

Performance: Larger datasets may use different algorithms or sampling strategies

Threshold: Datasets above this size are considered large for statistical operations

Requires: Integer value (default: 500000000)

- aux_threshold_data_size_large

What it does: Sets the threshold for auxiliary operations on large datasets

Purpose: Determines when to apply large dataset optimizations for auxiliary operations

Performance: Controls memory and processing optimizations for auxiliary tasks

Threshold: Datasets above this size trigger large dataset auxiliary optimizations

Requires: Integer value (default: 10000000)

- set_method_sampling_row_limit

What it does: Sets the maximum number of rows for method sampling operations

Purpose: Limits the number of rows used in sampling-based method evaluations

Performance: Prevents excessive memory usage in sampling operations

Sampling: Controls the scope of sampling for method performance evaluation

Requires: Integer value (default: 5000000)

- performance_threshold_data_size_small

What it does: Sets the threshold for considering a dataset as small for performance optimizations

Purpose: Determines when to apply small dataset performance optimizations

Performance: Smaller datasets may use different performance tuning strategies

Threshold: Datasets below this size are considered small for performance operations

Requires: Integer value (default: 100000)

- performance_threshold_data_size_large

What it does: Sets the threshold for considering a dataset as large for performance optimizations

Purpose: Determines when to apply large dataset performance optimizations

Performance: Larger datasets may use different performance tuning strategies

Threshold: Datasets above this size are considered large for performance operations

Requires: Integer value (default: 100000000)

- gpu_default_threshold_data_size_large

What it does: Sets the threshold for default GPU usage on large datasets

Purpose: Determines when to automatically enable GPU acceleration for large datasets

Performance: GPU acceleration is most beneficial for datasets above this threshold

Threshold: Datasets above this size automatically trigger GPU usage

Requires: Integer value (default: 1000000)

- max_relative_cols_mismatch_allowed

What it does: Sets the maximum allowed relative column mismatch between datasets

Purpose: Controls tolerance for column differences between training and validation data

Validation: Ensures data consistency across different dataset splits

Tolerance: Maximum percentage of column mismatch allowed (0.5 = 50%)

Requires: Float value (default: 0.5)

- max_rows_final_blender

What it does: Sets the maximum number of rows for final model blending operations

Purpose: Limits the number of rows used in final ensemble blending

Performance: Prevents excessive memory usage in final blending operations

Blending: Controls the scope of final model ensemble blending

Requires: Integer value (default: 1000000)

- min_rows_final_blender

What it does: Sets the minimum number of rows required for final model blending operations

Purpose: Ensures sufficient data for reliable final model blending

Quality: Prevents blending on datasets too small for reliable ensemble creation

Threshold: Datasets below this size may skip final blending

Requires: Integer value (default: 10000)

- max_rows_final_train_score

What it does: Sets the maximum number of rows for final training score calculations

Purpose: Limits the number of rows used in final training score evaluation

Performance: Prevents excessive computation time for training score calculation

Evaluation: Controls the scope of final training score assessment

Requires: Integer value (default: 5000000)

- max_rows_final_roccmconf

What it does: Sets the maximum number of rows for final ROC confusion matrix calculations

Purpose: Limits the number of rows used in ROC and confusion matrix evaluation

Performance: Prevents excessive computation time for ROC calculations

Evaluation: Controls the scope of final ROC and confusion matrix assessment

Requires: Integer value (default: 1000000)

- max_rows_final_holdout_score

What it does: Sets the maximum number of rows for final holdout score calculations

Purpose: Limits the number of rows used in final holdout score evaluation

Performance: Prevents excessive computation time for holdout score calculation

Evaluation: Controls the scope of final holdout score assessment

Requires: Integer value (default: 5000000)

- max_rows_final_holdout_bootstrap_score

What it does: Sets the maximum number of rows for final holdout bootstrap score calculations

Purpose: Limits the number of rows used in final holdout bootstrap score evaluation

Performance: Prevents excessive computation time for bootstrap score calculation

Evaluation: Controls the scope of final holdout bootstrap score assessment

Requires: Integer value (default: 1000000)

- Max. rows for leakage detection if wide rules used on wide data

What it does: Sets the maximum number of rows for leakage detection when using wide rules on wide datasets

Purpose: Limits the scope of leakage detection to prevent excessive computation time

Performance: Wide rules on wide data can be computationally expensive

Threshold: Datasets exceeding this limit may use sampling for leakage detection

Requires: Integer value (default: 100000)

- Num. simultaneous predictions for feature selection (0 = auto)

What it does: Sets the number of simultaneous predictions during feature selection operations

Purpose: Controls parallel processing for feature selection prediction tasks

Auto mode (0): Uses automatic parallel processing based on system resources

Custom value: Limits simultaneous predictions to specified number

Performance: More simultaneous predictions can speed up feature selection

Requires: Integer value (default: 0)

- Num. simultaneous fits for shift and leak checks if using LightGBM on CPU (0 = auto)

What it does: Sets the number of simultaneous LightGBM fits for shift and leakage checks on CPU

Purpose: Controls parallel processing for shift and leakage detection using LightGBM

Auto mode (0): Uses automatic parallel processing based on CPU resources

Custom value: Limits simultaneous fits to specified number

Performance: More simultaneous fits can speed up shift and leakage detection

Requires: Integer value (default: 0)

- max_orig_nonnumeric_cols_selected_default

What it does: Sets the maximum number of original non-numeric columns selected by default

Purpose: Controls the default selection of non-numeric columns for feature engineering

Selection: Limits the number of non-numeric columns automatically included

Performance: Helps manage computational complexity for non-numeric features

Requires: Integer value (default: 300)

- max_orig_cols_selected_simple_factor

What it does: Sets the factor for maximum original columns selected in simple scenarios

Purpose: Controls column selection scaling factor for simple feature engineering

Scaling: Multiplies base column selection by this factor for simple cases

Performance: Helps balance feature richness with computational efficiency

Requires: Integer value (default: 2)

- fs_orig_cols_selected_simple_factor

What it does: Sets the factor for original columns selected in feature selection simple scenarios

Purpose: Controls column selection scaling factor for simple feature selection

Scaling: Multiplies base column selection by this factor for simple feature selection

Performance: Helps balance feature selection scope with computational efficiency

Requires: Integer value (default: 2)

- Allow supported models to do feature selection by permutation importance within model itself

What it does: Enables models to perform feature selection using permutation importance internally

Purpose: Allows models to automatically select features based on their own importance calculations

Efficiency: Reduces need for separate feature selection steps

- Available options:

Enabled: Models perform internal feature selection

Disabled: Uses external feature selection methods only

Requires: Boolean toggle (default: Enabled)

- Whether to use native categorical handling (CPU only) for LightGBM when doing feature selection by permutation

What it does: Controls whether to use LightGBM’s native categorical handling for permutation-based feature selection

Purpose: Optimizes categorical feature handling during permutation importance calculations

Performance: Native handling can be faster but is CPU-only

- Available options:

Enabled: Uses LightGBM native categorical handling

Disabled: Uses standard categorical handling

Requires: Boolean toggle (default: Enabled)

- Maximum number of original columns up to which will compute standard deviation of original feature importance. Can be expensive if many features.

What it does: Sets the maximum number of original columns for computing feature importance standard deviation

Purpose: Limits computation of feature importance statistics to prevent performance issues

Performance: Computing standard deviation can be expensive with many features

Threshold: Only computes standard deviation for datasets with columns below this limit

Requires: Integer value (default: 1000)

- num_folds

What it does: Sets the number of cross-validation folds for model evaluation

Purpose: Controls the number of folds used in cross-validation for model assessment

Validation: More folds provide more robust evaluation but increase computation time

Balance: Typical values range from 3-10 folds depending on dataset size

Requires: Integer value (default: 3)

- full_cv_accuracy_switch

What it does: Sets the accuracy threshold for switching to full cross-validation

Purpose: Determines when to use full cross-validation based on model accuracy

Optimization: Uses faster validation methods until accuracy reaches this threshold

Threshold: Models with accuracy above this value use full cross-validation

Requires: Integer value (default: 9)

- ensemble_accuracy_switch

What it does: Sets the accuracy threshold for switching to ensemble methods

Purpose: Determines when to use ensemble methods based on individual model accuracy

Optimization: Uses single models until accuracy reaches this threshold

Threshold: Models with accuracy above this value may trigger ensemble creation

Requires: Integer value (default: 5)

- num_ensemble_folds

What it does: Sets the number of folds used for ensemble model evaluation

Purpose: Controls cross-validation folds specifically for ensemble model assessment

Ensemble: Determines the robustness of ensemble model evaluation

Performance: More folds provide better ensemble evaluation but increase computation

Requires: Integer value (default: 4)

- fold_reps

What it does: Sets the number of repetitions for each fold in cross-validation

Purpose: Controls fold repetition for more robust cross-validation results

Robustness: Multiple repetitions help reduce variance in cross-validation scores

Performance: More repetitions increase computation time but improve reliability

Requires: Integer value (default: 1)

- max_num_classes_hard_limit

What it does: Sets the hard limit for the maximum number of classes in classification problems

Purpose: Prevents excessive computation for classification problems with too many classes

Performance: Large numbers of classes can significantly slow down training and prediction

Limit: Classification problems with more classes may be handled differently

Requires: Integer value (default: 10000)

- min_roc_sample_size

What it does: Sets the minimum sample size for ROC (Receiver Operating Characteristic) calculations

Purpose: Ensures sufficient data for reliable ROC curve computation

Quality: Prevents ROC calculations on datasets too small for reliable results

Threshold: Datasets below this size may skip ROC calculations or use simplified methods

Requires: Integer value (default: 1)

- enable_strict_confict_key_check_for_brain

What it does: Enables strict conflict key checking for the Feature Brain system

Purpose: Provides more rigorous validation of configuration keys in Feature Brain

Validation: Helps prevent configuration conflicts and inconsistencies

- Available options:

Enabled: Uses strict conflict key checking

Disabled: Uses standard key checking

Requires: Boolean toggle (default: Enabled)

- For feature brain or restart/refit, whether to allow brain ingest to use different feature engineering layer count

What it does: Controls whether Feature Brain can use different feature engineering layer counts during ingest

Purpose: Provides flexibility in feature engineering layer configuration for brain operations

Flexibility: Allows adaptation to different feature engineering requirements

- Available options:

Enabled: Allows different layer counts

Disabled: Requires consistent layer counts

Requires: Boolean toggle (default: Disabled)

- brain_maximum_diff_score

What it does: Sets the maximum allowed score difference for Feature Brain operations

Purpose: Controls the tolerance for score differences in brain-based feature selection

Tolerance: Allows small score differences while maintaining brain efficiency

Threshold: Score differences above this value may trigger brain adjustments

Requires: Float value (default: 0.1)

- brain_max_size_GB

What it does: Sets the maximum size in GB for Feature Brain memory usage

Purpose: Controls memory allocation for Feature Brain operations

Memory: Prevents excessive memory usage by Feature Brain system

Limit: Brain operations exceeding this size may use memory optimization strategies

Requires: Float value (default: 20)

- early_stopping

What it does: Controls whether to enable early stopping for model training

Purpose: Prevents overfitting by stopping training when validation performance stops improving

Optimization: Reduces training time and improves generalization

- Available options:

Enabled: Uses early stopping during training

Disabled: Trains for full specified duration

Requires: Boolean toggle (default: Enabled)

- early_stopping_per_individual

What it does: Controls whether to enable early stopping for individual models in genetic algorithm

Purpose: Applies early stopping to individual models during genetic algorithm evolution

Optimization: Improves efficiency of genetic algorithm by stopping poor performers early

- Available options:

Enabled: Uses early stopping for individuals

Disabled: Trains all individuals for full duration

Requires: Boolean toggle (default: Enabled)

- text_dominated_limit_tuning

What it does: Controls tuning limits for text-dominated datasets

Purpose: Applies specialized tuning limits when text features dominate the dataset

Optimization: Adjusts tuning parameters for optimal text processing performance

- Available options:

Enabled: Applies text-dominated tuning limits

Disabled: Uses standard tuning limits

Requires: Boolean toggle (default: Enabled)

- image_dominated_limit_tuning

What it does: Controls tuning limits for image-dominated datasets

Purpose: Applies specialized tuning limits when image features dominate the dataset

Optimization: Adjusts tuning parameters for optimal image processing performance

- Available options:

Enabled: Applies image-dominated tuning limits

Disabled: Uses standard tuning limits

Requires: Boolean toggle (default: Enabled)

- supported_image_types

What it does: Specifies the supported image file types for image processing

Purpose: Defines which image formats can be processed by the system

Compatibility: Ensures only supported image types are processed

Format: List of supported image file extensions

Requires: List of strings (default: [“jpg”, “jpeg”, “png”, “bmp”, “tiff”])

- image_paths_absolute

What it does: Controls whether image paths are treated as absolute paths

Purpose: Determines how image file paths are interpreted and resolved

Path handling: Absolute paths are resolved from root, relative paths from current directory

- Available options:

Enabled: Treats image paths as absolute

Disabled: Treats image paths as relative

Requires: Boolean toggle (default: Disabled)

- text_dl_token_pad_percentile

What it does: Sets the percentile for token padding in deep learning text processing

Purpose: Controls token sequence padding length based on dataset percentile

Padding: Determines how much padding to add to text sequences for consistent length

Percentile: Uses specified percentile of sequence lengths for padding calculation

Requires: Integer value (default: 99)

- text_dl_token_pad_max

What it does: Sets the maximum token padding length for deep learning text processing

Purpose: Limits the maximum length of padded text sequences

Padding: Prevents excessive padding that could waste memory or computation

Limit: Text sequences are padded up to this maximum length

Requires: Integer value (default: 512)

- tune_parameters_accuracy_switch

What it does: Sets the accuracy threshold for switching to parameter tuning

Purpose: Determines when to enable parameter tuning based on model accuracy

Optimization: Uses basic parameters until accuracy reaches this threshold

Threshold: Models with accuracy above this value trigger parameter tuning

Requires: Integer value (default: 3)

- tune_target_transform_accuracy_switch

What it does: Sets the accuracy threshold for switching to target transformation tuning

Purpose: Determines when to enable target transformation tuning based on model accuracy

Optimization: Uses standard target handling until accuracy reaches this threshold

Threshold: Models with accuracy above this value trigger target transformation tuning

Requires: Integer value (default: 5)

- tournament_uniform_style_interpretability_switch

What it does: Sets the interpretability threshold for uniform style tournament selection

Purpose: Determines when to use uniform style tournament based on interpretability setting

Tournament: Controls tournament selection strategy based on interpretability requirements

Threshold: Interpretability settings above this value trigger uniform style tournament

Requires: Integer value (default: 8)

- tournament_uniform_style_accuracy_switch

What it does: Sets the accuracy threshold for uniform style tournament selection

Purpose: Determines when to use uniform style tournament based on model accuracy

Tournament: Controls tournament selection strategy based on accuracy requirements

Threshold: Models with accuracy above this value trigger uniform style tournament

Requires: Integer value (default: 6)

- tournament_model_style_accuracy_switch

What it does: Sets the accuracy threshold for model style tournament selection

Purpose: Determines when to use model style tournament based on model accuracy

Tournament: Controls tournament selection strategy focusing on model characteristics

Threshold: Models with accuracy above this value trigger model style tournament

Requires: Integer value (default: 6)

- tournament_feature_style_accuracy_switch

What it does: Sets the accuracy threshold for feature style tournament selection

Purpose: Determines when to use feature style tournament based on model accuracy

Tournament: Controls tournament selection strategy focusing on feature characteristics

Threshold: Models with accuracy above this value trigger feature style tournament

Requires: Integer value (default: 13)

- tournament_fullstack_style_accuracy_switch

What it does: Sets the accuracy threshold for fullstack style tournament selection

Purpose: Determines when to use fullstack style tournament based on model accuracy

Tournament: Controls tournament selection strategy using full pipeline evaluation

Threshold: Models with accuracy above this value trigger fullstack style tournament

Requires: Integer value (default: 13)

- tournament_use_feature_penalized_score

What it does: Controls whether to use feature-penalized scoring in tournament selection

Purpose: Applies penalty to scores based on feature complexity in tournament evaluation

Scoring: Adjusts model scores to account for feature engineering complexity

- Available options:

Enabled: Uses feature-penalized scoring

Disabled: Uses standard scoring without feature penalties

Requires: Boolean toggle (default: Enabled)

- tournament_keep_poor_scores_for_small_data

What it does: Controls whether to keep poor scoring models for small datasets

Purpose: Retains models with poor scores when working with limited data

Small data: Helps maintain diversity in model selection for small datasets

- Available options:

Enabled: Keeps poor scoring models for small data

Disabled: Removes poor scoring models regardless of dataset size

Requires: Boolean toggle (default: Enabled)

- tournament_remove_poor_scores_before_evolution_model_factor

What it does: Sets the model factor for removing poor scores before evolution phase

Purpose: Controls which models to remove based on score thresholds before evolution

Evolution: Filters out poor performers to focus evolution on better models

Factor: Multiplier applied to score thresholds for model removal decisions

Requires: Float value (default: 0.7)

- tournament_remove_worse_than_constant_before_evolution

What it does: Controls whether to remove models worse than constant models before evolution

Purpose: Removes models that perform worse than simple constant models

Evolution: Ensures evolution focuses on models better than baseline constant models

- Available options:

Enabled: Removes models worse than constants

Disabled: Keeps all models regardless of constant model performance

Requires: Boolean toggle (default: Enabled)

- tournament_keep_absolute_ok_scores_before_evolution_model_factor

What it does: Sets the model factor for keeping absolutely OK scores before evolution

Purpose: Controls retention of models with acceptable absolute scores before evolution

Evolution: Ensures models with good absolute performance are retained

Factor: Multiplier applied to absolute score thresholds for model retention

Requires: Float value (default: 0.2)

- tournament_remove_poor_scores_before_final_model_factor

What it does: Sets the model factor for removing poor scores before final model selection

Purpose: Controls which models to remove based on score thresholds before final selection

Final selection: Filters out poor performers to focus final selection on better models

Factor: Multiplier applied to score thresholds for final model removal decisions

Requires: Float value (default: 0.3)

- tournament_remove_worse_than_constant_before_final_model

What it does: Controls whether to remove models worse than constant models before final selection

Purpose: Removes models that perform worse than simple constant models before final selection

Final selection: Ensures final selection focuses on models better than baseline constants

- Available options:

Enabled: Removes models worse than constants

Disabled: Keeps all models regardless of constant model performance

Requires: Boolean toggle (default: Enabled)

- num_individuals

What it does: Sets the number of individuals in the genetic algorithm population

Purpose: Controls the population size for genetic algorithm evolution

Evolution: Larger populations provide more diversity but increase computation time

Balance: Typical values range from 2-10 individuals depending on problem complexity

Requires: Integer value (default: 2)

- cv_in_cv_overconfidence_protection_factor

What it does: Sets the protection factor for cross-validation within cross-validation overconfidence

Purpose: Provides protection against overconfident predictions in nested cross-validation

Overconfidence: Reduces overconfidence in model predictions through nested validation

Factor: Multiplier applied to overconfidence protection mechanisms

Requires: Integer value (default: 3)

- Exclude specific transformers

What it does: Specifies which transformers to exclude from the experiment

Purpose: Allows exclusion of specific transformers that may not be suitable for the dataset

Exclusion: Removes specified transformers from the available transformer pool

Format: List of transformer names or identifiers to exclude

Use case: Useful for excluding transformers known to cause issues with specific data types

Requires: List of strings (default: [])

- Exclude specific genes

What it does: Specifies which genes (transformer instances) to exclude from the experiment

Purpose: Allows exclusion of specific gene configurations from genetic algorithm

Exclusion: Removes specified genes from the available gene pool

Format: List of gene identifiers or configurations to exclude

Use case: Useful for excluding problematic gene configurations

Requires: List of strings (default: [])

- Exclude specific models

What it does: Specifies which models to exclude from the experiment

Purpose: Allows exclusion of specific models that may not be suitable for the dataset

Exclusion: Removes specified models from the available model pool

Format: List of model names or types to exclude

Use case: Useful for excluding models that don’t work well with specific data characteristics

Requires: List of strings (default: [])

- Exclude specific pretransformers

What it does: Specifies which pretransformers to exclude from the experiment

Purpose: Allows exclusion of specific pretransformers that may not be suitable

Exclusion: Removes specified pretransformers from the available pretransformer pool

Format: List of pretransformer names or types to exclude

Use case: Useful for excluding pretransformers that cause issues with specific data

Requires: List of strings (default: [])

- Exclude specific data recipes

What it does: Specifies which data recipes to exclude from the experiment

Purpose: Allows exclusion of specific data recipes that may not be suitable

Exclusion: Removes specified data recipes from the available recipe pool

Format: List of data recipe names or types to exclude

Use case: Useful for excluding data recipes that don’t work well with specific datasets

Requires: List of strings (default: [])

- Exclude specific individual recipes

What it does: Specifies which individual recipes to exclude from the experiment

Purpose: Allows exclusion of specific individual recipe configurations

Exclusion: Removes specified individual recipes from the available recipe pool

Format: List of individual recipe identifiers to exclude

Use case: Useful for excluding problematic individual recipe configurations

Requires: List of strings (default: [])

- Exclude specific scorers

What it does: Specifies which scorers to exclude from the experiment

Purpose: Allows exclusion of specific scorers that may not be suitable for the problem type

Exclusion: Removes specified scorers from the available scorer pool

Format: List of scorer names or types to exclude

Use case: Useful for excluding scorers that don’t work well with specific problem types

Requires: List of strings (default: [])

- use_dask_for_1_gpu

What it does: Controls whether to use Dask distributed computing for single GPU scenarios

Purpose: Enables Dask distributed processing even when only one GPU is available

Distributed: Provides distributed computing benefits even in single GPU setups

- Available options:

Enabled: Uses Dask for single GPU scenarios

Disabled: Uses standard processing for single GPU

Requires: Boolean toggle (default: Disabled)

- Set Optuna pruner constructor args

What it does: Sets the constructor arguments for Optuna pruner configuration

Purpose: Configures Optuna hyperparameter optimization pruner behavior

Pruning: Controls how Optuna prunes unpromising trials during optimization

Configuration: JSON object with pruner-specific parameters

Default configuration: Includes startup trials, warmup steps, interval steps, and reduction factor

Requires: JSON object (default: {“n_startup_trials”:5,”n_warmup_steps”:20,”interval_steps”:20,”percentile”:25,”min_resource”:”auto”,”max_resource”:”auto”,”reduction_factor”:4,”min_early_stopping_rate”:0,”n_brackets”:4,”min_early_stopping_rate_low”:0,”upper”:1,”lower”:0})

- Set Optuna sampler constructor args

What it does: Sets the constructor arguments for Optuna sampler configuration

Purpose: Configures Optuna hyperparameter optimization sampler behavior

Sampling: Controls how Optuna samples hyperparameter values during optimization

Configuration: JSON object with sampler-specific parameters

Default configuration: Empty object uses default sampling behavior

Requires: JSON object (default: {})

- drop_constant_model_final_ensemble

What it does: Controls whether to drop constant models from the final ensemble

Purpose: Removes constant (baseline) models from the final ensemble selection

Ensemble: Ensures final ensemble focuses on non-constant models only

- Available options:

Enabled: Drops constant models from final ensemble

Disabled: Includes constant models in final ensemble

Requires: Boolean toggle (default: Enabled)

- xgboost_rf_exact_threshold_num_rows_x_cols

What it does: Sets the threshold for XGBoost Random Forest exact mode based on rows × columns

Purpose: Determines when to use exact mode for XGBoost Random Forest based on data size

Performance: Exact mode is more accurate but slower for large datasets

Threshold: Datasets with rows × columns below this value use exact mode

Requires: Integer value (default: 10000)

- Factor by which to drop max_leaves from effective max_depth value when doing loss_guide

What it does: Sets the factor for reducing max_leaves from max_depth in loss-guided training

Purpose: Controls the relationship between max_depth and max_leaves in loss-guided mode

Training: Adjusts leaf count to optimize loss-guided training performance

Factor: Divides max_depth by this factor to determine effective max_leaves

Requires: Integer value (default: 4)

- Factor by which to extend max_depth mutations when doing loss_guide

What it does: Sets the factor for extending max_depth mutations in loss-guided training

Purpose: Controls how max_depth is extended during mutations in loss-guided mode

Mutations: Adjusts depth mutations to optimize loss-guided evolution

Factor: Multiplies max_depth by this factor during mutation operations

Requires: Integer value (default: 8)

- params_tune_grow_policy_simple_trees

What it does: Controls whether to force max_leaves=0 when grow_policy=”depthwise” and max_depth=0 when grow_policy=”lossguide” during simple tree model tuning.

Purpose: Ensures that the tree parameters are properly zeroed according to the chosen grow policy type for simple tree tuning.

- Available options:

Enabled: Forces max_leaves or max_depth to 0 according to the grow_policy setting.

Disabled: Does not force max_leaves or max_depth to 0.

Requires: Boolean toggle (default: Enabled)

- max_epochs_tf_big_data

What it does: Sets the maximum number of epochs for TensorFlow models on big datasets

Purpose: Limits training epochs for TensorFlow models when working with large datasets

Performance: Prevents excessive training time on large datasets

Limit: TensorFlow models stop training after this many epochs on big data

Requires: Integer value (default: 5)

- default_max_bin

What it does: Sets the default maximum number of bins for feature binning

Purpose: Controls the default binning resolution for numerical features

Binning: Higher values provide finer granularity but increase computation

Default: Used when no specific binning configuration is provided

Requires: Integer value (default: 256)

- default_lightgbm_max_bin

What it does: Sets the default maximum number of bins for LightGBM models

Purpose: Controls the default binning resolution specifically for LightGBM

LightGBM: Optimized binning parameter for LightGBM model performance

Default: Used when no specific LightGBM binning configuration is provided

Requires: Integer value (default: 249)

- min_max_bin

What it does: Sets the minimum maximum number of bins allowed

Purpose: Ensures a minimum level of binning granularity

Binning: Prevents excessive reduction in binning resolution

Minimum: Guarantees at least this many bins for numerical features

Requires: Integer value (default: 32)

- tensorflow_use_all_cores

What it does: Controls whether TensorFlow should use all available CPU cores

Purpose: Enables TensorFlow to utilize all CPU cores for parallel processing

Performance: Can significantly improve TensorFlow training and inference speed

- Available options:

Enabled: Uses all available CPU cores

Disabled: Uses default TensorFlow core allocation

Requires: Boolean toggle (default: Enabled)

- tensorflow_use_all_cores_even_if_reproducible_true

What it does: Controls whether TensorFlow uses all cores even when reproducibility is enabled

Purpose: Allows full core utilization even in reproducible mode

Reproducibility: May slightly affect reproducibility but improves performance

- Available options:

Enabled: Uses all cores regardless of reproducibility setting

Disabled: Respects reproducibility settings for core allocation

Requires: Boolean toggle (default: Disabled)

- tensorflow_disable_memory_optimization

What it does: Controls whether to disable TensorFlow memory optimization

Purpose: Allows disabling TensorFlow’s automatic memory optimization features

Memory: May use more memory but can improve performance in some cases

- Available options:

Enabled: Disables TensorFlow memory optimization

Disabled: Uses TensorFlow default memory optimization

Requires: Boolean toggle (default: Enabled)

- tensorflow_cores

What it does: Sets the number of CPU cores to use for TensorFlow operations

Purpose: Controls TensorFlow CPU core allocation for parallel processing

Performance: More cores can improve TensorFlow performance for large models

Auto mode (0): Uses automatic core allocation

Custom value: Limits TensorFlow to specified number of cores

Requires: Integer value (default: 0)

- tensorflow_model_max_cores

What it does: Sets the maximum number of cores per TensorFlow model

Purpose: Controls maximum core allocation for individual TensorFlow models

Performance: Limits per-model core usage to prevent resource contention

Limit: Each TensorFlow model uses at most this many cores

Requires: Integer value (default: 4)

- bert_cores

What it does: Sets the number of CPU cores to use for BERT model operations

Purpose: Controls BERT model CPU core allocation for parallel processing

Performance: More cores can improve BERT model performance

Auto mode (0): Uses automatic core allocation

Custom value: Limits BERT models to specified number of cores

Requires: Integer value (default: 0)

- bert_use_all_cores

What it does: Controls whether BERT models should use all available CPU cores

Purpose: Enables BERT models to utilize all CPU cores for parallel processing

Performance: Can significantly improve BERT model training and inference speed

- Available options:

Enabled: Uses all available CPU cores

Disabled: Uses default BERT core allocation

Requires: Boolean toggle (default: Enabled)

- bert_model_max_cores

What it does: Sets the maximum number of cores per BERT model

Purpose: Controls maximum core allocation for individual BERT models

Performance: Limits per-model core usage to prevent resource contention

Limit: Each BERT model uses at most this many cores

Requires: Integer value (default: 8)

- rulefit_max_tree_depth

What it does: Sets the maximum tree depth for RuleFit models

Purpose: Controls the maximum depth of trees used in RuleFit ensemble

RuleFit: Deeper trees can capture more complex patterns but increase overfitting risk

Limit: RuleFit trees are limited to this maximum depth

Requires: Integer value (default: 6)

- rulefit_max_num_trees

What it does: Sets the maximum number of trees for RuleFit models

Purpose: Controls the maximum number of trees in the RuleFit ensemble

RuleFit: More trees can improve performance but increase computation time

Limit: RuleFit ensemble is limited to this maximum number of trees

Requires: Integer value (default: 500)

- Whether to show real levels in One Hot Encoding feature names

What it does: Controls whether to include actual level values in One Hot Encoding feature names

Purpose: Determines feature name format for One Hot Encoding transformations

Feature names: Real levels can make feature names longer but more descriptive

Aggregation: Can cause feature aggregation problems when switching between binning modes

- Available options:

Enabled: Shows real levels in feature names

Disabled: Uses generic feature names without real levels

Requires: Boolean toggle (default: Disabled)

- Enable basic logging and notifications for ensemble meta learner

What it does: Enables basic logging and notifications for ensemble meta learner operations

Purpose: Provides logging information about ensemble meta learner performance

Monitoring: Helps track ensemble meta learner behavior and performance

- Available options:

Enabled: Enables basic ensemble meta learner logging

Disabled: Disables ensemble meta learner logging

Requires: Boolean toggle (default: Enabled)

- Enable extra logging for ensemble meta learner

What it does: Enables additional detailed logging for ensemble meta learner operations

Purpose: Provides comprehensive logging information about ensemble meta learner

Monitoring: Includes detailed performance metrics and behavior tracking

- Available options:

Enabled: Enables extra ensemble meta learner logging

Disabled: Uses only basic ensemble meta learner logging

Requires: Boolean toggle (default: Disabled)

- Maximum number of fold IDs to show in logs

What it does: Sets the maximum number of fold IDs to display in log messages

Purpose: Limits the verbosity of fold-related log information

Logging: Prevents log messages from becoming too long with many folds

Limit: Only shows fold IDs up to this maximum number in logs

Requires: Integer value (default: 10)

- Declare positive fold scores as unstable if stddev / mean is larger than this value

What it does: Sets the threshold for declaring fold scores as unstable based on coefficient of variation

Purpose: Identifies unstable fold scores that may indicate overfitting or data issues

Stability: Higher values indicate more variable fold scores

Threshold: Fold scores with stddev/mean above this value are marked as unstable

Requires: Float value (default: 0.25)

- Perform stratified sampling for binary classification if the dataset has fewer rows than this

What it does: Sets the dataset size threshold for stratified sampling in binary classification

Purpose: Ensures stratified sampling is used for smaller binary classification datasets

Sampling: Stratified sampling helps maintain class balance in smaller datasets

Threshold: Datasets with fewer rows than this value use stratified sampling

Requires: Integer value (default: 1000000)

- Ratio of most frequent to least frequent class for imbalanced multiclass classification problems

What it does: Sets the class imbalance ratio threshold for triggering special multiclass handling

Purpose: Identifies severely imbalanced multiclass problems requiring special treatment

Imbalance: Higher ratios indicate more severe class imbalance

Threshold: Problems with class ratios above this value trigger special handling

Requires: Float value (default: 5)

- Ratio of most frequent to least frequent class for heavily imbalanced multiclass classification problems

What it does: Sets the class imbalance ratio threshold for triggering heavy imbalance handling

Purpose: Identifies extremely imbalanced multiclass problems requiring special treatment

Heavy imbalance: Very high ratios indicate extreme class imbalance

Threshold: Problems with class ratios above this value trigger heavy imbalance handling

Requires: Float value (default: 25)

**Whether to do rank averaging bagged models inside of imbalanced models, instead of probability

- averaging**

What it does: Controls whether to use rank averaging, instead of probability averaging, when bagging models inside of imbalanced models.

Purpose: Rank averaging can be helpful when ensembling diverse models when ranking metrics like AUC/Gini are optimized.

Averaging: Rank averaging may provide improved performance for imbalanced datasets focused on ranking metrics.

Note: No MOJO support yet for rank averaging in this context.

- Available options:

auto: Automatically decide if rank averaging should be applied * on: Always use rank averaging for bagged models in imbalanced settings

off: Never use rank averaging (always use probability averaging)

Requires: String selection (default: auto)

- imbalance_ratio_notification_threshold

What it does: Sets the class imbalance ratio threshold for sending notifications

Purpose: Triggers notifications when class imbalance exceeds this threshold

Monitoring: Alerts users to potential class imbalance issues

Threshold: Problems with class ratios above this value trigger notifications

Requires: Float value (default: 2)

- nbins_ftrl_list

What it does: Sets the list of binning values for FTRL (Follow The Regularized Leader) models

Purpose: Defines multiple binning options for FTRL model hyperparameter tuning

Tuning: Provides different binning granularities for FTRL model optimization

Values: List of binning values to test during FTRL model training

Requires: List of integers (default: [1000000,10000000,100000000])

- te_bin_list

What it does: Sets the list of binning values for Target Encoding (TE) transformations

Purpose: Defines multiple binning options for Target Encoding hyperparameter tuning

Tuning: Provides different binning granularities for Target Encoding optimization

Values: List of binning values to test during Target Encoding training

Requires: List of integers (default: [25,10,100,250])

- woe_bin_list

What it does: Sets the list of binning values for Weight of Evidence (WoE) transformations

Purpose: Defines multiple binning options for WoE hyperparameter tuning

Tuning: Provides different binning granularities for WoE optimization

Values: List of binning values to test during WoE training

Requires: List of integers (default: [25,10,100,250])

- ohe_bin_list

What it does: Sets the list of binning values for One Hot Encoding (OHE) transformations

Purpose: Defines multiple binning options for OHE hyperparameter tuning

Tuning: Provides different binning granularities for OHE optimization

Values: List of binning values to test during OHE training

Requires: List of integers (default: [10,25,50,75,100])

- binner_bin_list

What it does: Sets the list of binning values for Binner Transformer

Purpose: Defines multiple binning options for Binner Transformer hyperparameter tuning

Tuning: Provides different binning granularities for Binner Transformer optimization

Values: List of binning values to test during Binner Transformer training

Requires: List of integers (default: [5,10,20])

- Timeout in seconds for dropping duplicate rows in training data

What it does: Sets the timeout for duplicate row detection and removal in training data

Purpose: Prevents duplicate row operations from running indefinitely

Performance: Timeout increases proportionally with rows × columns growth

Timeout: Operation stops if time limit is exceeded

Requires: Integer value (default: 60)

- shift_check_text

What it does: Controls whether to perform shift detection on text features

Purpose: Enables distribution shift detection specifically for text columns

Text shift: Detects changes in text feature distributions between datasets

- Available options:

Enabled: Performs text shift detection

Disabled: Skips text shift detection

Requires: Boolean toggle (default: Disabled)

- use_rf_for_shift_if_have_lgbm

What it does: Controls whether to use Random Forest for shift detection when LightGBM is available

Purpose: Optimizes shift detection algorithm selection based on available models

Algorithm: Random Forest may be more robust for shift detection in some cases

- Available options:

Enabled: Uses Random Forest for shift detection when LightGBM is available

Disabled: Uses LightGBM for shift detection

Requires: Boolean toggle (default: Enabled)

- shift_key_features_varimp

What it does: Sets the variable importance threshold for key features in shift detection

Purpose: Controls which features are considered key for shift detection

Feature selection: Only features above this importance threshold are used for shift detection

Threshold: Features with variable importance below this value are excluded

Requires: Float value (default: 0.01)

- shift_check_reduced_features

What it does: Controls whether to perform shift detection on reduced feature sets

Purpose: Enables shift detection using dimensionality-reduced features

Reduction: Can improve shift detection performance and reduce computation

- Available options:

Enabled: Performs shift detection on reduced features

Disabled: Uses full feature set for shift detection

Requires: Boolean toggle (default: Enabled)

- shift_trees

What it does: Sets the number of trees to use for shift detection models

Purpose: Controls the complexity of models used for distribution shift detection

Detection: More trees can improve shift detection accuracy but increase computation

Balance: Typical values range from 50-200 trees depending on dataset size

Requires: Integer value (default: 100)

- shift_max_bin

What it does: Sets the maximum number of bins for shift detection models

Purpose: Controls the binning granularity for shift detection feature processing

Binning: Higher values provide finer granularity but increase computation

Detection: Affects the sensitivity of shift detection algorithms

Requires: Integer value (default: 256)

- shift_min_max_depth

What it does: Sets the minimum maximum depth for shift detection trees

Purpose: Controls the minimum complexity of trees used for shift detection

Depth: Ensures trees have sufficient depth to capture shift patterns

Minimum: Trees are at least this deep for shift detection

Requires: Integer value (default: 4)

- shift_max_max_depth

What it does: Sets the maximum maximum depth for shift detection trees

Purpose: Controls the maximum complexity of trees used for shift detection

Depth: Prevents trees from becoming too complex and overfitting

Maximum: Trees are at most this deep for shift detection

Requires: Integer value (default: 8)

- detect_features_distribution_shift_threshold_auc

What it does: Sets the AUC threshold for detecting feature distribution shift

Purpose: Determines the sensitivity of feature distribution shift detection

Detection: Features with AUC above this threshold are flagged as having distribution shift

Threshold: Higher values require stronger evidence of shift to trigger detection

Requires: Float value (default: 0.55)

- leakage_check_text

What it does: Controls whether to perform leakage detection on text features

Purpose: Enables data leakage detection specifically for text columns

Text leakage: Detects potential data leakage in text features between training and test sets

- Available options:

Enabled: Performs text leakage detection

Disabled: Skips text leakage detection

Requires: Boolean toggle (default: Enabled)

- leakage_key_features_varimp

What it does: Sets the variable importance threshold for key features in leakage detection

Purpose: Controls which features are considered key for leakage detection

Feature selection: Only features above this importance threshold are used for leakage detection

Threshold: Features with variable importance below this value are excluded

Requires: Float value (default: 0.001)

- leakage_check_reduced_features

What it does: Controls whether to perform leakage detection on reduced feature sets

Purpose: Enables leakage detection using dimensionality-reduced features

Reduction: Can improve leakage detection performance and reduce computation

- Available options:

Enabled: Performs leakage detection on reduced features

Disabled: Uses full feature set for leakage detection

Requires: Boolean toggle (default: Enabled)

- use_rf_for_leakage_if_have_lgbm

What it does: Controls whether to use Random Forest for leakage detection when LightGBM is available

Purpose: Optimizes leakage detection algorithm selection based on available models

Algorithm: Random Forest may be more robust for leakage detection in some cases

- Available options:

Enabled: Uses Random Forest for leakage detection when LightGBM is available

Disabled: Uses LightGBM for leakage detection

Requires: Boolean toggle (default: Enabled)

- leakage_trees

What it does: Sets the number of trees to use for leakage detection models

Purpose: Controls the complexity of models used for data leakage detection

Detection: More trees can improve leakage detection accuracy but increase computation

Balance: Typical values range from 50-200 trees depending on dataset size

Requires: Integer value (default: 100)

- leakage_max_bin

What it does: Sets the maximum number of bins for leakage detection models

Purpose: Controls the binning granularity for leakage detection feature processing

Binning: Higher values provide finer granularity but increase computation

Detection: Affects the sensitivity of leakage detection algorithms

Requires: Integer value (default: 256)

- leakage_min_max_depth

What it does: Sets the minimum maximum depth for leakage detection trees

Purpose: Controls the minimum complexity of trees used for leakage detection

Depth: Ensures trees have sufficient depth to capture leakage patterns

Minimum: Trees are at least this deep for leakage detection

Requires: Integer value (default: 6)

- leakage_max_max_depth

What it does: Sets the maximum maximum depth for leakage detection trees

Purpose: Controls the maximum complexity of trees used for leakage detection

Depth: Prevents trees from becoming too complex and overfitting

Maximum: Trees are at most this deep for leakage detection

Requires: Integer value (default: 8)

- leakage_train_test_split

What it does: Sets the train/test split ratio for leakage detection

Purpose: Controls how data is split for leakage detection model training

Split: Determines the proportion of data used for training vs. testing

Ratio: Fraction of data used for training (0.25 = 25% training, 75% testing)

Requires: Float value (default: 0.25)

- Whether to report basic system information on server startup

What it does: Controls whether to display basic system information when the server starts

Purpose: Provides system overview information during server initialization

Startup: Shows system configuration and resource information at startup

- Available options:

Enabled: Reports basic system information on startup

Disabled: Skips system information reporting on startup

Requires: Boolean toggle (default: Enabled)

- abs_tol_for_perfect_score

What it does: Sets the absolute tolerance threshold for considering a score as perfect

Purpose: Defines the numerical tolerance for perfect score detection

Perfect score: Scores within this tolerance of theoretical maximum are considered perfect

Tolerance: Very small value to account for numerical precision issues

Requires: Float value (default: 0.0001)

- data_ingest_timeout

What it does: Sets the timeout in seconds for data ingestion operations

Purpose: Prevents data ingestion from running indefinitely

Timeout: Data ingestion operations stop if time limit is exceeded

Default: 86400 seconds (24 hours) for large dataset ingestion

Requires: Integer value (default: 86400)

- debug_daimodel_level

What it does: Sets the debug level for DAI model operations

Purpose: Controls the verbosity of debug information for model operations

Debug levels: Higher values provide more detailed debug information

Levels: 0 = minimal, 1 = standard, 2 = detailed, 3 = comprehensive

Requires: Integer value (default: 0)

- Whether to show detailed predict information in logs

What it does: Controls whether to display detailed prediction information in log messages

Purpose: Provides comprehensive logging of prediction operations and results

Logging: Includes detailed information about prediction processes and outputs

- Available options:

Enabled: Shows detailed prediction information in logs

Disabled: Uses standard prediction logging

Requires: Boolean toggle (default: Enabled)

- Whether to show detailed fit information in logs

What it does: Controls whether to display detailed model fitting information in log messages

Purpose: Provides comprehensive logging of model training operations and results

Logging: Includes detailed information about model fitting processes and performance

- Available options:

Enabled: Shows detailed fit information in logs

Disabled: Uses standard fit logging

Requires: Boolean toggle (default: Enabled)

- show_inapplicable_models_preview

What it does: Controls whether to show inapplicable models in the preview interface

Purpose: Displays models that are not applicable to the current dataset or configuration

Preview: Helps users understand which models are excluded and why

- Available options:

Enabled: Shows inapplicable models in preview

Disabled: Hides inapplicable models from preview

Requires: Boolean toggle (default: Disabled)

- show_inapplicable_transformers_preview

What it does: Controls whether to show inapplicable transformers in the preview interface

Purpose: Displays transformers that are not applicable to the current dataset or configuration

Preview: Helps users understand which transformers are excluded and why

- Available options:

Enabled: Shows inapplicable transformers in preview

Disabled: Hides inapplicable transformers from preview

Requires: Boolean toggle (default: Disabled)

- show_warnings_preview

What it does: Controls whether to show warning messages in the preview interface

Purpose: Displays warning information about potential issues or recommendations

Preview: Helps users identify potential problems before starting experiments

- Available options:

Enabled: Shows warnings in preview

Disabled: Hides warnings from preview

Requires: Boolean toggle (default: Disabled)

- show_warnings_preview_unused_map_features

What it does: Controls whether to show warnings about unused map features in preview

Purpose: Displays warnings when map features are defined but not used

Map features: Helps users identify unused feature mapping configurations

- Available options:

Enabled: Shows unused map feature warnings

Disabled: Hides unused map feature warnings

Requires: Boolean toggle (default: Enabled)

- max_cols_show_unused_features

What it does: Sets the maximum number of columns to show for unused features warnings

Purpose: Limits the verbosity of unused features warning messages

Warnings: Prevents warning messages from becoming too long with many unused features

Limit: Only shows unused features up to this maximum number in warnings

Requires: Integer value (default: 1000)

- max_cols_show_feature_transformer_mapping

What it does: Sets the maximum number of columns to show for feature transformer mapping

Purpose: Limits the verbosity of feature transformer mapping display

Mapping: Prevents mapping displays from becoming too long with many features

Limit: Only shows feature mappings up to this maximum number

Requires: Integer value (default: 1000)

- warning_unused_feature_show_max

What it does: Sets the maximum number of unused features to show in warning messages

Purpose: Limits the number of unused features displayed in warning messages

Warnings: Prevents warning messages from becoming too long with many unused features

Limit: Only shows up to this many unused features in warnings

Requires: Integer value (default: 3)

- interaction_finder_max_rows_x_cols

What it does: Sets the maximum rows × columns threshold for interaction finder operations

Purpose: Limits the scope of interaction detection to prevent excessive computation

Performance: Interaction finding can be computationally expensive on large datasets

Threshold: Datasets with rows × columns above this value may skip interaction finding

Requires: Integer value (default: 200000)

- interaction_finder_corr_threshold

What it does: Sets the correlation threshold for interaction finder detection

Purpose: Controls the sensitivity of interaction detection based on feature correlations

Detection: Higher thresholds require stronger correlations to detect interactions

Threshold: Features with correlations above this value may be considered for interactions

Requires: Float value (default: 0.95)

- Minimum number of bootstrap samples

What it does: Sets the minimum number of bootstrap samples for statistical operations

Purpose: Ensures sufficient bootstrap samples for reliable statistical estimates

Bootstrap: More samples provide more robust statistical estimates

Minimum: Guarantees at least this many bootstrap samples are used

Requires: Integer value (default: 1)

- Maximum number of bootstrap samples

What it does: Sets the maximum number of bootstrap samples for statistical operations

Purpose: Limits the number of bootstrap samples to prevent excessive computation

Bootstrap: Prevents bootstrap operations from running too long

Maximum: Bootstrap operations use at most this many samples

Requires: Integer value (default: 100)

- Minimum fraction of rows to use for bootstrap samples

What it does: Sets the minimum fraction of rows to include in bootstrap samples

Purpose: Ensures bootstrap samples contain sufficient data for reliable estimates

Sampling: Higher fractions provide more robust bootstrap estimates

Minimum: Bootstrap samples contain at least this fraction of original rows

Requires: Float value (default: 1)

- Maximum fraction of rows to use for bootstrap samples

What it does: Sets the maximum fraction of rows to include in bootstrap samples

Purpose: Limits the size of bootstrap samples to control computation time

Sampling: Prevents bootstrap samples from becoming too large

Maximum: Bootstrap samples contain at most this fraction of original rows

Requires: Float value (default: 10)

- Seed to use for final model bootstrap sampling

What it does: Sets the random seed for final model bootstrap sampling operations

Purpose: Ensures reproducible bootstrap sampling for final model evaluation

Reproducibility: Same seed produces identical bootstrap samples across runs

Auto mode (-1): Uses random seed for each bootstrap operation

Custom value: Uses specified seed for reproducible bootstrap sampling

Requires: Integer value (default: -1)

- benford_mad_threshold_int

What it does: Sets the Mean Absolute Deviation (MAD) threshold for Benford’s Law validation on integer data

Purpose: Controls the sensitivity of Benford’s Law compliance detection for integer features

Benford’s Law: Validates whether integer data follows expected digit distribution patterns

Threshold: Integer features with MAD above this value may violate Benford’s Law

Requires: Float value (default: 0.03)

- benford_mad_threshold_real

What it does: Sets the Mean Absolute Deviation (MAD) threshold for Benford’s Law validation on real number data

Purpose: Controls the sensitivity of Benford’s Law compliance detection for real number features

Benford’s Law: Validates whether real number data follows expected digit distribution patterns

Threshold: Real number features with MAD above this value may violate Benford’s Law

Requires: Float value (default: 0.1)

- Use tuning-evolution search result for final model transformer

What it does: Controls whether to use tuning-evolution search results for final model transformer selection

Purpose: Applies evolutionary search results to final model transformer configuration

Evolution: Uses genetic algorithm results to optimize final model transformer settings

- Available options:

Enabled: Uses tuning-evolution results for final model

Disabled: Uses standard transformer selection for final model

Requires: Boolean toggle (default: Enabled)

- Factor of standard deviation of bootstrap scores by which to accept new model in genetic algorithm

What it does: Sets the factor for accepting new models in genetic algorithm based on bootstrap score variation

Purpose: Controls model acceptance threshold considering bootstrap score uncertainty

GA Selection: Helps balance exploration vs. exploitation in genetic algorithm

Factor: New models are accepted if score improvement exceeds this factor times bootstrap stddev

Requires: Float value (default: 0.01)

- Minimum number of bootstrap samples that are required to limit accepting new model

What it does: Sets the minimum bootstrap samples required before applying acceptance limitations

Purpose: Ensures sufficient bootstrap samples before using score-based acceptance criteria

Bootstrap: Provides reliable score estimates before applying acceptance thresholds

Minimum: At least this many bootstrap samples are required for acceptance limitations

Requires: Integer value (default: 10)

- features_allowed_by_interpretability

What it does: Sets the maximum number of features allowed for each interpretability setting

Purpose: Controls feature complexity limits based on interpretability requirements