Install on NVIDIA GPU Cloud/NGC Registry

Driverless AI is supported on the following NVIDIA DGX products, and the installation steps for each platform are the same.

Environment

| Provider | GPUs | Min Memory | Suitable for |
|---|---|---|---|
| NVIDIA GPU Cloud | Yes | | Serious use |
| NVIDIA DGX-1/DGX-2 | Yes | 128 GB | Serious use |
| NVIDIA DGX Station | Yes | 64 GB | Serious use |

Installing the NVIDIA NGC Registry

Note: These installation instructions assume that you are running on an NVIDIA DGX machine. Driverless AI is only available in the NGC registry for DGX machines.

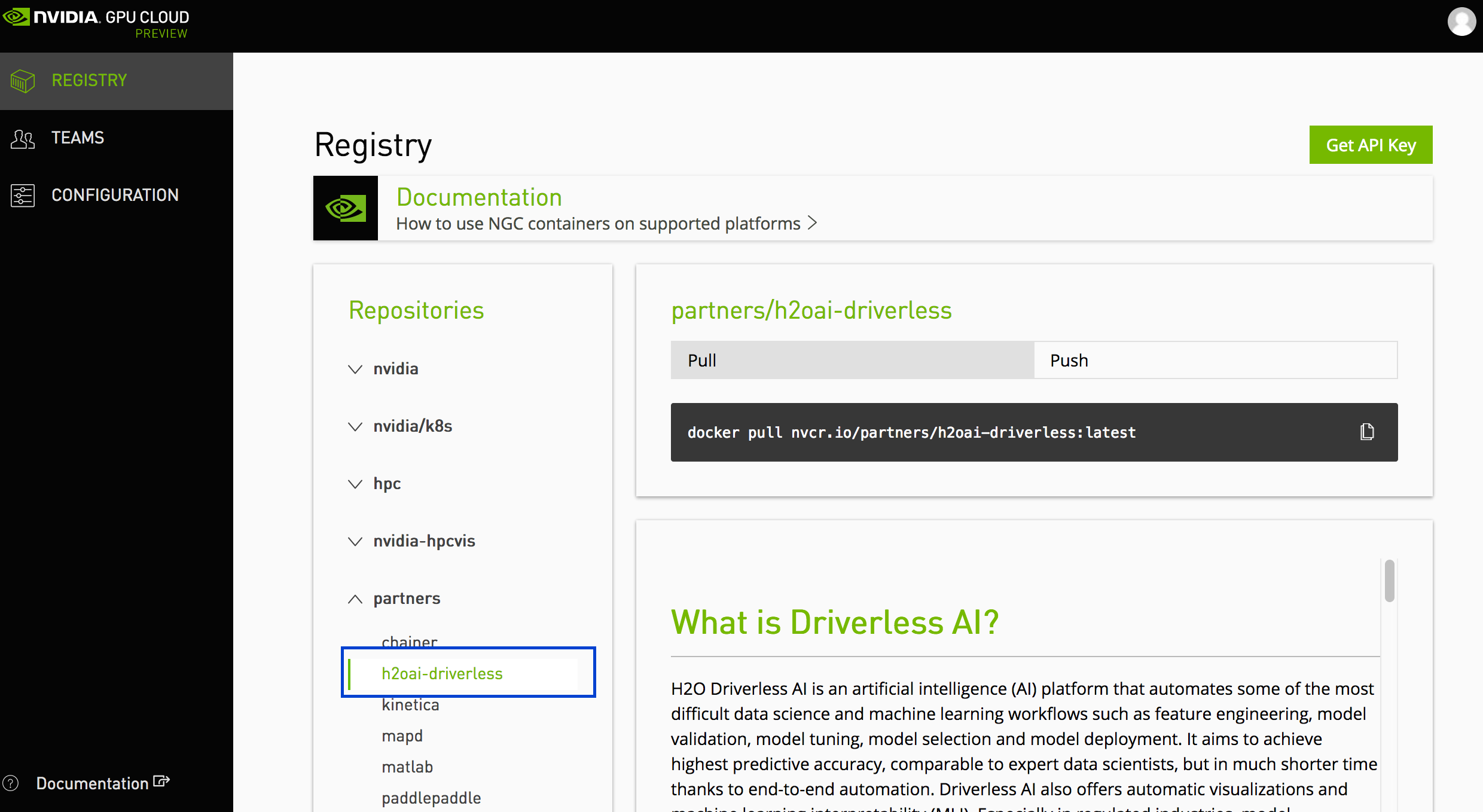

Log in to your NVIDIA GPU Cloud account at https://ngc.nvidia.com/registry. (Note that NVIDIA Compute is no longer supported by NVIDIA.)

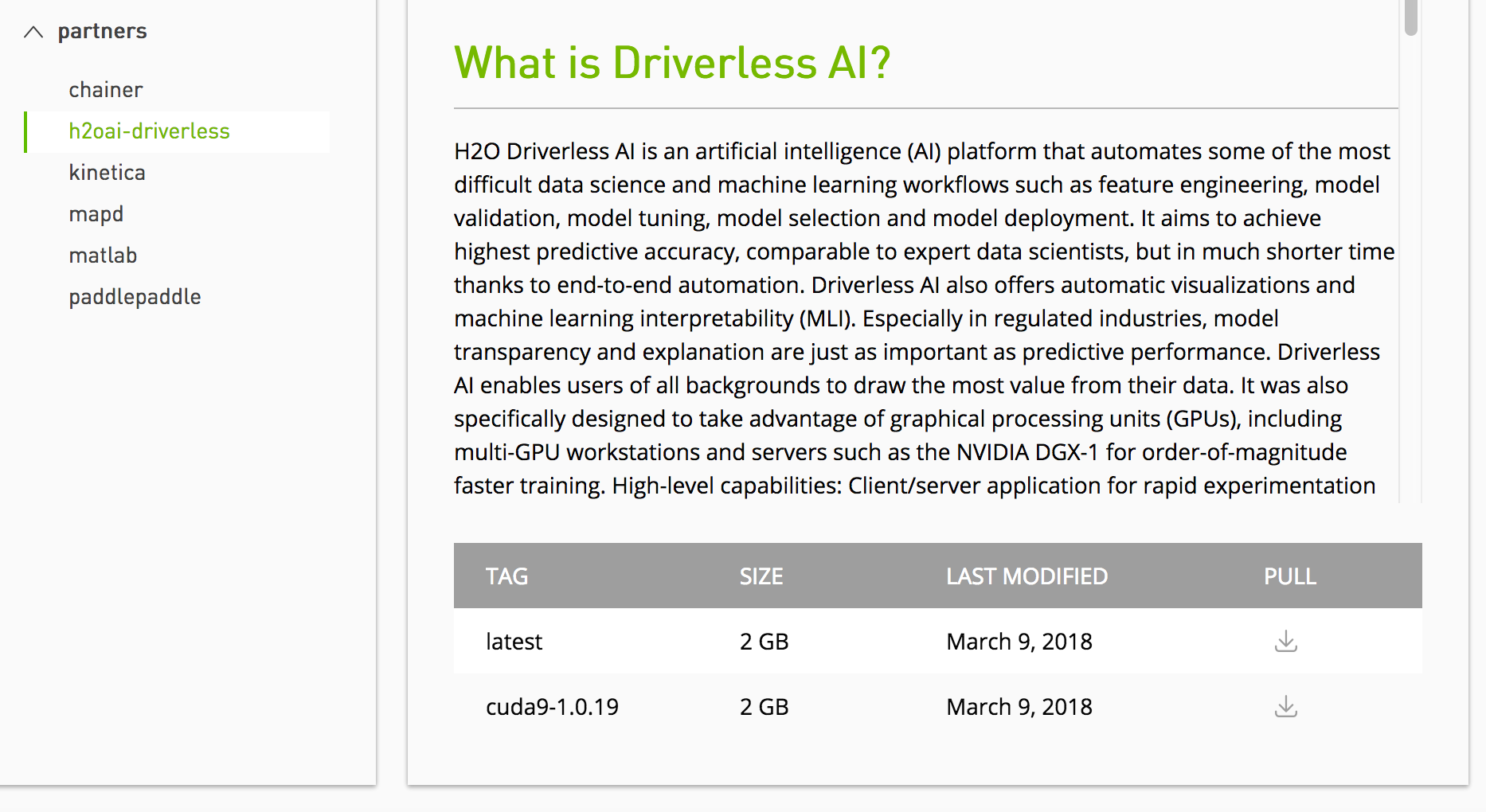

In the Registry > Partners menu, select h2oai-driverless.

At the bottom of the screen, select one of the H2O Driverless AI tags to retrieve the pull command.

On your NVIDIA DGX machine, open a command prompt and use the specified pull command to retrieve the Driverless AI image. For example:

docker pull nvcr.io/nvidia_partners/h2o-driverless-ai:latest

Set up a directory for the version of Driverless AI on the host machine:

    # Set up directory with the version name
    mkdir dai-1.10.7.5

Set up the data, log, license, and tmp directories on the host machine:

    # cd into the directory associated with the selected version of Driverless AI
    cd dai-1.10.7.5

    # Set up the data, log, license, and tmp directories on the host machine
    mkdir data
    mkdir log
    mkdir license
    mkdir tmp

At this point, you can copy data into the data directory on the host machine. The data will be visible inside the Docker container.
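The directory setup above can also be scripted in one pass. The following is a minimal sketch, not part of the official instructions: `DAI_VERSION`, `DAI_BASE`, and `DAI_DIR` are illustrative shell variables chosen here, not Driverless AI settings.

```sh
# Sketch: create the version directory and its four subdirectories in one step.
# DAI_VERSION, DAI_BASE, and DAI_DIR are illustrative names, not DAI settings.
set -e

DAI_VERSION="${DAI_VERSION:-1.10.7.5}"
DAI_BASE="${DAI_BASE:-$PWD}"
DAI_DIR="$DAI_BASE/dai-$DAI_VERSION"

# mkdir -p creates missing parents and does not fail if a directory exists,
# so the script is safe to run more than once.
for sub in data log license tmp; do
    mkdir -p "$DAI_DIR/$sub"
done

echo "Created $DAI_DIR with: data log license tmp"
```

Because `mkdir -p` is idempotent, rerunning the script against an existing directory leaves its contents untouched.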

Enable persistence of the GPU. Note that this only needs to be run once. Refer to the following for more information: http://docs.nvidia.com/deploy/driver-persistence/index.html.

sudo nvidia-smi -pm 1
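To confirm that the setting took effect, the persistence mode can be queried per GPU. This is a sketch under the assumption that `nvidia-smi --query-gpu=persistence_mode` prints `Enabled` or `Disabled` on one line per GPU; `all_persistent` is a hypothetical helper name.

```sh
# Sketch: report whether every GPU has persistence mode enabled.
# all_persistent is a hypothetical helper; it reads one mode per line on stdin.
all_persistent() {
    # grep -v selects lines that do NOT contain "Enabled"; if any such line
    # exists, grep succeeds and the leading "!" turns that into a failure.
    ! grep -qv 'Enabled'
}

if command -v nvidia-smi >/dev/null 2>&1; then
    if nvidia-smi --query-gpu=persistence_mode --format=csv,noheader | all_persistent; then
        echo "Persistence mode is enabled on all GPUs"
    else
        echo "Persistence mode is off on at least one GPU; run: sudo nvidia-smi -pm 1"
    fi
else
    echo "nvidia-smi not found on PATH"
fi
```

Note that empty input counts as success in this sketch, so it is only meaningful on a machine where `nvidia-smi` actually lists GPUs.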

Run `docker images` to find the new image tag.

Start the Driverless AI Docker image, replacing the image tag in the command below with your image tag. Depending on your installed Docker version, use either the `docker run --runtime=nvidia` (>= Docker 19.03) or the `nvidia-docker` (< Docker 19.03) command. Note that from version 1.10, the Driverless AI Docker image runs with an internal `tini` that is equivalent to using `--init` from Docker. If both are enabled in the launch command, tini prints a (harmless) warning message.

We recommend `--shm-size=2g --cap-add=SYS_NICE --ulimit nofile=131071:131071 --ulimit nproc=16384:16384` in the Docker launch command. If you plan to build image auto models extensively, `--shm-size=4g` is recommended instead.

Note: Use `docker version` to check which version of Docker you are using.

    # Start the Driverless AI Docker image (>= Docker 19.03)
    docker run --runtime=nvidia \
        --pid=host \
        --rm \
        --shm-size=2g --cap-add=SYS_NICE --ulimit nofile=131071:131071 --ulimit nproc=16384:16384 \
        -u `id -u`:`id -g` \
        -p 12345:12345 \
        -v `pwd`/data:/data \
        -v `pwd`/log:/log \
        -v `pwd`/license:/license \
        -v `pwd`/tmp:/tmp \
        h2oai/dai-ubi8-x86_64:1.10.7.5-cuda11.2.2.xx

    # Start the Driverless AI Docker image (< Docker 19.03)
    nvidia-docker run \
        --pid=host \
        --rm \
        --shm-size=2g --cap-add=SYS_NICE --ulimit nofile=131071:131071 --ulimit nproc=16384:16384 \
        -u `id -u`:`id -g` \
        -p 12345:12345 \
        -v `pwd`/data:/data \
        -v `pwd`/log:/log \
        -v `pwd`/license:/license \
        -v `pwd`/tmp:/tmp \
        h2oai/dai-ubi8-x86_64:1.10.7.5-cuda11.2.2.xx

Driverless AI will begin running:

    --------------------------------
    Welcome to H2O.ai's Driverless AI
    ---------------------------------

    - Put data in the volume mounted at /data
    - Logs are written to the volume mounted at /log/20180606-044258
    - Connect to Driverless AI on port 12345 inside the container
    - Connect to Jupyter notebook on port 8888 inside the container

Connect to Driverless AI with your browser:

http://Your-Driverless-AI-Host-Machine:12345
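The choice between the two launch commands in this section depends on the Docker version, with 19.03 as the cutoff. The sketch below shows one way to automate that choice; `launch_command` is a hypothetical helper, and it assumes the version string starts with numeric `major.minor` components.

```sh
# Sketch: pick the launch command from the Docker version, following the
# 19.03 cutoff given in this section. launch_command is a hypothetical helper.
launch_command() {
    version="$1"
    major="${version%%.*}"      # text before the first dot
    rest="${version#*.}"        # text after the first dot
    minor="${rest%%.*}"         # second component only
    # Strip one leading zero so values such as "03" or "09" compare as decimals.
    minor="${minor#0}"
    minor="${minor:-0}"
    if [ "$major" -gt 19 ] || { [ "$major" -eq 19 ] && [ "$minor" -ge 3 ]; }; then
        echo "docker run --runtime=nvidia"
    else
        echo "nvidia-docker run"
    fi
}

if command -v docker >/dev/null 2>&1; then
    # --format takes a Go template; .Server.Version is the daemon version.
    server_version="$(docker version --format '{{.Server.Version}}' 2>/dev/null || true)"
    if [ -n "$server_version" ]; then
        echo "Docker $server_version: use '$(launch_command "$server_version")'"
    fi
fi
```

The helper only prints the command prefix; the remaining flags, volume mounts, and image name stay as shown in the launch commands in this section.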

Stopping Driverless AI

Use Ctrl+C to stop Driverless AI.

Upgrading Driverless AI

The steps for upgrading Driverless AI on an NVIDIA DGX system are similar to the installation steps.

WARNINGS:

This release deprecates experiments and MLI models from 1.7.0 and earlier.

Experiments, MLIs, and MOJOs reside in the Driverless AI tmp directory and are not automatically upgraded when Driverless AI is upgraded. We recommend you take the following steps before upgrading.

Build MLI models before upgrading.

Build MOJO pipelines before upgrading.

Stop Driverless AI and make a backup of your Driverless AI tmp directory before upgrading.

If you did not build MLI on a model before upgrading Driverless AI, then you will not be able to view MLI on that model after upgrading. Before upgrading, be sure to run MLI jobs on models that you want to continue to interpret in future releases. If that MLI job appears in the list of Interpreted Models in your current version, then it will be retained after upgrading.

If you did not build a MOJO pipeline on a model before upgrading Driverless AI, then you will not be able to build a MOJO pipeline on that model after upgrading. Before upgrading, be sure to build MOJO pipelines on all desired models and then back up your Driverless AI tmp directory.

The upgrade process inherits the service user and group from /etc/dai/User.conf and /etc/dai/Group.conf. You do not need to manually specify the DAI_USER or DAI_GROUP environment variables during an upgrade.

Note: Use Ctrl+C to stop Driverless AI if it is still running.
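The backup of the tmp directory recommended above could be taken with `tar`. This is a minimal sketch: `backup_tmp` is a hypothetical helper, and the archive name and timestamp format are arbitrary choices made here. Stop Driverless AI before running it.

```sh
# Sketch: archive the Driverless AI tmp directory before an upgrade.
# backup_tmp is a hypothetical helper; the archive naming is an arbitrary choice.
backup_tmp() {
    dai_dir="$1"    # directory that contains tmp/, for example dai-1.10.7.5
    out_dir="$2"    # existing directory where the archive is written
    stamp="$(date +%Y%m%d-%H%M%S)"
    archive="$out_dir/dai-tmp-backup-$stamp.tar.gz"
    # -C changes into dai_dir first, so the archive stores a relative "tmp/" path.
    tar -czf "$archive" -C "$dai_dir" tmp && echo "$archive"
}

# Example call (paths are placeholders):
#   backup_tmp "$PWD/dai-1.10.7.5" "$HOME/backups"
```

The function prints the archive path on success, which makes it easy to log or to verify afterwards with `tar -tzf`.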

Requirements

As of 1.7.0, CUDA 9 is no longer supported. Your host environment must have CUDA 10.0 or later with NVIDIA drivers >= 440.82 installed (GPU only). Driverless AI ships with its own CUDA libraries, but the driver must exist in the host environment. Go to https://www.nvidia.com/Download/index.aspx to get the latest NVIDIA Tesla V/P/K series driver.
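The driver requirement above can be checked on the host before upgrading. The following sketch compares only the first two components of the driver version against 440.82; `driver_ok` is a hypothetical helper, and the check reads the driver version reported for the first GPU only.

```sh
# Sketch: check a driver version string against the 440.82 minimum stated above.
# driver_ok is a hypothetical helper that compares major and minor components.
driver_ok() {
    version="$1"
    major="${version%%.*}"      # text before the first dot
    rest="${version#*.}"        # text after the first dot
    minor="${rest%%.*}"         # second component only
    # Strip one leading zero so a value such as "05" compares as a decimal.
    minor="${minor#0}"
    minor="${minor:-0}"
    [ "$major" -gt 440 ] || { [ "$major" -eq 440 ] && [ "$minor" -ge 82 ]; }
}

if command -v nvidia-smi >/dev/null 2>&1; then
    # Take the driver version reported for the first GPU.
    installed="$(nvidia-smi --query-gpu=driver_version --format=csv,noheader 2>/dev/null | head -n 1)"
    if [ -n "$installed" ] && driver_ok "$installed"; then
        echo "Driver $installed meets the 440.82 minimum"
    else
        echo "Driver '${installed:-unknown}' does not meet the 440.82 minimum"
    fi
else
    echo "nvidia-smi not found on PATH"
fi
```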

Upgrade Steps

On your NVIDIA DGX machine, create a directory for the new Driverless AI version.

Copy the data, log, license, and tmp directories from the previous Driverless AI directory into the new Driverless AI directory.

Run `docker pull nvcr.io/h2oai/h2oai-driverless-ai:latest` to retrieve the latest Driverless AI version.

Start the Driverless AI Docker image.

Connect to Driverless AI with your browser at http://Your-Driverless-AI-Host-Machine:12345.
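The first two upgrade steps (creating the new directory and copying the working directories) might be scripted as follows. This is a sketch only: `copy_dai_dirs` is a hypothetical helper, and the directory names in the example call are placeholders for your previous and new versions.

```sh
# Sketch: create the new version directory and copy the four working
# directories into it. copy_dai_dirs is a hypothetical helper.
copy_dai_dirs() {
    old_dir="$1"    # previous Driverless AI directory
    new_dir="$2"    # directory for the new version
    mkdir -p "$new_dir" || return 1
    for sub in data log license tmp; do
        # cp -a copies recursively and preserves ownership, permissions,
        # and timestamps where the file system allows it.
        cp -a "$old_dir/$sub" "$new_dir/" || return 1
    done
}

# Example call (directory names are placeholders):
#   copy_dai_dirs "$PWD/dai-OLD_VERSION" "$PWD/dai-NEW_VERSION"
```

Copying rather than moving keeps the previous directory intact, which complements the tmp backup recommended in the warnings above.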