Deploy Driverless AI models¶

This page describes how to deploy models in Driverless AI.

Note: AWS Lambda and REST server deployment options are no longer supported, and have been replaced by Triton Inference Server deployment.

Overview¶

Deploy from the UI¶

The following deployment options are available directly from the UI:

H2O MLOps deployment (Note that to use this deployment option, you must specify h2o_mlops_ui_url in the config.toml file.)

Additional deployment options¶

By default, each completed Driverless AI experiment (unless explicitly disabled or not available due to modified expert settings) creates at least one scoring pipeline for scoring in Python, C++, Java, and R.

The following is a list of deployment options and examples for deploying Driverless AI MOJO (Java and C++ with Python/R wrappers) and Python Scoring pipelines for production purposes. The deployment template documentation can be accessed from here. For more customized requirements, contact support@h2o.ai.

H2O MLOps deployment¶

H2O MLOps is an open, interoperable platform for model deployment, management, governance, and monitoring that features integration with Driverless AI. The following steps describe how you can create H2O MLOps deployments directly from the completed experiment page in Driverless AI.

Note: To use this deployment option, you must specify h2o_mlops_ui_url in the config.toml file.

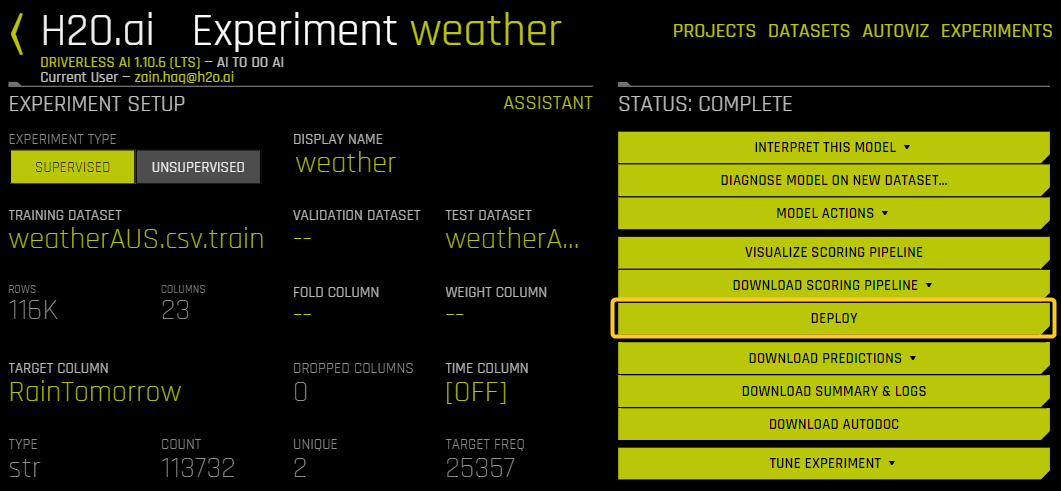

On the completed experiment page, click Deploy.

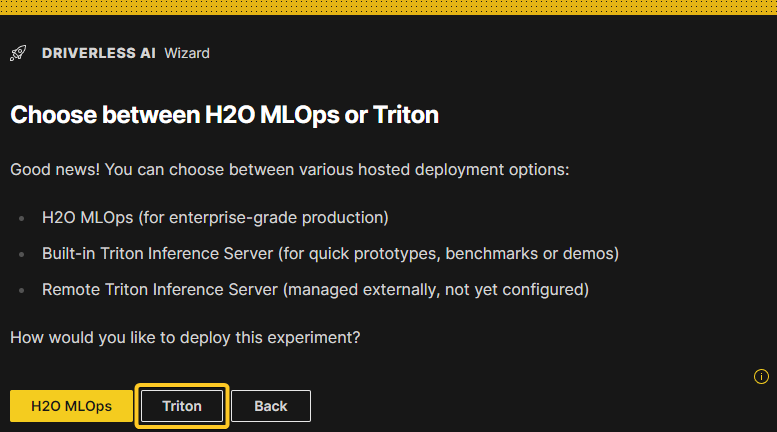

Click the H2O MLOps button. This action results in one of the following outcomes:

The experiment is assigned to a single Project: You are redirected to the Project detail page in the H2O MLOps app.

The experiment is assigned to multiple Projects: Select a project to go to in the H2O MLOps app. Alternatively, create a new Project to assign the experiment to. If you choose to create a new Project, you are prompted to enter a name and description for the Project. Once the new Project has been created and the experiment has been linked to it, you can click the Go to MLOps page button to navigate to the Project detail page in the H2O MLOps app.

The experiment isn’t assigned to any Project: Select a Project to link the experiment to. Alternatively, create a new Project and link the experiment to it.

Triton Inference Server deployment¶

Driverless AI provides a built-in Triton Inference Server that can be used for testing, prototyping, or benchmarking. NVIDIA Triton Inference Server is open source inference serving software that streamlines AI inferencing. For business-critical deployments, use a remote Triton server or run Driverless AI in Triton-only mode rather than running Triton alongside a Driverless AI web server. For more advanced model operations, see H2O MLOps.

Notes:

Currently, only CPUs are supported.

Triton Inference Server deployments cannot be accessed from H2O MLOps.

Description of relevant configuration options¶

Connect to external Triton inference server¶

This section describes the Expert Settings that need to be configured to connect to an external Triton inference server from Driverless AI.

triton_host_remote: Set the hostname or IP address of a remote Triton inference service (that is, an inference service outside of DAI). This setting is used when auto_deploy_triton_scoring_pipeline and make_triton_scoring_pipeline are not disabled. If this option is set, ensure that triton_model_repository_dir_remote and triton_server_params_remote are also set.

triton_model_repository_dir_remote: Set the path to the model repository directory for a remote Triton inference server outside of Driverless AI. All Triton deployments for all users are stored in this directory. Driverless AI requires write access to this directory (shared file system). Note that this setting is optional. If it isn't set, each model deployment is uploaded over the gRPC protocol, which only works for models smaller than 2GB due to protobuf limits. For larger models, it is recommended to use the Export functionality and place the downloaded file contents on the remote Triton server directly.

triton_server_params_remote: Set the parameters to connect to a remote Triton server. This option is only required when triton_host_remote and triton_model_repository_dir_remote are set.
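The transfer rule described for triton_model_repository_dir_remote can be summarized as a small decision function. The following is a minimal sketch and not Driverless AI code: the function name, the returned labels, and the exact byte value used for the 2GB limit are assumptions made for illustration.

```python
from typing import Optional

# Assumption: "2GB" is taken as 2 * 1024**3 bytes; the exact protobuf bound may differ slightly.
GRPC_UPLOAD_LIMIT_BYTES = 2 * 1024**3


def remote_deployment_path(model_size_bytes: int,
                           repository_dir_remote: Optional[str]) -> str:
    """Return which transfer route applies to a remote Triton deployment.

    The returned labels are hypothetical names used only in this sketch.
    """
    if repository_dir_remote:
        # A shared repository directory is configured, so the deployment is written there.
        return "shared_repository"
    if model_size_bytes < GRPC_UPLOAD_LIMIT_BYTES:
        # No shared directory: the deployment is uploaded over gRPC.
        return "grpc_upload"
    # Too large for gRPC: use Export and copy the files to the server manually.
    return "manual_export"
```

For example, a 3GB model with no shared repository directory falls through to the manual Export route.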

Built-in Triton inference server¶

enable_triton_server_local: Specify whether to enable the built-in Triton inference server. If this is set to false, you can still connect to a remote Triton inference server by setting triton_host. If this is set to true, the built-in Triton inference server starts.

triton_host_local: Set the hostname or IP address of a built-in Triton inference service, to be used when auto_deploy_triton_scoring_pipeline and make_triton_scoring_pipeline are not disabled. Only needed if enable_triton_server_local is disabled. This option must be set on some systems, such as AWS, for networking packages to reach the server.

triton_server_params_local: Specify Triton server command line arguments passed with --key=value.

triton_model_repository_dir_local: Set the path to the model repository (relative to data_directory) for the local Triton inference server built into Driverless AI. Note that all Triton deployments for all users are stored in this directory.

triton_server_core_chunk_size_local: Specify the number of cores to allocate as a resource so that the C++ MOJO can use its own multi-threaded parallel row batching to save memory and increase performance. A value of 1 is the most portable across any Triton server and is the most efficient use of resources for small batch sizes (for example, 1), while 4 is a reasonable default (assuming requests are batched).
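The --key=value format mentioned for triton_server_params_local can be illustrated with a short sketch that turns a dictionary of parameters into command line arguments. The helper name and the example keys are assumptions chosen for illustration, and how Driverless AI itself parses this setting is not shown here.

```python
from typing import Dict, List, Union


def to_triton_args(params: Dict[str, Union[str, int]]) -> List[str]:
    """Format each parameter as a --key=value command line argument."""
    return [f"--{key}={value}" for key, value in params.items()]


# Hypothetical parameter values, used only to show the resulting format.
example_args = to_triton_args({"http-port": 8000, "model-control-mode": "explicit"})
print(example_args)
```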

DAI Deployment Wizard¶

The following steps describe how to use the DAI Deployment Wizard to create and manage Triton Inference Server deployments.

On the completed experiment page, click the Deploy button.

Click the Triton button. (Note that this step may not be required on standalone DAI.)

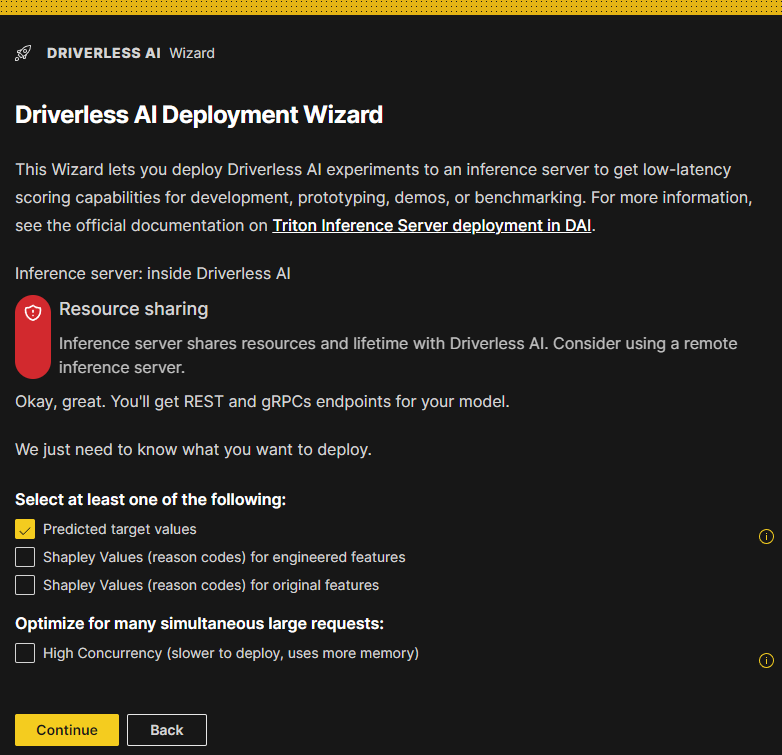

The DAI Deployment Wizard is displayed. This Wizard lets you deploy Driverless AI experiments to an inference server to get low-latency scoring capabilities for development, prototyping, demos, or benchmarking.

Select at least one of the following:

Predicted target values

Shapley values (reason codes) for engineered features

Shapley values (reason codes) for original features

(Optional) If you want to optimize the deployment for many simultaneous large requests, select the High Concurrency (slower to deploy, uses more memory) option. For more information, see the following subsection.

Confirm your selections and click the Continue button to proceed.

High concurrency¶

By default, a single model instance is deployed, which can handle multiple incoming requests simultaneously and efficiently. If you expect to create many simultaneous requests, each with a large number of records to score, and require higher overall throughput, select High Concurrency (slower to deploy, uses more memory). Selecting this option causes multiple deployment instances to be created, depending on the hardware resources and the triton_server_core_chunk_size_local expert setting. Note that multiple instances take more time to launch and require more memory.

Note: P99 Latency and Throughput numbers are not affected by the high concurrency setting because the built-in simple benchmark is not performing simultaneous requests.
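Because the built-in benchmark does not issue simultaneous requests, the effect of the high concurrency option has to be measured separately. The following is a minimal sketch of such a measurement, assuming a caller-supplied score_fn that sends one batch to the deployment and returns its predictions. The function names and the stand-in scorer are hypothetical and are not part of Driverless AI.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence, Tuple


def concurrent_throughput(score_fn: Callable[[Sequence], List],
                          batches: List[Sequence],
                          workers: int) -> Tuple[float, List[List]]:
    """Score all batches with `workers` simultaneous requests.

    Returns the overall rows per second and the per-batch results.
    """
    total_rows = sum(len(batch) for batch in batches)
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves the order of the input batches.
        results = list(pool.map(score_fn, batches))
    elapsed = max(time.perf_counter() - start, 1e-9)  # guard against a zero interval
    return total_rows / elapsed, results


def fake_score(batch: Sequence) -> List[float]:
    """Stand-in scorer for local testing; replace with a real client call."""
    return [0.0 for _ in batch]


rows_per_second, predictions = concurrent_throughput(
    fake_score, [[1, 2, 3], [4, 5], [6]], workers=2
)
```

Running the same measurement against a default deployment and a high concurrency deployment gives a direct comparison under simultaneous load.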

Deployed models list¶

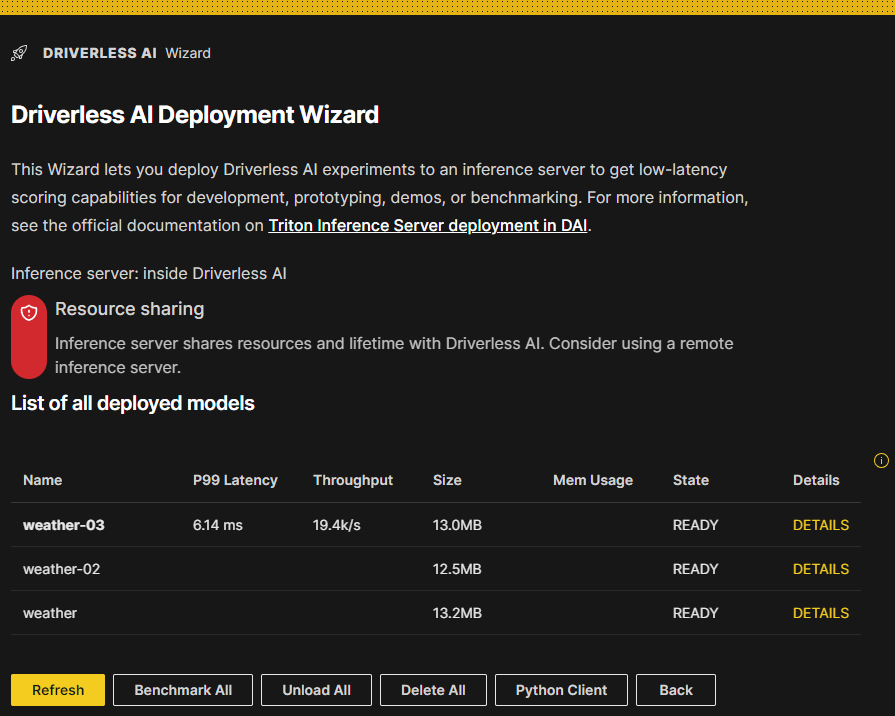

A list of all previously deployed models is displayed. Deployments can be active (ready) or inactive (unavailable). A deployment that is temporarily not in use can be unloaded to free up resources.

For each deployed model in this list, the following details are provided:

Model name: Click the name of a model to go to the completed experiment page for that model.

P99 latency: The 99th percentile (that is, an estimate of the worst case) of the latency in milliseconds when scoring one row (sequentially, one row at a time).

Throughput: The approximate number of rows that can be scored in one second when scoring many rows at a time in a batch request.

Size: The size of the MOJO pipeline on disk.

Memory usage: The size of the MOJO pipeline in memory during scoring (at the time the MOJO was created). Note that this number may change as newer MOJO runtimes are released.

State: The following list describes the various possible states of a deployment.

READY: The deployment is loaded and active (ready to make predictions).

UNLOADING: This indicates a transition state between READY and UNAVAILABLE.

UNAVAILABLE: This indicates an unloaded deployment that can be loaded again when needed.

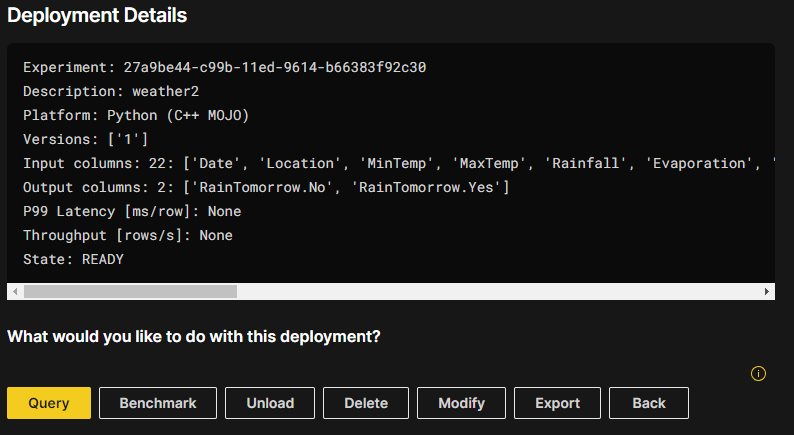

Details: Displays the name, description, platform, model version, input columns, output columns, P99 latency, throughput, and state of the deployed model.
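To make the P99 latency and throughput columns concrete, the sketch below computes both from raw measurements. It uses the nearest-rank definition of a percentile, which is an assumption: the exact method used by the built-in benchmark is not documented here, and the function names are hypothetical.

```python
from typing import Sequence


def p99_latency_ms(latencies_ms: Sequence[float]) -> float:
    """Return the 99th percentile of single-row latencies (nearest-rank method)."""
    if not latencies_ms:
        raise ValueError("at least one latency sample is required")
    ordered = sorted(latencies_ms)
    # ceil(0.99 * n), computed with integer arithmetic to avoid float rounding.
    rank = -(-99 * len(ordered) // 100)
    return ordered[rank - 1]


def throughput_rows_per_second(rows_scored: int, elapsed_seconds: float) -> float:
    """Return the number of rows scored per second for one batch request."""
    return rows_scored / elapsed_seconds
```

With 100 latency samples, the nearest-rank P99 is the 99th smallest value, so a single slow outlier does not determine the result.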

Actions available from the deployed models listing page¶

The following list describes the actions that are available from the deployed models listing page.

Load all: Loads all deployed models that are not in the “READY” state and runs a very quick benchmark test. In the context of the Triton Inference Server, loading and unloading refer to the process of adding or removing a model to or from the server’s model repository. Once a model is loaded, it is ready to serve inference requests.

Benchmark all: Runs a benchmark for all of the listed deployed models. Note that computing Shapley values is noticeably slower than computing regular predictions.

Unload all: Unloads all of the deployed models. Unloading a model from the Triton Inference Server involves removing the model from the server’s model repository, which frees up resources that can be used by other models. This lets you save memory without having to delete models. Models that have been unloaded can be loaded again.

Delete all: Deletes all of the listed deployed models. Exercise caution when using this option.

Python client: Displays instructions for downloading, installing, and using the Driverless AI Triton Python Client for Python 3.x.

Back: Return to the completed experiment page.
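The Load all behavior described above (load every deployment that is not in the READY state) can be approximated against a Triton server directly. The sketch below assumes the server exposes Triton's model repository HTTP extension, where the repository index lists entries with name and state fields and a model is loaded with a POST to /v2/repository/models/<name>/load. Whether Driverless AI uses these calls internally is not confirmed by this page, and the helper names are hypothetical.

```python
from typing import Dict, List


def models_needing_load(repository_index: List[Dict[str, str]]) -> List[str]:
    """Return the names of models whose state is anything other than READY."""
    return [
        entry["name"]
        for entry in repository_index
        if entry.get("state") != "READY"
    ]


def load_endpoint(base_url: str, model_name: str) -> str:
    """Build the URL that would be POSTed to in order to load one model."""
    return f"{base_url.rstrip('/')}/v2/repository/models/{model_name}/load"


# Hypothetical repository index, shaped like a Triton repository index response.
sample_index = [
    {"name": "model_a", "version": "1", "state": "READY"},
    {"name": "model_b", "version": "1", "state": "UNAVAILABLE"},
]
pending_urls = [
    load_endpoint("http://localhost:8000", name)
    for name in models_needing_load(sample_index)
]
```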

Actions available from the deployment Details page¶

The following list describes the actions that are available from the deployment Details page. You can access these actions by clicking DETAILS next to a specific deployed model in the list of deployed models.

Query: Creates a simple single-row query with curl that can be used to score the model with the HTTP REST API.

Benchmark: Performs simple runtime characterization of the endpoint.

Load / Unload: In the context of the Triton Inference Server, loading and unloading refer to the process of adding or removing a model to or from the server’s model repository. You can use this button to load or unload a specific model.

Delete: Unloads the model and deletes the local directory (only for DAI-internal Triton models).

Modify: Redeploys the model. You can choose to deploy any combination of Target Predictions/Shapley Values/Original Shapley Values.

Export: Lets you download a zip file of the contents of the folder inside any Triton repository, anywhere.

Back: Return to the deployed models listing page.
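The single-row query produced by the Query action targets Triton's HTTP REST API. As a rough Python equivalent, the sketch below builds a request for the standard inference endpoint (/v2/models/<name>/infer) with a JSON body of named input tensors. The input names, the datatype mapping (strings as BYTES, numbers as FP64), and the [1, 1] shape are assumptions; the curl command generated by Query remains the authoritative reference for a given deployment.

```python
import json
from typing import Dict, Tuple, Union


def build_infer_request(base_url: str,
                        model_name: str,
                        row: Dict[str, Union[str, float]]) -> Tuple[str, str]:
    """Return the URL and JSON body for scoring a single row."""
    inputs = []
    for column, value in row.items():
        # Assumed mapping: text columns as BYTES, numeric columns as FP64.
        datatype = "BYTES" if isinstance(value, str) else "FP64"
        inputs.append({
            "name": column,
            "shape": [1, 1],  # one row, one value per input column (assumption)
            "datatype": datatype,
            "data": [value],
        })
    url = f"{base_url.rstrip('/')}/v2/models/{model_name}/infer"
    return url, json.dumps({"inputs": inputs})


# Hypothetical model name and columns, for illustration only.
url, body = build_infer_request(
    "http://localhost:8000", "my_experiment", {"age": 42.0, "city": "Oslo"}
)
```

The body can then be sent with any HTTP client as a POST request with a JSON content type.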

MOJO With Java Runtime deployment options¶

The following are several options for deploying Driverless AI MOJO with Java Runtime. The links in the diagram lead to code examples and templates.

Driverless AI MOJO Java Runtime Deployment Options¶

The Java MOJO scoring pipelines can also be deployed from within the Driverless AI GUI. For more information, see Deployment options from within Driverless AI GUI.

MOJO With C++ Runtime deployment options¶

Here we list some example scenarios and platforms for deploying Driverless AI MOJO with C++ Runtime. MOJO C++ runtime can also be run directly from R/Python terminals. For more information, see Driverless AI MOJO Scoring Pipeline - C++ Runtime with Python (Supports Shapley) and R Wrappers.

Driverless AI MOJO C++ Runtime Deployment Options¶