Mac OS X

This section describes how to install, start, stop, and upgrade the Driverless AI Docker image on Mac OS X. Note that this uses regular Docker and not NVIDIA Docker.

Note: Support for GPUs and MOJOs is not available on Mac OS X.

The installation steps assume that you have a license key for Driverless AI. For information on how to obtain a license key for Driverless AI, visit https://h2o.ai/o/try-driverless-ai/. Once obtained, you will be prompted to paste the license key into the Driverless AI UI when you first log in, or you can save it as a .sig file and place it in the license folder that you will create during the installation process.

Caution:

This is an extremely memory-constrained environment for experimental purposes only. Stick to small datasets! For serious use, please use Linux.

Be aware that there are known performance issues with Docker for Mac. More information is available here: https://docs.docker.com/docker-for-mac/osxfs/#technology.

Environment

| Operating System | GPU Support? | Min Mem | Suitable for |
|---|---|---|---|
| Mac OS X | No | 16 GB | Experimentation |

Installing Driverless AI

Retrieve the Driverless AI Docker image from https://www.h2o.ai/download/.

Download and run Docker for Mac from https://docs.docker.com/docker-for-mac/install.

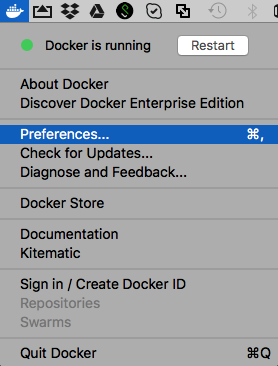

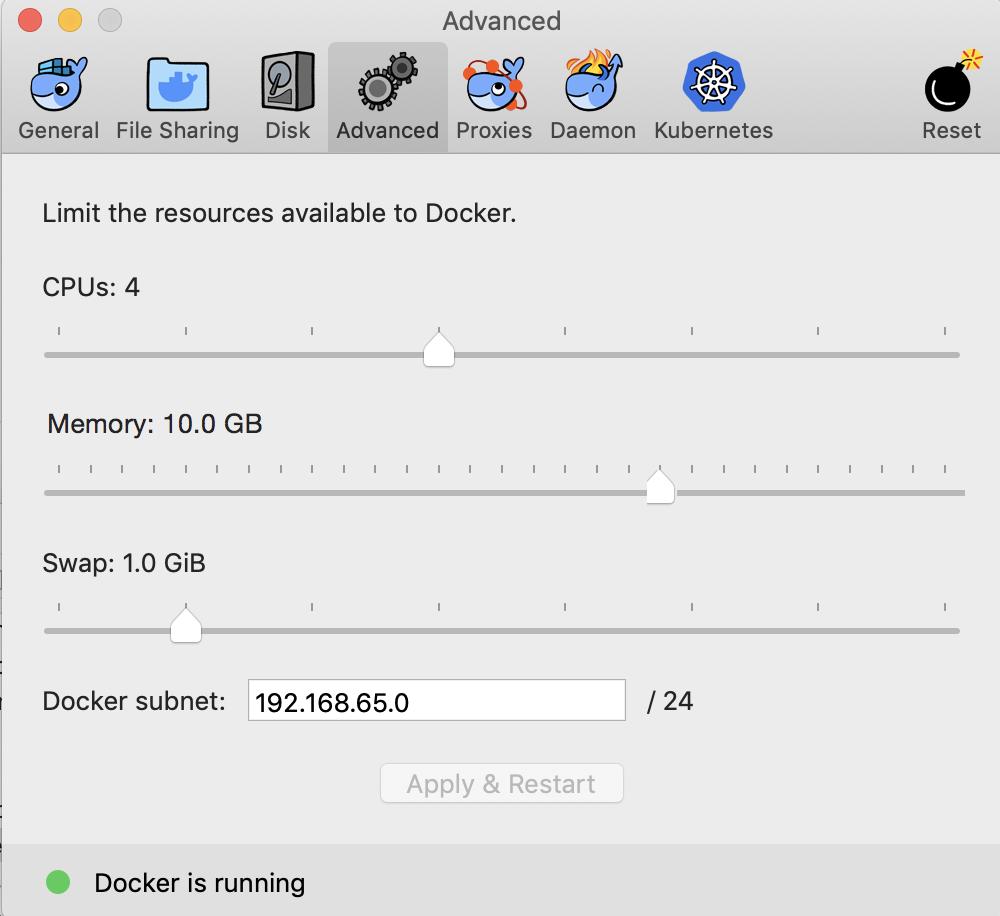

Adjust the amount of memory given to Docker to be at least 10 GB. Driverless AI won’t run at all with less than 10 GB of memory. You can optionally adjust the number of CPUs given to Docker. You will find the controls by clicking on (Docker Whale)->Preferences->Advanced as shown in the following screenshots. (Don’t forget to Apply the changes after setting the desired memory value.)

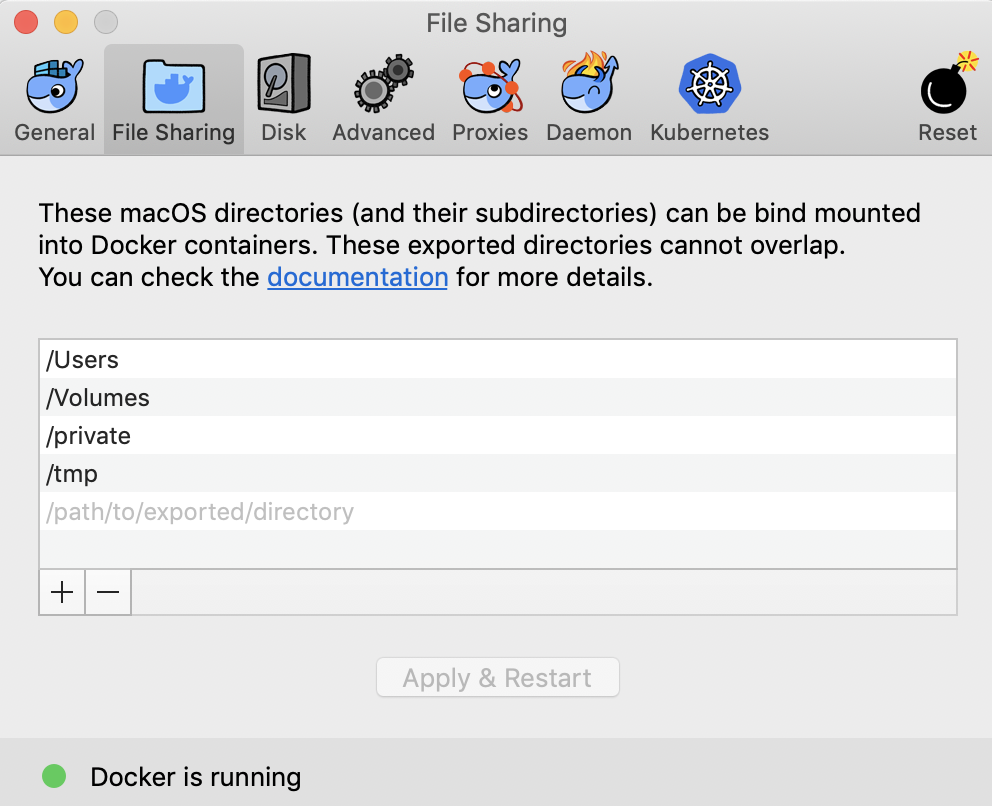
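Once the change is applied, the memory that Docker actually sees can be checked from the Terminal. The following is a minimal sketch, not part of the official procedure: it reads the MemTotal field of docker info (reported in bytes) and compares it with the 10 GB minimum stated above. The helper name mem_is_sufficient is hypothetical, and the reported total may be slightly lower than the value set in Preferences, so treat a near miss as a hint to recheck the setting rather than as a hard failure.

```shell
#!/bin/sh
# Minimum memory (in bytes) that Docker needs for Driverless AI: 10 GB.
MIN_BYTES=$((10 * 1024 * 1024 * 1024))

# Hypothetical helper: succeeds if the given byte count meets the minimum.
mem_is_sufficient() {
    [ "$1" -ge "$MIN_BYTES" ]
}

# Query the Docker daemon only if the docker CLI is installed.
if command -v docker >/dev/null 2>&1; then
    mem_bytes=$(docker info --format '{{.MemTotal}}' 2>/dev/null)
    if [ -n "$mem_bytes" ] && mem_is_sufficient "$mem_bytes"; then
        echo "Docker memory OK: $mem_bytes bytes"
    else
        echo "Docker reports ${mem_bytes:-unknown} bytes; raise the limit to at least 10 GB"
    fi
fi
```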

On the File Sharing tab, verify that your macOS directories (and their subdirectories) can be bind mounted into Docker containers. More information is available here: https://docs.docker.com/docker-for-mac/osxfs/#namespaces.

Set up a directory for the version of Driverless AI within the Terminal:

mkdir dai-2.0.0

With Docker running, open a Terminal and move the downloaded Driverless AI image to your new directory.

Change directories to the new directory, then load the image using the following command:

cd dai-2.0.0
docker load < dai-docker-ubi8-x86_64-2.0.0.tar.gz

Set up the data, log, license, and tmp directories (within the new Driverless AI directory):

mkdir data
mkdir log
mkdir license
mkdir tmp

Optionally copy data into the data directory on the host. The data will be visible inside the Docker container at /data. You can also upload data after starting Driverless AI.

Run docker images to find the image tag.

Start the Driverless AI Docker image (still within the new Driverless AI directory). In the command below, replace the tag on the last line (the part after the colon) with the image tag reported by docker images. Note that GPU support will not be available. Starting with version 1.10, the Driverless AI Docker image runs with an internal tini that is equivalent to using --init from Docker. If both are enabled in the launch command, tini prints a (harmless) warning message.

We recommend --shm-size=2g --cap-add=SYS_NICE --ulimit nofile=131071:131071 --ulimit nproc=16384:16384 in the Docker launch command. If you plan to build image auto models extensively, --shm-size=4g is recommended instead.

docker run \
    --pid=host \
    --rm \
    --shm-size=2g --cap-add=SYS_NICE --ulimit nofile=131071:131071 --ulimit nproc=16384:16384 \
    -u `id -u`:`id -g` \
    -p 12345:12345 \
    -v `pwd`/data:/data \
    -v `pwd`/log:/log \
    -v `pwd`/license:/license \
    -v `pwd`/tmp:/tmp \
    h2oai/dai-ubi8-x86_64:2.0.0-cuda11.8.0.xx

Connect to Driverless AI with your browser at http://localhost:12345.
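The server may take a little while to start answering after the container launches. As a rough sketch (the function name wait_for_dai and the default retry values are assumptions, not part of Driverless AI), the loop below polls the URL with curl from a second Terminal window until it gets any HTTP response or runs out of attempts.

```shell
# Poll a URL until it answers or the attempts run out.
# Usage: wait_for_dai URL [ATTEMPTS] [DELAY_SECONDS]
wait_for_dai() {
    url=$1
    attempts=${2:-30}
    delay=${3:-5}
    i=0
    while [ "$i" -lt "$attempts" ]; do
        # --silent hides progress output; --output discards the page body.
        # curl exits 0 on any HTTP response and non-zero if it cannot connect.
        if curl --silent --output /dev/null "$url"; then
            echo "Driverless AI is answering at $url"
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    echo "No response from $url after $attempts attempts" >&2
    return 1
}
```

For example, wait_for_dai http://localhost:12345 10 3 tries ten times, three seconds apart, and returns a non-zero status if the port never answers.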

Stopping the Docker Image

To stop the Driverless AI Docker image, type Ctrl + C in the Terminal (Mac OS X) or PowerShell (Windows 10) window that is running the Driverless AI Docker image.
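If the window that launched the container is no longer available, the container can also be stopped from another Terminal. The sketch below is one possible approach under stated assumptions: the function names are hypothetical, and the image reference passed in must match the repository and tag shown by docker images. It uses docker ps with the ancestor filter to find running containers created from that image, then passes their IDs to docker stop.

```shell
# Print the IDs of running containers started from the given image.
dai_container_ids() {
    docker ps --quiet --filter "ancestor=$1"
}

# Stop every running container started from the given image.
stop_dai() {
    ids=$(dai_container_ids "$1")
    if [ -z "$ids" ]; then
        echo "No running container found for image $1"
        return 0
    fi
    # $ids is intentionally unquoted so that each ID becomes its own argument.
    docker stop $ids
}
```

A call would look like stop_dai h2oai/dai-ubi8-x86_64:TAG, with TAG replaced by your image tag. Because the container is launched with --rm, Docker removes it once it has stopped.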

Upgrading the Docker Image

This section provides instructions for upgrading Driverless AI versions that were installed in a Docker container. These steps ensure that existing experiments are saved.

WARNING: Experiments, MLIs, and MOJOs reside in the Driverless AI tmp directory and are not automatically upgraded when Driverless AI is upgraded.

Build MLI models before upgrading.

Build MOJO pipelines before upgrading.

Stop Driverless AI and make a backup of your Driverless AI tmp directory before upgrading.

If you did not build MLI on a model before upgrading Driverless AI, then you will not be able to view MLI on that model after upgrading. Before upgrading, be sure to run MLI jobs on models that you want to continue to interpret in future releases. If that MLI job appears in the list of Interpreted Models in your current version, then it will be retained after upgrading.

If you did not build a MOJO pipeline on a model before upgrading Driverless AI, then you will not be able to build a MOJO pipeline on that model after upgrading. Before upgrading, be sure to build MOJO pipelines on all desired models and then back up your Driverless AI tmp directory.

Note: Stop Driverless AI if it is still running.
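With Driverless AI stopped, the tmp directory can be backed up in whatever way suits your environment. The following is a minimal sketch that archives it with tar; the function name backup_dai_tmp, the archive naming scheme, and the backup location are all assumptions made for illustration.

```shell
# Archive the tmp directory of a Driverless AI install directory.
# Usage: backup_dai_tmp INSTALL_DIR BACKUP_DIR
backup_dai_tmp() {
    install_dir=$1
    backup_dir=$2
    stamp=$(date +%Y%m%d-%H%M%S)
    archive="$backup_dir/dai-tmp-$stamp.tar.gz"

    if [ ! -d "$install_dir/tmp" ]; then
        echo "No tmp directory in $install_dir" >&2
        return 1
    fi
    mkdir -p "$backup_dir" || return 1

    # -C switches into the install directory so the archive stores relative paths (tmp/...).
    tar -czf "$archive" -C "$install_dir" tmp || return 1

    # Print the archive path so the caller can record or verify it.
    echo "$archive"
}
```

For example, backup_dai_tmp dai_rel_1.8.4 backups writes a timestamped archive under backups and prints its path. Listing the archive with tar -tzf before upgrading is a cheap way to confirm that the experiments were captured.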

Upgrade Steps

SSH into the IP address of the machine that is running Driverless AI.

Set up a directory for the version of Driverless AI on the host machine:

# Set up directory with the version name
mkdir dai-2.0.0

# cd into the new directory
cd dai-2.0.0

Retrieve the Driverless AI package from https://www.h2o.ai/download/ and add it to the new directory.

Load the Driverless AI Docker image inside the new directory:

# Load the Driverless AI docker image
docker load < dai-docker-ubi8-x86_64-2.0.0.tar.gz

Copy the data, log, license, and tmp directories from the previous Driverless AI directory to the new Driverless AI directory:

# Copy the data, log, license, and tmp directories on the host machine
# (run from the directory that contains both dai_rel_1.8.4 and dai-2.0.0)
cp -a dai_rel_1.8.4/data dai-2.0.0/data
cp -a dai_rel_1.8.4/log dai-2.0.0/log
cp -a dai_rel_1.8.4/license dai-2.0.0/license
cp -a dai_rel_1.8.4/tmp dai-2.0.0/tmp

At this point, your experiments from the previous versions will be visible inside the Docker container.

Use docker images to find the new image tag.

Start the Driverless AI Docker image.

Connect to Driverless AI with your browser at http://Your-Driverless-AI-Host-Machine:12345.
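The start step in the upgrade reuses the launch command from the installation section. As a sketch only, that command can be wrapped in a small function that takes the tag as an argument. The function name start_dai is hypothetical, and the repository name h2oai/dai-ubi8-x86_64 is taken from the installation example, so confirm it against the output of docker images. Run the function from inside the new Driverless AI directory, because the volume mounts are resolved relative to the current directory.

```shell
# Start Driverless AI from the current directory using the given image tag.
# Usage: start_dai TAG
start_dai() {
    tag=$1
    if [ -z "$tag" ]; then
        echo "usage: start_dai TAG" >&2
        return 1
    fi
    # Flags mirror the launch command in the installation steps.
    docker run \
        --pid=host \
        --rm \
        --shm-size=2g --cap-add=SYS_NICE \
        --ulimit nofile=131071:131071 --ulimit nproc=16384:16384 \
        -u "$(id -u):$(id -g)" \
        -p 12345:12345 \
        -v "$(pwd)/data:/data" \
        -v "$(pwd)/log:/log" \
        -v "$(pwd)/license:/license" \
        -v "$(pwd)/tmp:/tmp" \
        "h2oai/dai-ubi8-x86_64:$tag"
}
```

Quoting the volume paths keeps the command working when the directory path contains spaces, which is common on macOS.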