Dask Multinode Training (Alpha)

Driverless AI can be configured to run in a multinode worker mode where each worker has a Dask CPU worker and (if the worker has GPUs) a Dask GPU worker. The main node in this setup has a Dask scheduler. This document describes the Dask training process and how to configure it.

Before setting up Dask multinode training, you must configure Redis Multinode training in Driverless AI.

Note: For Dask multinode examples, see Dask Multinode examples.

Understanding Dask Multinode Training

Dask multinode training in Driverless AI can be used to run a single experiment that trains across the multinode cluster. It is effective in situations where you need to run and complete a single experiment with large amounts of data or a large hyper-parameter space search. The Dask distributed machines can be CPU only or CPU + GPU, with Dask experiments using resources accordingly.

For more information on Dask multinode design concepts, see https://dask.org/.

Notes:

Dask multinode training in Driverless AI is currently in a preview stage. If you are interested in using Dask multinode configurations, contact support@h2o.ai.

Dask multinode training requires the transfer of data between several different workers. For example, an experiment that uses the Dask cluster must distribute its data among the cluster workers for XGBoost training or for an Optuna hyper-parameter search.

Dask tasks are scheduled on a first in, first out (FIFO) basis.

Users can enable Dask multinode training on a per-experiment basis from the expert settings.

If an experiment uses the Dask cluster (the default is true if applicable), then that single experiment runs on the entire multinode cluster. For this reason, using a large number of commodity-grade machines is not useful in the context of Dask multinode.

By default, Dask models are not selected because they can be less efficient for small data than non-Dask models. Set show_warnings_preview = true in the config.toml to display warnings whenever a user does not select Dask models and the system is capable of using them.

For more information on queuing in Driverless AI, see Experiment Queuing In Driverless AI.

Dask Multinode Setup Example

This section provides supplemental instructions for enabling Dask on the multinode cluster. Currently, enabling Dask multinode requires setting up Redis multinode first. For more information on setting up and starting Redis multinode on Driverless AI, see the Redis multinode training documentation.

VPC Settings

In the VPC settings, add inbound rules that allow TCP connections on the ports set by dask_server_port and dask_cuda_server_port (if using GPUs). If you want Dask dashboard access to monitor Dask operations, also include dask_dashboard_port and dask_cuda_dashboard_port (if using GPUs). To use LightGBM with Dask, also allow lightgbm_listen_port.
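Once the inbound rules are in place, a quick reachability check from a worker node can confirm that the ports are actually open. The following is a minimal sketch using only the Python standard library. The helper names and the port numbers are illustrative assumptions (8786 and 8787 are Dask's own usual defaults, not necessarily the values in your config.toml), and a port only reports as open when a service is listening on it.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


# Hypothetical values: substitute the ports set in your own config.toml.
PORTS_TO_CHECK = {
    "dask_server_port": 8786,
    "dask_dashboard_port": 8787,
}


def check_ports(host: str, ports: dict) -> dict:
    """Map each setting name to whether its port is reachable on host."""
    return {name: port_is_open(host, port) for name, port in ports.items()}
```

For example, calling check_ports("<host_ip>", PORTS_TO_CHECK) from a worker node returns a dictionary such as {"dask_server_port": True, ...} once the main node is running and the rules allow the traffic.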

Edit the Driverless AI config.toml

After Driverless AI is installed, edit the following config option in the config.toml file.

# Dask settings -- set the IP address of the Dask server. Same as the IP of the main Driverless AI node, and usually same as the Redis/MinIO IP

dask_server_ip = "<host_ip>"

For the dask_server_ip parameter, Driverless AI automatically tries the Redis, MinIO, and local IP addresses to see if it can find the Dask scheduler. In such a case, the dask_server_ip parameter does not have to be set.

On EC2 systems, if the main server is http://ec2-52-71-252-183.compute-1.amazonaws.com:12345/, it is recommended to use the nslookup-resolved IP instead of the EC2 IP due to the way Dask and XGBoost (with rabit) operate. For example, nslookup ec2-52-71-252-183.compute-1.amazonaws.com gives 10.10.4.103. Redis, MinIO, and Dask subsequently use that as the IP in the config.toml file. If dask_server_ip is not specified, its value is automatically inferred from Redis or MinIO.
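The nslookup step can also be scripted. The sketch below uses the standard library to resolve a hostname, check whether the result is a private address, and format the matching config.toml line. The function names are illustrative assumptions, and the resolved address depends on where the lookup runs, so run it on a machine inside the same network as the cluster.

```python
import ipaddress
import socket


def resolve_ip(hostname: str) -> str:
    """Resolve a hostname to an IPv4 address string, similar to nslookup."""
    return socket.gethostbyname(hostname)


def is_private(ip: str) -> bool:
    """Return True if the address is in a private (internal) range."""
    return ipaddress.ip_address(ip).is_private


def dask_server_ip_line(ip: str) -> str:
    """Format the config.toml line for the given IP address."""
    return f'dask_server_ip = "{ip}"'
```

For the example above, resolve_ip("ec2-52-71-252-183.compute-1.amazonaws.com") would be called on the main node, and the result passed to dask_server_ip_line to produce the line to place in config.toml.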

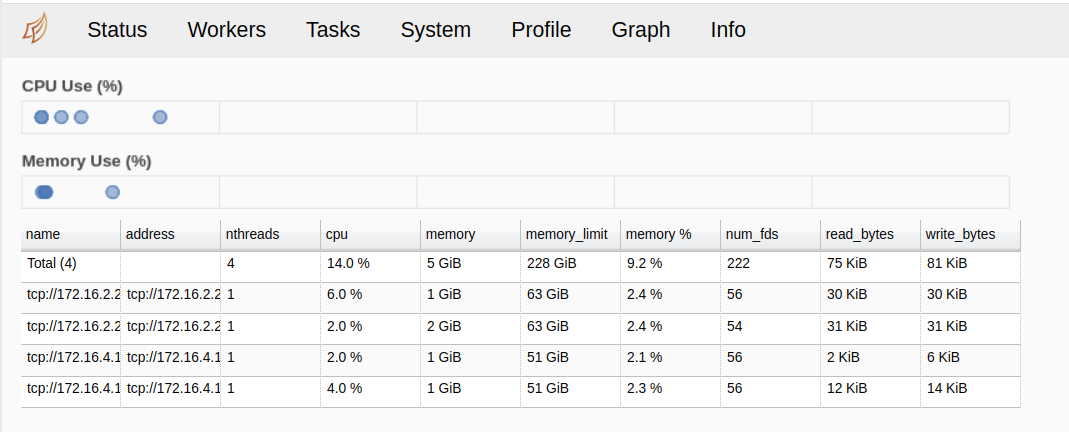

Once the worker node starts, use the Driverless AI server IP and Dask dashboard port(s) to view the status of the Dask cluster.
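As an alternative to the dashboard, the scheduler can be queried from Python. This is a minimal sketch, assuming that the dask.distributed package is installed in a version compatible with the cluster, that the scheduler accepts connections from your machine, and that the protocol is "tcp". The helper names and the example ports are illustrative, not part of Driverless AI.

```python
def scheduler_address(ip: str, port: int, protocol: str = "tcp") -> str:
    """Build the scheduler address from dask_server_ip and dask_server_port."""
    return f"{protocol}://{ip}:{port}"


def dashboard_url(ip: str, port: int) -> str:
    """Build the Dask dashboard status page URL from the dashboard port."""
    return f"http://{ip}:{port}/status"


def worker_threads(address: str) -> dict:
    """Return a mapping of worker address to thread count for the cluster."""
    # Imported here so the helpers above work without Dask installed.
    from dask.distributed import Client

    with Client(address, timeout=10) as client:
        return client.nthreads()
```

For example, worker_threads(scheduler_address("<host_ip>", 8786)) should list one entry per connected Dask worker, where 8786 is a placeholder for the configured dask_server_port.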

Description of Configuration Attributes

General Dask Settings

enable_dask_cluster: Specifies whether to enable a Dask worker on each multinode worker.

dask_server_ip: IP address used by server for Dask and Dask CUDA communications.

CPU Cluster Dask Settings

dask_server_port: Port used by server for Dask communications.

dask_dashboard_port: Dask dashboard port for Dask diagnostics.

dask_cluster_kwargs: Set Dask CUDA/RAPIDS cluster settings for single node workers.

dask_scheduler_env: Set Dask scheduler environment variables.

dask_scheduler_options: Set Dask scheduler command-line options.

dask_worker_env: Set Dask worker environment variables.

dask_worker_options: Set Dask worker command-line options.

dask_protocol: Protocol used for Dask communications.

dask_worker_nprocs: Number of processes per Dask worker.

dask_worker_nthreads: Number of threads per process for Dask.

GPU CUDA Cluster Dask Settings

dask_cuda_server_port: Port used by server for Dask CUDA communications.

dask_cuda_dashboard_port: Dask dashboard port for dask_cuda diagnostics.

dask_cuda_cluster_kwargs: Set Dask CUDA/RAPIDS cluster settings for single node workers.

dask_cuda_scheduler_env: Set Dask CUDA scheduler environment variables.

dask_cuda_scheduler_options: Set Dask CUDA scheduler command-line options.

dask_cuda_worker_options: Set Dask CUDA worker options.

dask_cuda_worker_env: Set Dask CUDA worker environment variables.

dask_cuda_protocol: Protocol used for Dask CUDA communications.

dask_cuda_worker_nthreads: Number of threads per process for dask_cuda.

Other Cluster Dask Settings

lightgbm_listen_port: LightGBM local listening port when using Dask with LightGBM.

Example: User Experiment Dask Settings

To enable XGBoost GBM on a Dask GPU cluster:

Set num_gpus_for_prediction to the number of GPUs on each worker node.

Go to the expert settings and, under the Recipes tab, click Include specific models and ensure that the only selected model is XGBoostGBMDaskModel.

For more configuration options, see User Experiment Dask Settings.

Notes:

The same steps can be used for a local Dask cluster on a single node with multiple GPUs.

If you have a Dask cluster but only want to use the worker node’s GPUs, set use_dask_cluster to False.

If you have a Dask cluster or a single Dask node available as a single user, you can set exclusive_mode to “max” in the expert settings to maximize usage of the workers in the cluster.
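The settings named in this example can be collected in one place and rendered as config.toml-style lines. The following is a minimal sketch: the helper functions are illustrative assumptions rather than part of Driverless AI, the GPU count of 2 is a hypothetical value, and model selection (XGBoostGBMDaskModel) is still done from the Recipes tab as described above.

```python
def to_toml_line(key: str, value) -> str:
    """Render one key/value pair in TOML syntax (bool, str, or number)."""
    # bool must be checked before numbers because bool is a subclass of int.
    if isinstance(value, bool):
        return f"{key} = {'true' if value else 'false'}"
    if isinstance(value, str):
        return f'{key} = "{value}"'
    return f"{key} = {value}"


def to_toml(settings: dict) -> str:
    """Render all settings, one TOML line per entry, in insertion order."""
    return "\n".join(to_toml_line(k, v) for k, v in settings.items())


# Hypothetical values for a cluster whose worker nodes each have 2 GPUs.
dask_gpu_settings = {
    "num_gpus_for_prediction": 2,
    "use_dask_cluster": True,
    "exclusive_mode": "max",
}

print(to_toml(dask_gpu_settings))
```

Setting use_dask_cluster to False in the same dictionary gives the local, single-node variant described in the notes.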

User Experiment Dask Settings

use_dask_cluster: Whether to use Dask cluster (True) or only local cluster for multi-GPU case (False).

enable_xgboost_rapids: Enable RAPIDS-cudf extensions to XGBoost GBM/Dart. (1)

enable_xgboost_gbm_dask: Enable dask_cudf (multi-GPU) XGBoost GBM. (2)

enable_lightgbm_dask: Enable Dask (multi-node) LightGBM. (Experimental) (2)

enable_xgboost_dart_dask: Enable dask_cudf (multi-GPU) XGBoost Dart. (2)

enable_hyperopt_dask: Enable dask (multi-node/multi-GPU) hyperparameter search. (3)

Notes:

(1) Automatically enabled if num_gpus_for_prediction > 0.

(2) See also the Recipes tab and “Include specific models”.

(3) See also num_inner_hyperopt_trials_prefinal and num_inner_hyperopt_trials_final. Currently only fully supported by XGBoost.