Feature Count Control

This page describes how to control feature counts during the feature selection process in H2O Driverless AI (DAI).

Original Feature Control

To control the count of original features when creating an experiment, use one of the following methods:

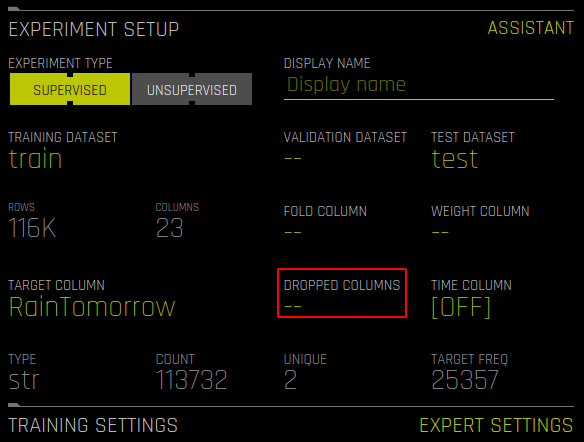

On the Experiment Setup page, click Dropped Columns to manually select specific columns to drop.

Use the Features to Drop Expert Setting to enter a list of features to drop. The list of features must be formatted as follows:

["V1", "V2", "V3"]

This method is convenient if you have a long list of features that need to be dropped. For information on how to use Expert Settings in DAI, see Understanding Expert Settings.
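As a minimal sketch, the same list could be supplied as a TOML override instead of through the UI. This assumes that the config.toml key behind the Features to Drop Expert Setting is cols_to_drop, which this page does not state, so verify the key name against your DAI version. The column names are placeholders.

```toml
# Assumption: cols_to_drop is the key behind the "Features to Drop" Expert Setting.
# "V1", "V2", and "V3" are placeholder column names taken from the format example.
cols_to_drop = ["V1", "V2", "V3"]
```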

If you are unsure which original columns are best, you can let DAI select the best features by setting the following configuration options. These options use DAI’s feature selection (FS) by permutation importance to determine which original features are beneficial to keep and which to remove because they negatively impact the model.

max_orig_cols_selected: Specify the maximum number of columns to be selected from the set of original columns using FS by permutation importance.

max_orig_numeric_cols_selected: This option has the same functionality as max_orig_cols_selected, but for numeric columns.

max_orig_nonnumeric_cols_selected: This option has the same functionality as max_orig_cols_selected, but for non-numeric columns.
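As a rough illustration, the three options might be set together in config.toml as shown below. The numeric limits are arbitrary placeholder values chosen for this sketch. They are not defaults or recommendations.

```toml
# Illustrative values only; choose limits that suit your dataset.
max_orig_cols_selected = 50             # limit across all original columns
max_orig_numeric_cols_selected = 30     # same idea, numeric columns only
max_orig_nonnumeric_cols_selected = 20  # same idea, non-numeric columns only
```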

To view a report about original features without taking any action, set orig_features_fs_report = true.

In general, FS can be controlled by setting the following parameters:

max_rows_fs

fs_orig_cols_selected_simple_factor

use_native_cats_for_lgbm_fs

predict_shuffle_inside_model

fs_data_vary_for_interpretability

fs_data_frac

statistical_threshold_data_size_large

stabilize_fs

FS is also affected by TOML values like max_int_as_cat_uniques. Categorical original features must be transformed before their usefulness can be measured, so the nature of the transformation can matter.

If the strategy is FS (as it is for high interpretability dial settings), DAI uses FS to remove poor features that hurt the model. This can be fine-tuned with the following parameters:

fs_orig_cols_selected

fs_orig_numeric_cols_selected

fs_orig_nonnumeric_cols_selected

Nominally, only categorical features are limited by count; otherwise, any features that actually hurt the model are removed. In some cases, such an “FS individual” might do better than the other individuals. However, FS is a greedy algorithm and might remove too many features, whereas the genetic algorithm might still find ways to use those features in transformed form.
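A hedged sketch of how the FS individual might be tuned in config.toml follows. The values are placeholders picked for illustration, and the comments only restate the naming pattern of the options listed above. Confirm the exact semantics in the configuration reference for your DAI version.

```toml
# Illustrative values only; these are not defaults.
fs_orig_cols_selected = 100            # original columns considered for the FS individual
fs_orig_numeric_cols_selected = 60     # numeric-only counterpart
fs_orig_nonnumeric_cols_selected = 40  # non-numeric-only counterpart
```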

Transformed Feature Control

For transformed features, the Experiment Setup page and Expert Settings control the genetic algorithm (GA) that decides how many features should be present. In some cases, however, too few or too many features are made.

To control the number of transformed features that are made during an experiment, use the nfeatures_max and ngenes_max settings. In ngenes_max, a gene refers to a transformer; in nfeatures_max, a feature refers to a transformed feature. Each setting imposes a limit beyond which transformers or transformed features are removed, starting with those that have the lowest variable importance.

In some cases, specifying nfeatures_max and ngenes_max may be sufficient to get a restricted model. However, the best practice when using these settings is to first run an experiment without specifying any restrictions, and then retrain the final pipeline with the restrictions enabled. You can retrain the final pipeline from the completed experiment page by clicking Tune Experiment > Retrain / Refit > From Final Checkpoint. For more information on retraining the final pipeline, see Retrain / Refit.
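For instance, the restrictions applied when retraining the final pipeline might look like the fragment below. The values 20 and 10 are arbitrary examples for this sketch, not defaults.

```toml
# Illustrative limits, applied when retraining from the final checkpoint.
nfeatures_max = 20  # keep at most 20 transformed features
ngenes_max = 10     # keep at most 10 transformers (genes)
```

Following the best practice described above, these lines would be left out of the first, unrestricted experiment and added only for the retrain.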

To force DAI to add more transformations, use the ngenes_min parameter. This can be useful if you want DAI to search more actively through all of the potential permutations of transformers and input features. For example, if you selected only target encoding and the dataset has only four input features, it may take too long to search through all of the potential permutations. In this case, increasing the value of the ngenes_min parameter forces DAI to search more actively, at the cost of more features per model.
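A minimal sketch of raising the lower bound is shown here. The value 8 is a placeholder chosen for illustration; a suitable value depends on how many transformers and input features are available.

```toml
# Illustrative value only: require at least 8 transformers (genes) per model.
ngenes_min = 8
```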

DAI automatically sets limits on the number of features that are made. To override the default behavior, use the following parameters:

nfeatures_max_threshold

limit_features_by_interpretability

features_allowed_by_interpretability

varimp_threshold_at_interpretability_10

stabilize_varimp

stabilize_features

rdelta_percent_score_penalty_per_feature_by_interpretability

feature_cost_mean_interp_for_penalty

features_cost_per_interp

apply_featuregene_limits_after_tuning

remove_scored_0gain_genes_in_postprocessing_above_interpretability

remove_scored_0gain_genes_in_postprocessing_above_interpretability_final_population

remove_scored_by_threshold_genes_in_postprocessing_above_interpretability_final_population

You can also control the number of input features a transformer gets by using the following parameters:

fixed_feature_interaction_depth

max_feature_interaction_depth

feature_engineering_effort

enable_genetic_algorithm

(For example, to create basic features with no tuning or evolution, set enable_genetic_algorithm='off'.)
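The fragment below sketches how two of these parameters might be written in config.toml. The first line comes directly from the note above. The second uses a placeholder value, and its comment reflects only what this page says about the parameter group. Either line can be used on its own.

```toml
# From the note above: basic features only, with no tuning or evolution.
enable_genetic_algorithm = 'off'

# Illustrative value; assumed to cap how many input features a transformer receives.
max_feature_interaction_depth = 2
```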

Individuals Control

You can control the number or type of individuals that are tuned or evolved by using the following config.toml parameters:

parameter_tuning_num_models

fixed_num_individuals
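As a hedged illustration, both parameters might be set as follows. The values are placeholders, and this page does not describe the exact meaning of each value (for example, how special values such as zero or negative numbers are interpreted), so check the configuration reference before relying on them.

```toml
# Illustrative values only; semantics are assumed from the parameter names.
parameter_tuning_num_models = 4  # models considered during parameter tuning
fixed_num_individuals = 4        # individuals evolved by the genetic algorithm
```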

Sample Use Case

The following is a sample use case for controlling feature counts.

Example:

You want to limit the number of features used for scoring to 14.

Solution A:

For transformed features, set nfeatures_max = 14 in the Expert Settings window.

For original features, set the following parameters:

max_orig_cols_selected

max_orig_numeric_cols_selected

max_orig_nonnumeric_cols_selected
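Solution A could be sketched in config.toml roughly as follows. Only nfeatures_max = 14 comes from the text above. The limits on original columns are assumptions chosen so that no more than 14 original columns are selected, and the numeric and non-numeric split is arbitrary.

```toml
# From the solution above: at most 14 transformed features.
nfeatures_max = 14

# Assumed values for this sketch; adjust the split to match your data.
max_orig_cols_selected = 14
max_orig_numeric_cols_selected = 10
max_orig_nonnumeric_cols_selected = 4
```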

Solution B:

Without changing any parameters, let DAI complete the experiment. After the experiment is complete, inspect the ensemble_features_orig files in the Experiment Summary to see which original features were not important, then decide whether to drop more of them by tuning the experiment and retraining the final pipeline. (You can also choose to refit from the best model for an even closer match to the original experiment.) On the Experiment Setup page, use the Dropped Columns option to select and drop the columns that had low importance according to the parent experiment. This causes the transformers that needed the dropped original features to be pruned automatically. The resulting model benefits from a more intelligent process of deciding which original features are good than the greedier FS by permutation importance.