Completed Experiment Page

The following sections describe the completed experiment page.

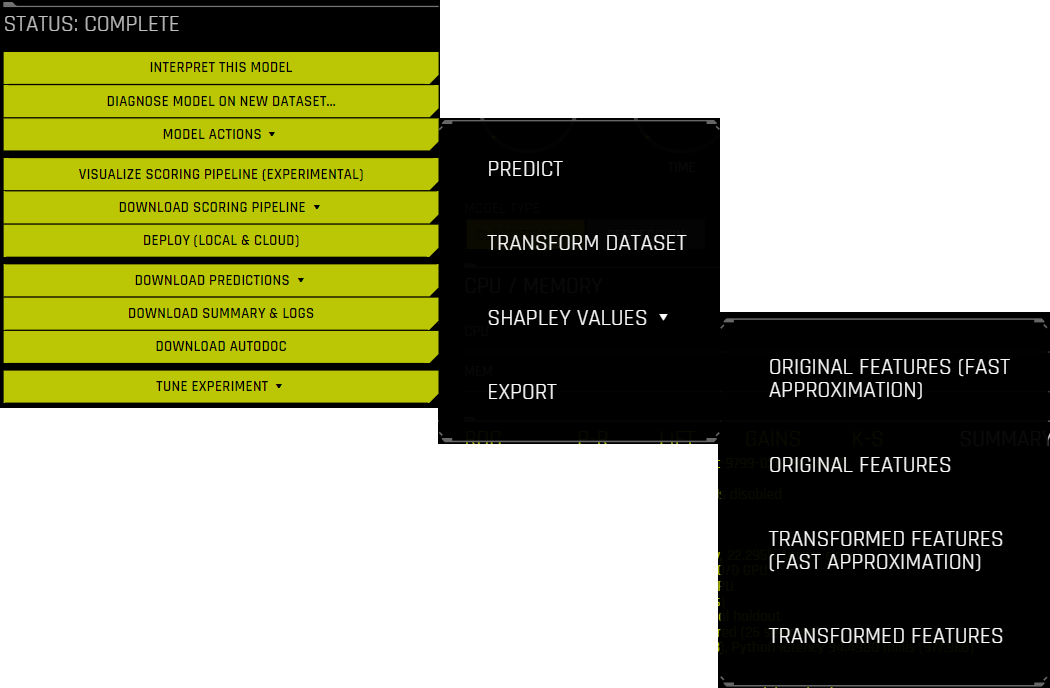

Completed Experiment Actions

The following actions are available after an experiment's status changes from Running to Complete.

Interpret This Model drop-down: To create an interpretation of your model with the default settings, click Interpret this model > With default settings. To create an interpretation with custom settings of your choosing, click Interpret this model > With custom settings. If an interpretation of the model already exists, you can view it by clicking Interpret this model > Show most recent interpretation. For more information, see MLI Overview.

Note

This feature is not supported for Image or multiclass Time Series experiments.

Diagnose Model on New Dataset: For more information, see Model Performance on Another Dataset.

Model Actions drop-down:

Analyze results: Opens the Experiment Results Wizard. This wizard is currently in beta.

Predict: See Scoring on Another Dataset.

Transform Dataset: See Transform dataset.

Fit & Transform Dataset: See Fit and transform dataset. (Not available for Time Series experiments.)

Shapley Values drop-down: Download Shapley values for original or transformed features. Driverless AI calls the XGBoost and LightGBM SHAP functions to get contributions for transformed features. Shapley values for original features are approximated from those of the transformed features by evenly splitting (naive method) each transformed feature's contribution among the original features that contribute to it. For more information, see Shapley values in DAI. Select a Fast Approximation option to make Shapley predictions using only a single fold and model from all of the available folds and models in the ensemble. For more information on the fast approximation options, refer to the fast_approx_num_trees and fast_approx_do_one_fold_one_model config.toml settings. The following options are available:

Original Features (Fast Approximation)

Original Features

Transformed Features (Fast Approximation)

Transformed Features
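The naive split described above can be sketched as follows. This is a minimal, hypothetical illustration rather than Driverless AI's code: the feature names, the `sources` mapping from each transformed feature to the original features it was built from, and the contribution values are all invented. In practice, per-row contributions for transformed features come from the model's SHAP output (for example, XGBoost's `pred_contribs=True` or LightGBM's `pred_contrib=True` prediction options).

```python
from collections import defaultdict


def naive_original_shapley(transformed_contribs, sources):
    """Approximate original-feature Shapley values for one row.

    Each transformed feature's contribution is split evenly (naive method)
    among the original features listed as its sources, then summed per
    original feature. Because every contribution is fully redistributed,
    the row's total contribution is unchanged.
    """
    original = defaultdict(float)
    for feature, contrib in transformed_contribs.items():
        parents = sources[feature]
        share = contrib / len(parents)
        for name in parents:
            original[name] += share
    return dict(original)


# Hypothetical transformed features and contributions for a single row.
transformed = {"age": 0.30, "age_x_income": 0.20, "income_bin": -0.10}
sources = {
    "age": ["age"],
    "age_x_income": ["age", "income"],
    "income_bin": ["income"],
}
print(naive_original_shapley(transformed, sources))
```

Here the interaction feature `age_x_income` contributes 0.20, so `age` and `income` each receive 0.10; the result is roughly 0.4 for `age` and 0.0 for `income`.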

Export: Export the experiment. For more information, see Exporting and Importing Experiments.

Visualize Scoring Pipeline: View a visualization of the experiment scoring pipeline. For more information, refer to Visualizing the Scoring Pipeline.

Download Scoring Pipeline drop-down:

Download Python Scoring Pipeline: Download a standalone Python scoring pipeline for H2O Driverless AI. For more information, refer to Driverless AI Standalone Python Scoring Pipeline.

Download MOJO Scoring Pipeline: Download a standalone MOJO (Model Object, Optimized) scoring pipeline. For more information, refer to MOJO Scoring Pipelines. (This option is not available for TensorFlow or RuleFit models.)

Note

If scoring pipelines were not built as part of the experiment, then options to build the Python and MOJO scoring pipelines are displayed.

Deploy: For information on deploying DAI models, see Deploy Driverless AI models.

Download Predictions: For regression experiments, the output includes predictions with lower and upper bounds. For classification experiments, the output includes the probability for each class and the labels created by the threshold_scorer. For binary problems, F1 is the default threshold_scorer: if a validation set is provided, the labels are created using the threshold that maximizes F1 on the validation set. When cross-validation is performed, the labels are created using the mean of the max-F1 thresholds from the internal validation folds. For multiclass problems, the labels are created using argmax.

Training Predictions (Holdout Data Only): In CSV format; available if a validation set was not provided. This file contains predictions only for the test (holdout) part of the dataset, that is, the data that was set aside for testing during the classic training/test split. It is provided for evaluation purposes.

Validation Set Predictions: In CSV format, available if a validation set was provided.

Test Set Predictions: In CSV format, available if a test dataset is used.
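The labeling rules for classification predictions can be sketched in plain Python. This is a minimal illustration, not Driverless AI's implementation: the candidate thresholds (the observed probabilities), the `>=` comparison, the function names, and the toy data are assumptions made for this example.

```python
def max_f1_threshold(y_true, probs):
    """Return the candidate threshold that maximizes F1 on one labeled set.

    Assumption: candidates are the observed probabilities, and a row is
    labeled positive when its probability is >= the threshold.
    """
    best_t, best_f1 = 0.5, -1.0
    for t in sorted(set(probs)):
        tp = sum(1 for y, p in zip(y_true, probs) if p >= t and y == 1)
        fp = sum(1 for y, p in zip(y_true, probs) if p >= t and y == 0)
        fn = sum(1 for y, p in zip(y_true, probs) if p < t and y == 1)
        f1 = 2 * tp / (2 * tp + fp + fn) if tp else 0.0
        if f1 > best_f1:
            best_t, best_f1 = t, f1
    return best_t


def cv_threshold(folds):
    """Cross-validation case: mean of the max-F1 thresholds of the folds."""
    thresholds = [max_f1_threshold(y, p) for y, p in folds]
    return sum(thresholds) / len(thresholds)


def binary_labels(probs, threshold):
    """Turn positive-class probabilities into 0/1 labels."""
    return [1 if p >= threshold else 0 for p in probs]


def multiclass_labels(class_probs, classes):
    """Multiclass case: pick the class with the highest probability (argmax)."""
    return [classes[max(range(len(row)), key=row.__getitem__)] for row in class_probs]


# Toy data, invented for illustration only.
y_valid = [0, 0, 1, 1, 1]
p_valid = [0.1, 0.4, 0.35, 0.8, 0.9]
t = max_f1_threshold(y_valid, p_valid)
print(t, binary_labels(p_valid, t))

folds = [([0, 1], [0.2, 0.6]), ([0, 1], [0.3, 0.7])]
print(cv_threshold(folds))

print(multiclass_labels([[0.2, 0.5, 0.3], [0.6, 0.1, 0.3]], ["a", "b", "c"]))
```

With the sample validation data, the max-F1 threshold is 0.35, so every row with a probability of at least 0.35 is labeled 1. In the cross-validation case, the per-fold thresholds (0.6 and 0.7) are averaged to about 0.65.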

Download Summary & Logs: Download a ZIP file containing the following files. For more information, refer to the Experiment Summary section.

Experiment logs (regular and anonymized)

A summary of the experiment

The experiment features along with their relative importance

The Custom Individual Recipe for the experiment

Ensemble information

An experiment preview

A Word version of the auto-generated report (AutoDoc) for the experiment

A target transformations tuning leaderboard

A tuning leaderboard

Download AutoDoc: Download an auto-generated report for the experiment as a Word (DOCX) document. This file is also available in the Experiment Summary ZIP file. Note that this option is not available for deprecated models. For more information, see Using AutoDoc.

Tune Experiment drop-down: Tune the completed experiment by using the following options:

New / Continue: Select one of the following options:

With same settings: Create a new experiment that copies the setup of the original experiment. Selecting this option takes you to the Experiment Setup page, where you can change any parameter of the original experiment.

From last checkpoint: Create a new experiment that copies the setup of the original experiment and continues from the last iteration’s checkpoint of models and features. Selecting this option takes you to the Experiment Setup page, where you can change any parameter of the original experiment.

Retrain / Refit: Retrain the experiment’s final pipeline. For more information, see Retrain / Refit.

Create Individual Recipe: Create the Individual Recipe for the experiment being viewed. After the Individual Recipe has been created, you can either download it to your local filesystem or upload it as a Custom Recipe. If you upload the Individual Recipe as a Custom Recipe, it can be found on the Recipes page and in the Expert Settings under Include specific individuals. For more information on the Individual Recipe, see Custom Individual Recipe.

Note

The preceding “Download” options (with the exception of AutoDoc) appear as “Export” options if artifacts were enabled when Driverless AI was started. For more information, refer to Exporting Artifacts.

Experiment Insights and Scores

While an experiment is running or after it has completed, you can click Scores to view scores related to the model, or Insights to view insights (available for time series and Automatic Image Model experiments). For more information, refer to Model Insights and Model Scores.