Prompts

Create a prompt template

Overview

You can create multiple prompt templates for a Collection or Chat session, each designed to guide the language model in generating a specific kind of tailored text (for example, a Collection's description or a response to a question from a Chat session).

These templates serve as structured outlines with instructions for the model to follow, ensuring consistent and tailored outputs for your specific needs. In other words, prompt templates enable customization of responses from Enterprise h2oGPTe by providing reusable instructions sent alongside the user's query/request to the LLM.

Instructions

To create a new prompt template, follow these steps:

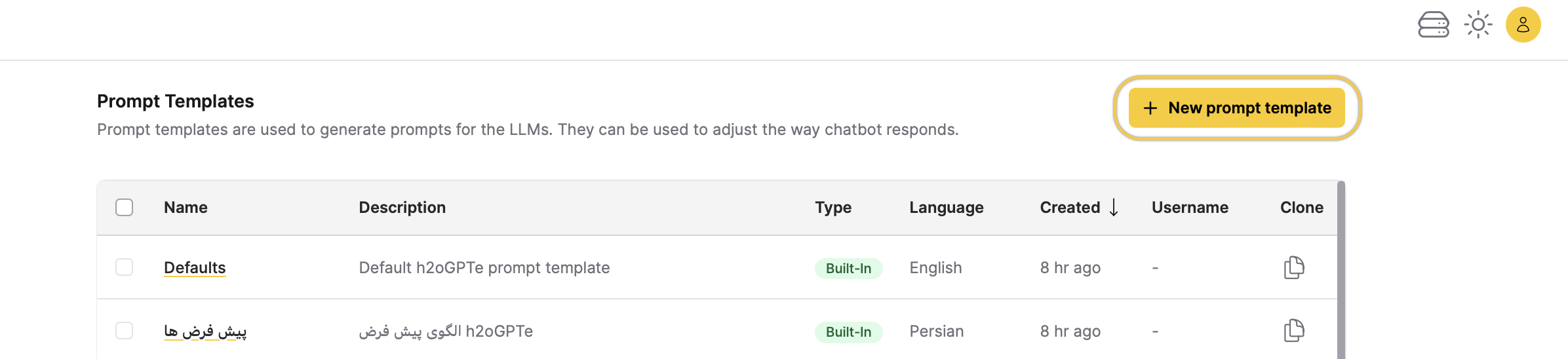

- On the Enterprise h2oGPTe navigation menu, click Prompts.

- Click + New prompt template.

- In the Template name box, enter a name for the prompt template.

- (Optional) In the setting sections that follow, make the changes you want. For a description of each setting, see Prompt template settings.

- Click + Create.

Create a prompt template for a specific language

Enterprise h2oGPTe features built-in prompt templates for Chinese, Turkish, Russian, Portuguese, Malay, Japanese, Indonesian, Hindi, French, Persian, and Spanish. Instead of using these defaults, you can create your own prompt template and select its language from a list of about 180 languages.

The built-in prompt templates support multiple languages by:

- Writing the System Prompt (personality) in the specified language.

- Writing all prompt extensions in the specified language (for example, the prompts placed before and after the RAG chunks, and the self-reflection prompts).

- Using an embedding model (the model that converts the documents to embeddings in the vector database) that supports the selected language.

If the LLM you choose to answer questions does not understand the selected language, you will likely get responses in English. For example, Mixtral only supports English, French, Italian, German, and Spanish.
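The fallback behavior described above can be sketched as a simple lookup. This is a minimal illustration, not product code: the `SUPPORTED_LANGUAGES` table and the `likely_response_language` function are hypothetical names, and only the Mixtral entry is taken from the text above.

```python
# Languages each model handles. The Mixtral entry comes from the text above;
# the table structure and the function name are illustrative assumptions.
SUPPORTED_LANGUAGES = {
    "mixtral": {"English", "French", "Italian", "German", "Spanish"},
}


def likely_response_language(model: str, template_language: str) -> str:
    """Return the language the answer will most likely be written in."""
    if template_language in SUPPORTED_LANGUAGES[model]:
        return template_language
    # The model does not understand the selected language, so expect English.
    return "English"


french = likely_response_language("mixtral", "French")
hindi = likely_response_language("mixtral", "Hindi")
```

A check like this before you pick a template language can help you avoid pairing a template with an LLM that cannot answer in that language.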

Clone a prompt template

Overview

Once you've designed a new prompt template, you can duplicate it to generate additional templates with identical or similar configurations. This feature streamlines the process of creating multiple templates tailored to your specific requirements.

Instructions

To clone a prompt template, follow these steps:

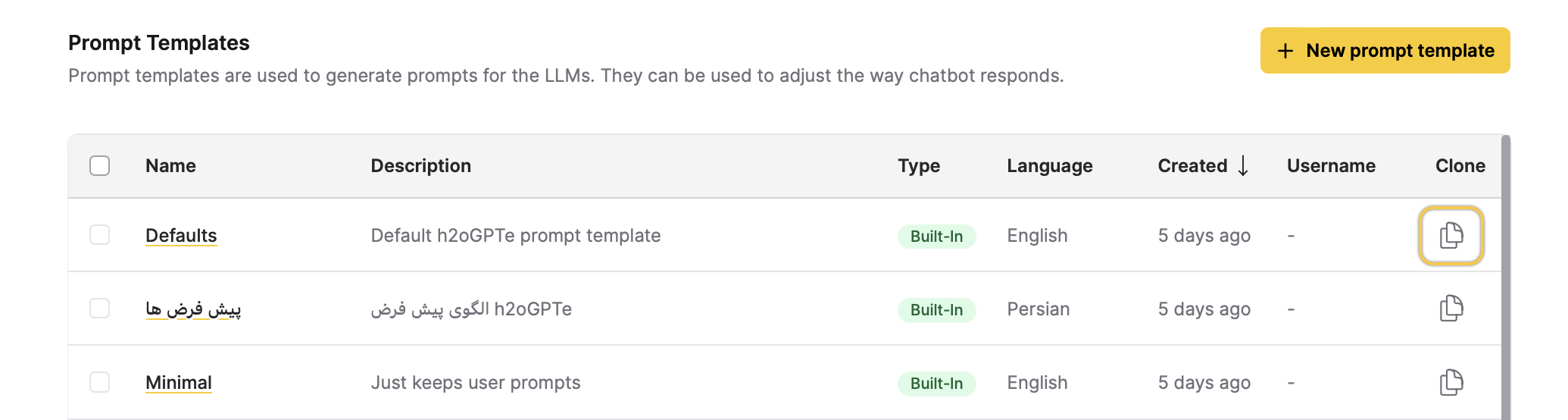

- On the Enterprise h2oGPTe navigation menu, click Prompts.

- In the Prompts table, locate the row of the prompt template you want to clone.

- Click Clone.

- In the Template name box, enter a name for the cloned prompt template.

- (Optional) In the setting sections that follow, make the changes you want. For a description of each setting, see Prompt template settings.

- Click + Clone.
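Conceptually, cloning copies every setting of the source template and changes only the name and whatever else you override. The sketch below illustrates that idea with a hypothetical `PromptTemplate` dataclass. The class, its field names, and `clone_template` are assumptions made for illustration and are not the product's data model or API.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class PromptTemplate:
    """Hypothetical stand-in for a prompt template; field names are illustrative."""
    name: str
    language: str = "English"
    description: str = ""
    system_prompt: str = ""


def clone_template(template: PromptTemplate, new_name: str, **overrides) -> PromptTemplate:
    """Copy every setting, then apply the new name and any optional changes."""
    return replace(template, name=new_name, **overrides)


original = PromptTemplate(
    name="Support answers",
    system_prompt="You are h2oGPTe, an expert question-answering document AI system.",
)
clone = clone_template(original, "Support answers (French)", language="French")
```

The clone keeps the original system prompt while the original template stays unchanged, which mirrors the optional-changes step in the procedure above.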

Prompt template settings

General

Template name

This setting defines the name for the prompt template.

Language

This setting defines the language for the prompt template.

Description

This setting defines the description for the prompt template.

Prompts

System prompt

This setting defines the system prompt: the initial instruction given to the language model to set up a specific task or interaction. In Enterprise h2oGPTe, the system prompt is the initial text input that the model receives before it generates a response for a Collection or Document.

System prompts can vary widely depending on the task. They serve as a guide for the model, providing context and direction for generating the desired output. System prompts can range from simple queries or instructions to more complex scenarios or prompts tailored to specific tasks, such as summarization.

In essence, a system prompt acts as the starting point for the language model's generation process, shaping the direction and content of its responses.

For example: You are h2oGPTe, an expert question-answering document AI system created by H2O.ai that performs like GPT-4 by OpenAI.

The system prompt provides overall alignment and safety and is used for all Chats and Document summarizations. Self-reflection uses its own system prompt.

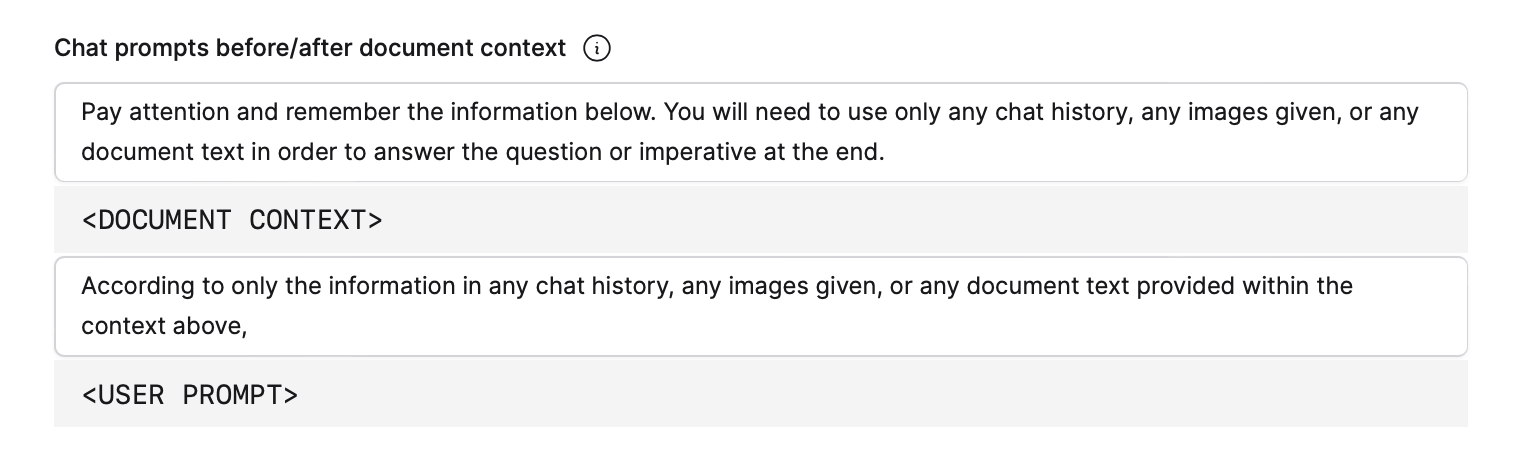

Chat prompts before/after document context

- Before text box: This text box lets you define a prompt that is placed before the Document context from your Collection. This prompt is part of the LLM prompt that Enterprise h2oGPTe sends to the large language model (LLM), that is, the question or instruction the LLM uses to generate the desired response. You can customize this RAG prompt according to your specific requirements and objectives.

For example: Pay attention and remember the information below. You will need to use only the given document context to answer the question or imperative at the end.

- After text box: This text box lets you define a prompt that is placed after the Document context from your Collection. This prompt is part of the LLM prompt that Enterprise h2oGPTe sends to the large language model (LLM), that is, the question or instruction the LLM uses to generate the desired response. You can customize this prompt according to your specific requirements and objectives.

For example: According to only the information in the document sources provided within the context above,

This setting is the most important set of prompts when doing grounded generation. Grounded generation, in the context of large language models (LLMs), refers to generating text that is not only coherent and grammatically correct but also aligned with a given context, prompt, or grounding information. This grounding information could include textual prompts, keywords, or any other form of input that constrains or guides the generation process.

In the context of LLMs like Enterprise h2oGPTe, grounding can help steer the generation process toward producing more relevant and appropriate text for a given task or scenario. For example, providing a prompt about a specific topic or domain can help the model generate more focused and accurate text within that domain.
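To make the role of the before and after text boxes concrete, the sketch below assembles a grounded prompt from the two example prompts given above. The function name `build_rag_prompt`, the sample chunks, and the exact layout (before text, context, after text, then the question) are assumptions for illustration. The actual prompt layout used by Enterprise h2oGPTe may differ.

```python
def build_rag_prompt(before: str, chunks: list[str], after: str, question: str) -> str:
    """Place the before text, the document context, the after text, and the question in order."""
    context = "\n\n".join(chunks)
    return f"{before}\n\n{context}\n\n{after} {question}"


before = (
    "Pay attention and remember the information below. You will need to use only "
    "the given document context to answer the question or imperative at the end."
)
after = "According to only the information in the document sources provided within the context above,"
# Hypothetical retrieved chunks, used only to show where the context lands.
chunks = ["Chunk 1: The warranty lasts 24 months.", "Chunk 2: Batteries are excluded."]
prompt = build_rag_prompt(before, chunks, after, "how long is the warranty?")
```

Because the after text ends with a comma, the user's question reads as a continuation of it, which is one way the example prompts constrain the model to the supplied context.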

HyDE No-RAG LLM prompt extension

This setting defines a prompt extension that helps retrieve more relevant context from the Document(s) during the first large language model (LLM) call when doing HyDE (hypothetical document embedding) based generation.
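A rough sketch of the HyDE idea: the first LLM call runs without any retrieved context and drafts a hypothetical answer, and that draft is then used together with the question to search the Documents. Everything named here (`hyde_retrieval_query`, `fake_llm`, the sample extension text, and the way the extension is appended to the question) is an assumption for illustration, not the product's implementation.

```python
from typing import Callable


def hyde_retrieval_query(question: str, extension: str, llm: Callable[[str], str]) -> str:
    """First LLM call (no RAG): draft a hypothetical answer, then search with question + draft."""
    draft = llm(f"{question}\n{extension}")
    return f"{question}\n{draft}"


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    return "Revenue grew because of higher subscription sales."


search_text = hyde_retrieval_query(
    "Why did revenue grow?",
    "Keep the answer brief and use domain keywords.",  # hypothetical extension text
    fake_llm,
)
```

The draft answer adds vocabulary that the bare question lacks, which is why a well-chosen extension can improve what the retrieval step finds.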

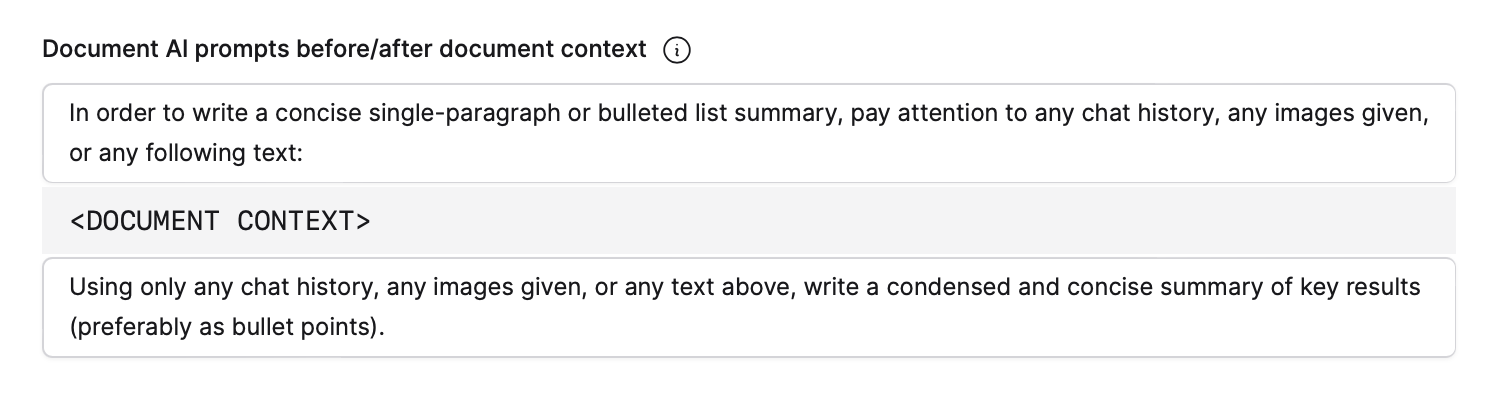

Document AI prompts before/after document context

This setting defines the pre-context and post-context prompts that Enterprise h2oGPTe uses to generate a Document summary.

- Pre-context: The first text box lets you specify a pre-context prompt: instructions or queries that guide the LLM before it receives the contextual information. This prompt steers the Document summary that the LLM generates, ensuring alignment with the intended context or query.

- Post-context: The second text box lets you specify a post-context prompt: an instruction or query that guides the LLM after it receives the contextual information. This prompt directs the Document summary that the LLM generates, ensuring that it aligns with the provided context or query.

Auto-gen collection description prompt

This setting defines a prompt to automatically generate a description for a Collection. This prompt guides the system as it crafts a concise and informative summary of the content or purpose of a Collection. Customizing this prompt allows you to tailor the generated descriptions to suit your needs, ensuring clarity and relevance for users accessing your Collection.

For example: Create a short one-sentence summary from the above context, with the goal to make it clear to the reader what this is about.

Auto-gen document summary prompts

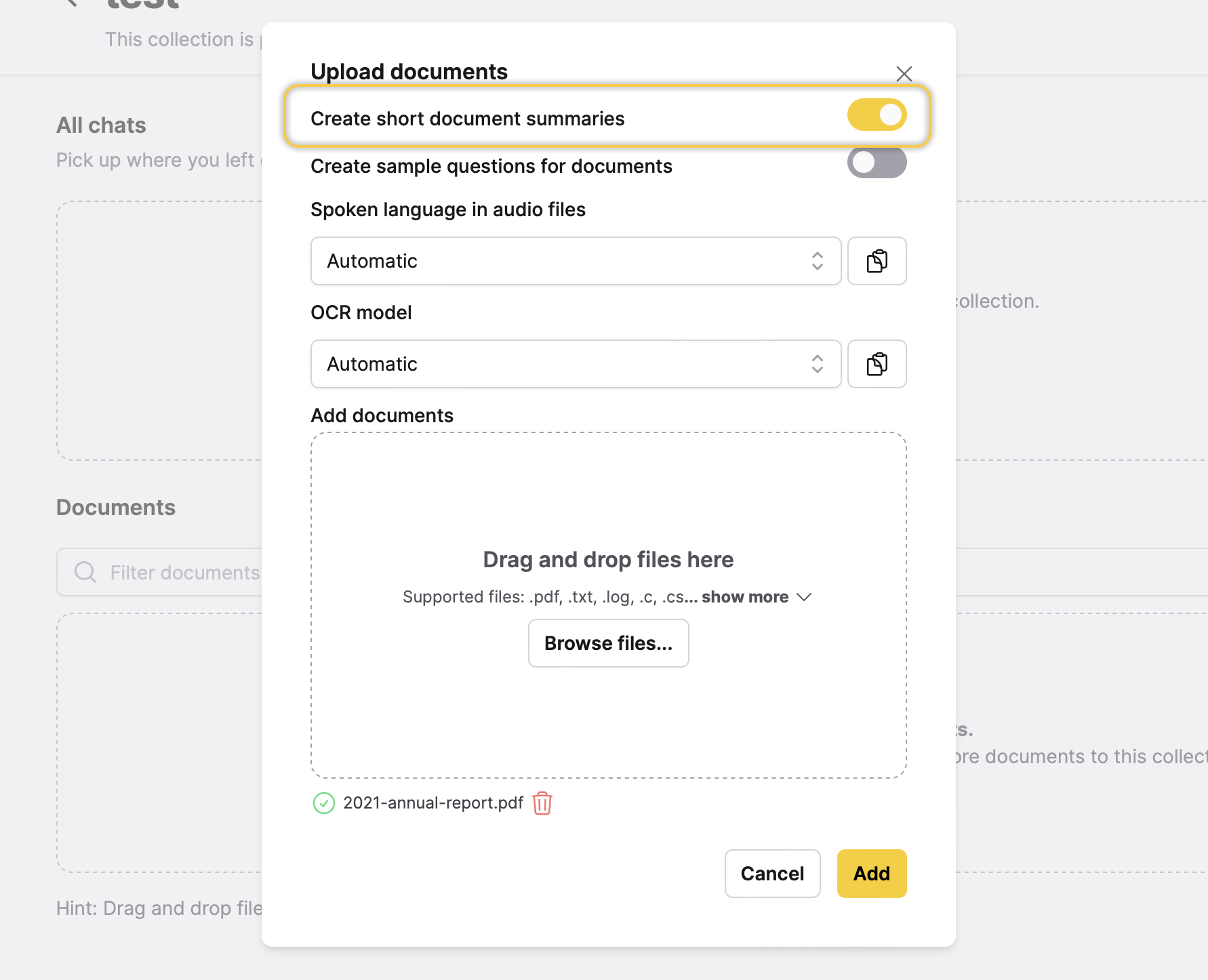

This setting defines the prompt that Enterprise h2oGPTe uses to generate a summary of a Document you import. The prompt is used only if you request an auto-generated summary for the Document you are about to import.

To learn how to request an auto-generated summary for a Document you are about to import, see Add a Document(s) to a Collection.

Prompt per image batch for vision models

This setting defines the prompt used to obtain an answer to the user's query from each batch of images when vision mode is enabled.

Prompt for final image batch reduction for vision models

This setting defines the prompt used to obtain the final answer for the user query from the per-image-batch answers when vision mode is enabled.
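The two vision prompts work together in a map-and-reduce pattern: one prompt is applied to each batch of images, and the other combines the per-batch answers into the final answer. The sketch below shows that flow with stub models. The function names, the batch size, and the prompt texts are hypothetical and only illustrate the pattern.

```python
from typing import Callable, Sequence


def answer_from_images(
    images: Sequence[str],
    batch_size: int,
    batch_prompt: str,
    final_prompt: str,
    question: str,
    vision_llm: Callable[[str, Sequence[str]], str],
    text_llm: Callable[[str], str],
) -> str:
    """Answer per image batch first, then reduce the partial answers to one final answer."""
    batches = [images[i:i + batch_size] for i in range(0, len(images), batch_size)]
    partial_answers = [vision_llm(f"{batch_prompt}\n{question}", batch) for batch in batches]
    return text_llm(f"{final_prompt}\n{question}\n" + "\n".join(partial_answers))


def fake_vision_llm(prompt: str, batch: Sequence[str]) -> str:
    """Stub vision model: reports how many images it received."""
    return f"{len(batch)} image(s) seen"


def fake_text_llm(prompt: str) -> str:
    """Stub text model: reports how many partial answers it was given."""
    return f"combined {prompt.count('image(s) seen')} partial answers"


final_answer = answer_from_images(
    ["page1.png", "page2.png", "page3.png", "page4.png", "page5.png"],
    batch_size=2,
    batch_prompt="Answer the question using only these pictures.",
    final_prompt="Merge the partial answers below into one answer.",
    question="What is the total on the invoice?",
    vision_llm=fake_vision_llm,
    text_llm=fake_text_llm,
)
```

With five images and a batch size of two, the per-batch prompt runs three times and the reduction prompt runs once.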

Self-reflection

Self-reflection system prompt (personality)

This setting defines the system prompt (personality) used for self-reflection. It instructs the language model to self-reflect according to predefined personality traits or characteristics, which encourages responses that reflect a particular personality and fosters consistency and depth in its interactions. With this setting, you can align the model's self-reflection responses with the desired personality traits.

For example: You are acting as a judge. You must be fair and impartial and pay attention to details.

Self-reflection prompt

This setting defines the self-reflection prompt, which asks the system to assess its own output after processing contextual information. It dictates the wording and format of the prompt, encouraging the system to evaluate the quality and relevance of its responses in light of the provided context. With this setting, you can guide the system to reflect on its output and improve its ability to generate coherent and appropriate responses within specific contexts.
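One way to picture how the two self-reflection settings combine is a separate review call in which the self-reflection system prompt sets the reviewer's personality and the self-reflection prompt frames the original prompt and answer for evaluation. The message layout, the function name, and the sample reflection prompt below are assumptions. Only the judge personality text comes from the example above.

```python
def build_reflection_messages(
    reflection_system_prompt: str,
    reflection_prompt: str,
    original_prompt: str,
    answer: str,
) -> list[dict[str, str]]:
    """Build a chat-style message list for a self-reflection call (illustrative layout)."""
    user_content = (
        f"{reflection_prompt}\n\nPrompt:\n{original_prompt}\n\nAnswer:\n{answer}"
    )
    return [
        {"role": "system", "content": reflection_system_prompt},
        {"role": "user", "content": user_content},
    ]


answer = "The warranty lasts 24 months."
messages = build_reflection_messages(
    "You are acting as a judge. You must be fair and impartial and pay attention to details.",
    "Review the answer for accuracy and relevance to the prompt.",  # hypothetical wording
    "How long is the warranty?",
    answer,
)
```

Keeping the reviewer's system prompt separate from the main system prompt matches the note above that self-reflection uses its own system prompt.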

Sample questions

Document sample questions prompt

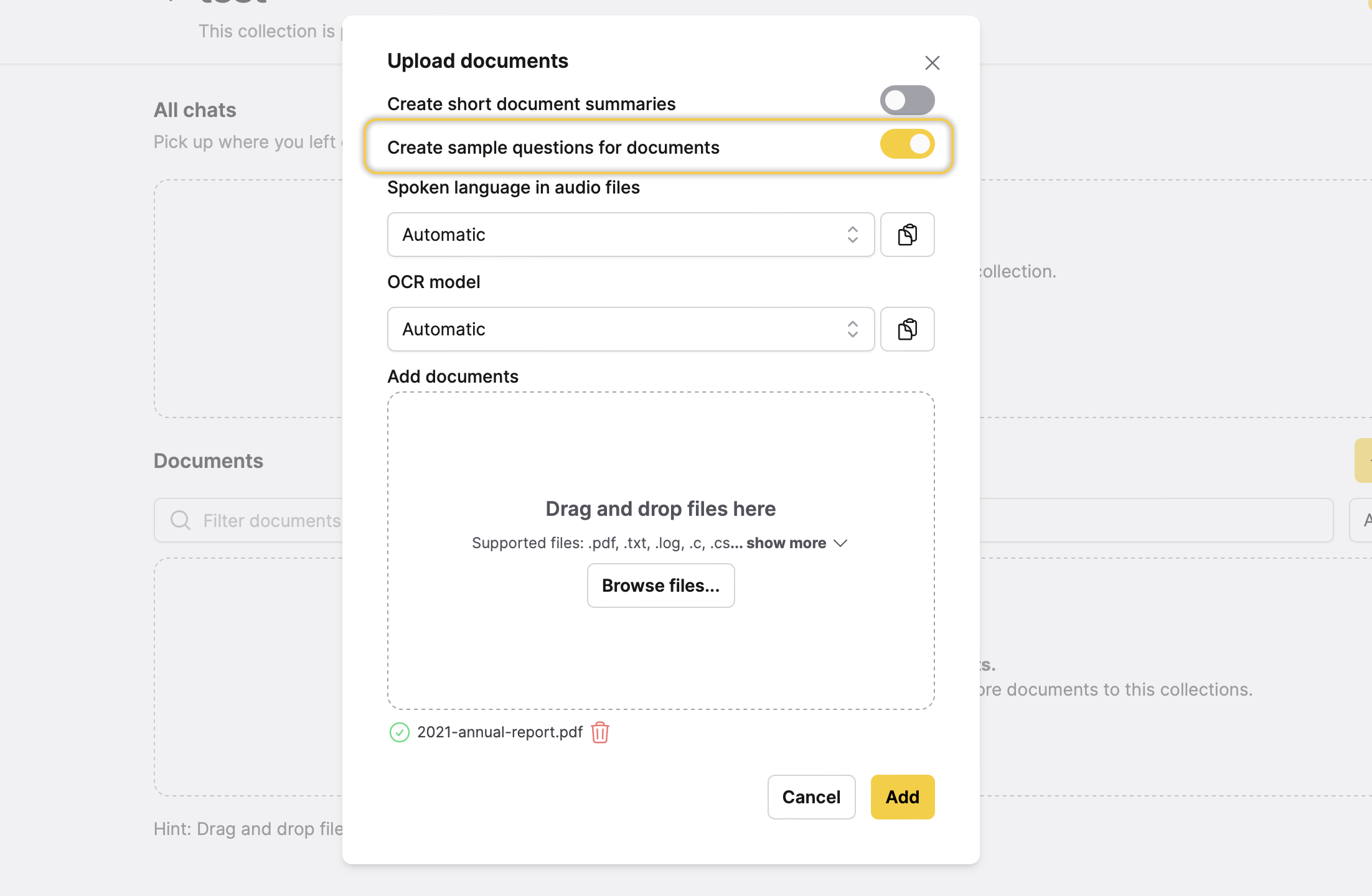

This setting defines the prompt used to generate a set of questions for a Document in a Collection. The prompt is used if you request auto-generated questions for the Document you are about to import.

- By default, Enterprise h2oGPTe displays four auto-generated questions when you Chat with the Collection that contains the Document. These questions are displayed when the following setting is turned on for the Document(s) being imported: Create sample questions for documents.

- To learn how to request auto-generated questions for the Document you are about to import, see Add a Document(s) to a Collection.

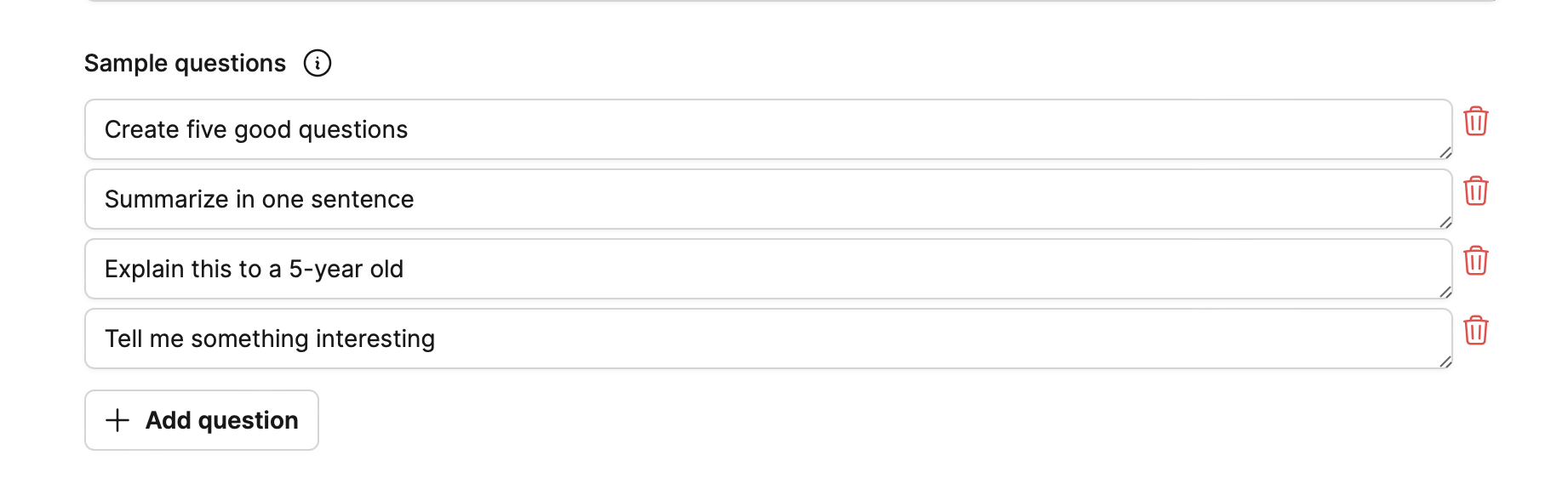

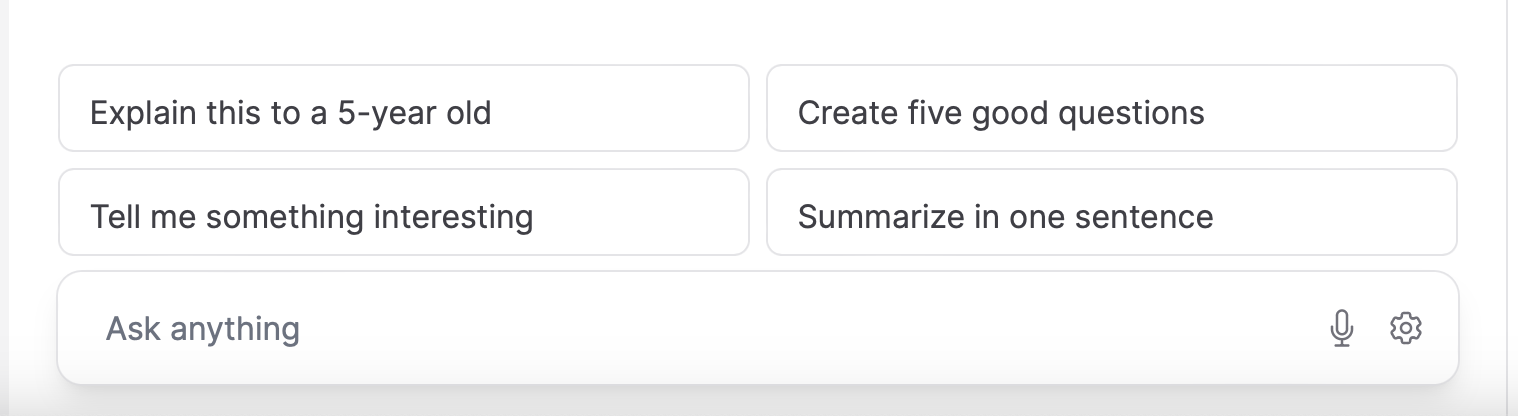

Sample questions

In the Sample questions setting section, you can define which sample questions are available when you first Chat with a Collection that uses this prompt template.

By default, Enterprise h2oGPTe displays four sample questions when you Chat with a Collection that uses this prompt template. These sample questions are displayed when the following setting is turned off for the Document(s) being imported: Create sample questions for documents.
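The interplay between the two kinds of sample questions can be summarized as a small selection rule: auto-generated questions are shown when Create sample questions for documents is on, and the template's own sample questions are shown otherwise. The function below is a hypothetical sketch of that rule. Its name, parameters, and sample questions are illustrative only.

```python
def questions_to_display(
    create_sample_questions: bool,
    auto_generated: list[str],
    template_questions: list[str],
    limit: int = 4,
) -> list[str]:
    """Pick which sample questions a Chat shows, capped at four by default."""
    source = auto_generated if create_sample_questions else template_questions
    return list(source[:limit])


template_questions = ["What is this about?", "Who wrote it?", "When?", "Why?", "How?"]
auto_generated = ["What does section 2 cover?", "What is the main finding?"]

shown_when_off = questions_to_display(False, auto_generated, template_questions)
shown_when_on = questions_to_display(True, auto_generated, template_questions)
```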
