MLflow PyTorch example

This example demonstrates how you can upload and deploy an MLflow PyTorch model using the MLOps Python client. It uploads an MLflow PyTorch model to MLOps and analyzes it. It then sets its metadata and parameters, and deploys it to the dev environment in MLOps.

- Install PyTorch

- Install MLflow

- Install scikit-learn

- You need values for the following constants to run the example. Contact your administrator to obtain deployment-specific values.

| Constant | Value | Description |
|---|---|---|
| `MLOPS_API_URL` | Usually: `https://api.mlops.my.domain` | Defines the URL for the MLOps Gateway component. You can verify the correct URL by navigating to the API URL in your browser. It should provide a page with a list of available routes. |
| `TOKEN_ENDPOINT_URL` | `https://mlops.keycloak.domain/auth/realms/[fill-in-realm-name]/protocol/openid-connect/token` | Defines the token endpoint URL of the Identity Provider. This uses Keycloak as the Identity Provider. Keycloak Realm should be provided. |
| `REFRESH_TOKEN` | `<your-refresh-token>` | Defines the user's refresh token. |
| `CLIENT_ID` | `<your-client-id>` | Sets the client id for authentication. This is the client you will be using to connect to MLOps. |
| `PROJECT_NAME` | `MLflow+PyTorch Upload And Deploy Example` | Defines a project that the script will create for the MLflow model. |
| `EXPERIMENT_NAME` | `pytorch-mlflow-model` | Defines the experiment display name. |
| `DEPLOYMENT_ENVIRONMENT` | `DEV` | Defines the target deployment environment. |
| `REFRESH_STATUS_INTERVAL` | `1.0` | Defines a refresh interval for the deployment health check. |
| `MAX_WAIT_TIME` | `300` | Defines maximum waiting time for the deployment to become healthy. |
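
To make the roles of `TOKEN_ENDPOINT_URL`, `REFRESH_TOKEN`, and `CLIENT_ID` more concrete, the following is a minimal sketch of the standard OAuth 2.0 refresh-token request that these three values describe. It only builds the request and never sends it. It assumes the identity provider follows the usual token endpoint contract (a form-encoded `POST` with `grant_type=refresh_token`). The helper name `build_refresh_request` is hypothetical and is not part of the MLOps Python client, which is expected to perform the token exchange itself.

```python
import urllib.parse
import urllib.request

# Placeholder values copied from the table; replace them with real ones.
TOKEN_ENDPOINT_URL = (
    "https://mlops.keycloak.domain/auth/realms/"
    "[fill-in-realm-name]/protocol/openid-connect/token"
)
REFRESH_TOKEN = "<your-refresh-token>"
CLIENT_ID = "<your-client-id>"


def build_refresh_request(token_url, refresh_token, client_id):
    """Build (but do not send) an OAuth 2.0 refresh-token grant request.

    Hypothetical helper for illustration only.
    """
    form = urllib.parse.urlencode(
        {
            "grant_type": "refresh_token",
            "refresh_token": refresh_token,
            "client_id": client_id,
        }
    ).encode("ascii")
    return urllib.request.Request(
        token_url,
        data=form,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


request = build_refresh_request(TOKEN_ENDPOINT_URL, REFRESH_TOKEN, CLIENT_ID)
print(request.get_method(), request.full_url)
```

If the identity provider rejects such a request, the realm name in the URL, the client id, or an expired refresh token are the first values worth checking.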

The following steps demonstrate how you can use the MLOps Python client to upload and deploy an MLflow PyTorch model in MLOps.

-

Change the values of the following constants in your

MLflowPyTorchExample.pyfile as given in the preceding data table.- Format

- Sample

MLflowPyTorchExample.py### Constants

MLOPS_API_URL = <MLOPS_API_URL>

TOKEN_ENDPOINT_URL = <TOKEN_ENDPOINT_URL>

REFRESH_TOKEN = <REFRESH_TOKEN>

CLIENT_ID = <CLIENT_ID>

PROJECT_NAME = <PROJECT_NAME>

EXPERIMENT_NAME = <EXPERIMENT_NAME>

DEPLOYMENT_ENVIRONMENT = <DEPLOYMENT_ENVIRONMENT>

REFRESH_STATUS_INTERVAL = <REFRESH_STATUS_INTERVAL>

MAX_WAIT_TIME = <MAX_WAIT_TIME>MLflowPyTorchExample.py### Constants

MLOPS_API_URL = "https://api.mlops.my.domain"

TOKEN_ENDPOINT_URL="https://mlops.keycloak.domain/auth/realms/[fill-in-realm-name]/protocol/openid-connect/token"

REFRESH_TOKEN="<your-refresh-token>"

CLIENT_ID="<your-mlops-client>"

PROJECT_NAME = "MLflow+PyTorch Upload And Deploy Example"

EXPERIMENT_NAME = "pytorch-mlflow-model"

DEPLOYMENT_ENVIRONMENT = "DEV"

REFRESH_STATUS_INTERVAL = 1.0

MAX_WAIT_TIME = 300 -

Run the

MLflowPyTorchExample.pyfile.- Execution

- Sample response

python3 MLflowPyTorchExample.pyDeployment has become healthy -

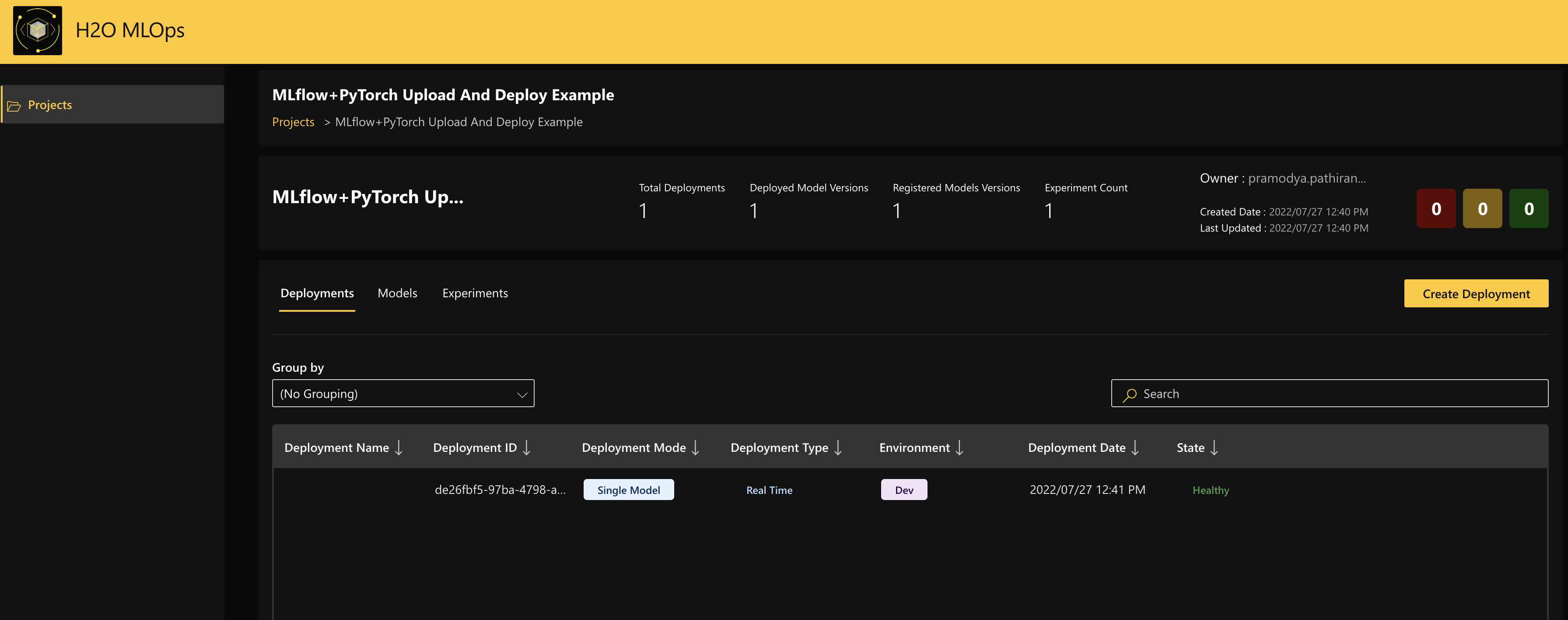

Finally, navigate to MLOps and click the project name

MLflow+PyTorch Upload And Deploy Exampleunder Projects to view the deployed model. Note

NoteFor more information about model deployments in MLOps, see Understand deployments in MLOps.

Example walkthrough

This section provides a walkthrough of each of the sections in the MLflowPyTorchExample.py file.

- Include the helper function, which waits for the deployment to become healthy.

- Convert the extracted metadata into storage-compatible value objects.

- Train the PyTorch model.

  ```python
  # Imports used by this excerpt.
  import mlflow
  import sklearn.datasets
  import torch
  import torch.utils.data
  from mlflow.models import signature

  # Train the PyTorch model.
  X_train, y_train = sklearn.datasets.load_wine(return_X_y=True, as_frame=True)
  X_tensor = torch.from_numpy(X_train.to_numpy())
  y_tensor = torch.from_numpy(y_train.to_numpy())
  dataset = torch.utils.data.dataset.TensorDataset(X_tensor, y_tensor)

  torch_model = torch.nn.Linear(13, 1)
  loss_fn = torch.nn.MSELoss(reduction="sum")
  learning_rate = 1e-6

  # Take one manual gradient-descent step per sample.
  for batch in dataset:
      torch_model.zero_grad()
      X, y = batch
      y_prediction = torch_model(X.float())
      # Reshape the scalar target to match the (1,) prediction and avoid
      # a size-mismatch warning from MSELoss.
      loss = loss_fn(y_prediction, y.float().reshape(1))
      loss.backward()
      with torch.no_grad():
          for param in torch_model.parameters():
              param -= learning_rate * param.grad

  # Inferring and setting the model signature.
  # A model signature is mandatory for models that are going to be loadable by
  # the server. Only ColSpec inputs and outputs are supported.
  model_signature = signature.infer_signature(X_train)
  model_signature.outputs = mlflow.types.Schema(
      [mlflow.types.ColSpec(name="quality", type=mlflow.types.DataType.float)]
  )
  ```

- Create a project in MLOps and create an artifact in MLOps storage.

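- Store, zip, and upload the model.

  ```python
  # Imports used by this excerpt.
  import os
  import shutil
  import tempfile

  import mlflow

  # Storing, zipping, and uploading the model.
  # torch_model and model_signature come from the training step;
  # mlops_client and artifact come from the preceding project and artifact step.
  model_tmp = tempfile.TemporaryDirectory()
  try:
      model_dir_path = os.path.join(model_tmp.name, "wine_model")
      mlflow.pytorch.save_model(
          torch_model, model_dir_path, signature=model_signature
      )
      zip_path = shutil.make_archive(
          os.path.join(model_tmp.name, "artifact"), "zip", model_dir_path
      )
      with open(zip_path, mode="rb") as zipped:
          mlops_client.storage.artifact.upload_artifact(
              file=zipped, artifact_id=artifact.id
          )
  finally:
      # Always remove the temporary directory, even if the upload fails.
      model_tmp.cleanup()
  ```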
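
The archive layout produced by the store-and-zip step is easy to misread, so here is a small standard-library sketch of what `shutil.make_archive` does with the same argument pattern. It does not use MLflow or the MLOps client: a placeholder `MLmodel` file stands in for the directory that `mlflow.pytorch.save_model` would write, and the file content is made up for illustration.

```python
import os
import shutil
import tempfile
import zipfile

with tempfile.TemporaryDirectory() as tmp:
    # Stand-in for the saved model directory. MLflow writes an "MLmodel"
    # descriptor at the root of a saved model; the content here is a placeholder.
    model_dir = os.path.join(tmp, "wine_model")
    os.makedirs(model_dir)
    with open(os.path.join(model_dir, "MLmodel"), "w") as handle:
        handle.write("placeholder\n")

    # The base name carries no extension: make_archive appends ".zip" and
    # returns the full path. The third argument is the root directory, so the
    # files inside it end up at the top level of the archive.
    zip_path = shutil.make_archive(os.path.join(tmp, "artifact"), "zip", model_dir)

    with zipfile.ZipFile(zip_path) as archive:
        names = archive.namelist()

print(os.path.basename(zip_path))
print(names)
```

Because `wine_model` is passed as the root directory, its files are stored at the top level of `artifact.zip` rather than under a `wine_model/` prefix. The `with tempfile.TemporaryDirectory()` form used here is an alternative to the explicit `try`/`finally` cleanup and removes the directory on exit in the same way.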

- Customize the composition of the deployment and specify the deployment as a single deployment.

- Finally, create the deployment and wait for the deployment to become healthy. This analyzes and sets the metadata and parameters of the model, and deploys it to the `DEV` environment.
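
The waiting behavior described in the walkthrough can be sketched as a simple polling loop driven by `REFRESH_STATUS_INTERVAL` and `MAX_WAIT_TIME`. This is a minimal sketch, not the helper from `MLflowPyTorchExample.py`: `get_state` is a hypothetical zero-argument callable standing in for whatever status lookup the MLOps client provides, the state names other than healthy are invented for the demonstration, and both constants are assumed to be in seconds.

```python
import time

REFRESH_STATUS_INTERVAL = 1.0  # assumed to be seconds between status checks
MAX_WAIT_TIME = 300  # assumed to be seconds before giving up


def wait_until_healthy(
    get_state,
    interval=REFRESH_STATUS_INTERVAL,
    max_wait=MAX_WAIT_TIME,
    sleep=time.sleep,
    clock=time.monotonic,
):
    """Poll get_state() until it returns "HEALTHY" or max_wait elapses.

    get_state is a hypothetical stand-in for the real status call.
    Returns the last state that was observed.
    """
    deadline = clock() + max_wait
    while True:
        state = get_state()
        if state == "HEALTHY" or clock() >= deadline:
            return state
        sleep(interval)


# Usage with a fake status source that becomes healthy on the third poll.
# The sleep function is replaced with a no-op so the demo finishes instantly.
states = iter(["PENDING", "LAUNCHING", "HEALTHY"])
final_state = wait_until_healthy(lambda: next(states), sleep=lambda _: None)
if final_state == "HEALTHY":
    print("Deployment has become healthy")
else:
    print(f"Deployment is still {final_state} after {MAX_WAIT_TIME} seconds")
```

Returning the last observed state, rather than raising on timeout, lets the caller decide how to report a deployment that never became healthy. Passing `sleep` and `clock` as parameters is a design choice made here only so the loop can be exercised without real waiting.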
