Tutorial 3A: Text-entity recognition annotation task

Overview

This tutorial describes the process of creating a text-entity recognition annotation task, including specifying an annotation task rubric for it. To illustrate the process, we will annotate a dataset containing user reviews (in text format) and ratings (from 0 to 5) of Amazon products.

Step 1: Explore dataset

We are going to use the preloaded Amazon reviews demo dataset for this tutorial. The dataset contains 180 samples (text), each containing a review of an Amazon product. Let's quickly explore the dataset.

- On the H2O Label Genie navigation menu, click Datasets.

- In the Datasets table, click amazon-reviews-demo.

Step 2: Create an annotation task

Now that we understand the dataset, let's create an annotation task that enables us to annotate it. For this tutorial, the annotation task is a text-entity recognition task: locating named entities in unstructured text and classifying them into predefined categories.

- Click New annotation task.

- In the Task name box, enter: tutorial-3a

- In the Task description box, enter: Annotate a dataset containing reviews from Amazon products.

- In the Select task list, select Entity recognition.

- In the Select text column box, select comment.

- Click Create task.

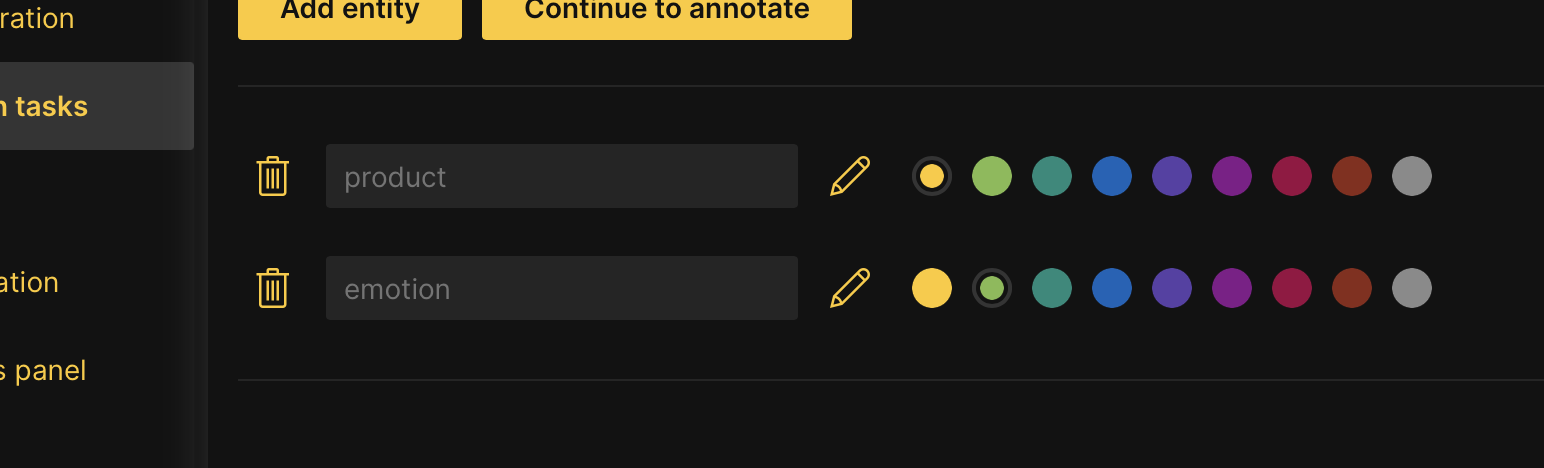

Step 3: Specify an annotation task rubric

Before we can start annotating our dataset, we need to specify an annotation task rubric. An annotation task rubric refers to the labels (for example, object classes) you want to use when annotating your dataset. For our dataset, let's define the following two entities: Product and Emotion.

Product refers to the Amazon product being reviewed, while Emotion refers to one or more feelings expressed in the review.
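As a rough mental model, the rubric above is just a fixed set of labels that every annotation must draw from. The following minimal Python sketch shows that idea; the `Rubric` type and `make_rubric` helper are hypothetical names used for illustration and are not part of H2O Label Genie.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rubric:
    """A fixed set of entity labels for one annotation task (hypothetical type)."""

    labels: frozenset

    def allows(self, label: str) -> bool:
        # Ignore case and surrounding whitespace when checking a label.
        return label.strip().lower() in self.labels


def make_rubric(*names: str) -> Rubric:
    # Normalize names so "Product" and "product" refer to the same entity.
    return Rubric(labels=frozenset(name.strip().lower() for name in names))


# The two entities defined in this tutorial.
rubric = make_rubric("product", "emotion")

print(rubric.allows("Emotion"))   # label is in the rubric
print(rubric.allows("Location"))  # label is not in the rubric
```

Keeping the label set small and fixed up front is what makes annotations from different reviews comparable later.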

- In the New object name box, enter: product

- Click Add.

- Click Add entity.

- In the New object name box, enter: emotion

- Click Continue to annotate.

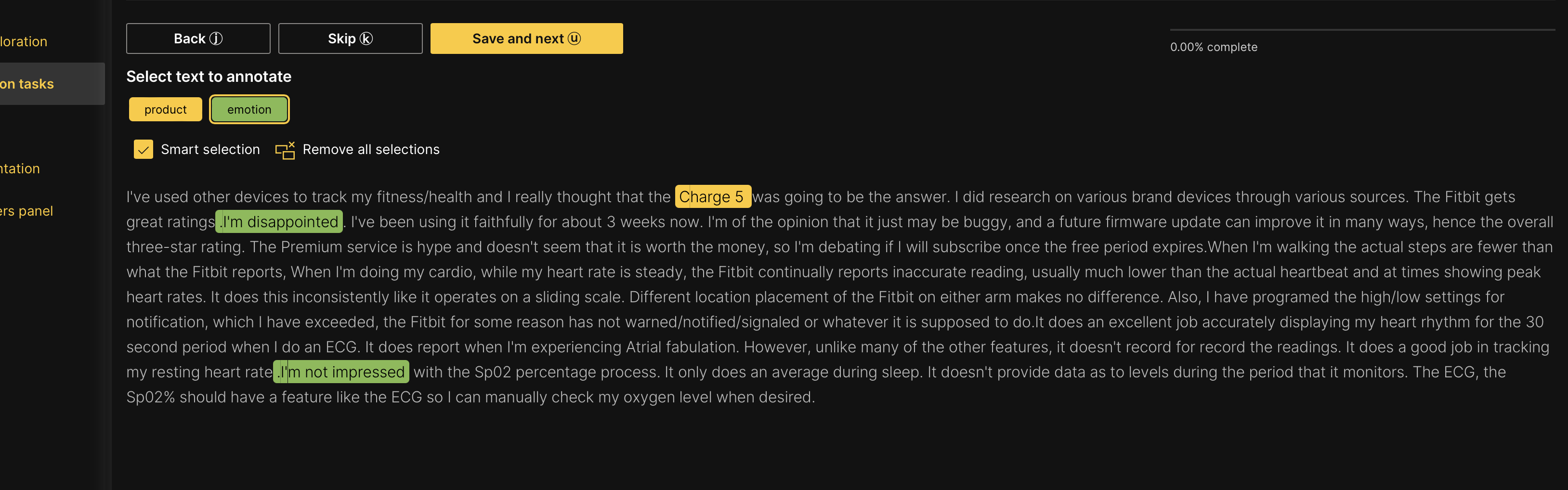

Step 4: Annotate dataset

In the Annotate tab, you can individually annotate each review (text) in the dataset. Let's annotate the first review.

- Let's start by annotating the product entities in the review. Highlight "Charge 5".

- Now, let's annotate the review's emotion entities. Click Emotion.

- Highlight "I'm disappointed".

Note: You can attribute a particular entity (Product or Emotion) to a word by clicking it.

- Highlight "I'm not impressed".

- Click Save and next.

Note

- Save and next saves the annotated review.

- To skip a review and annotate it later, click Skip.

- Skipped reviews (samples) reappear after all non-skipped reviews are annotated.

- Annotate all dataset samples.

Note

At any point in an annotation task, you can download the already annotated (approved) samples. You do not need to fully annotate a dataset to download already annotated samples. To learn more, see Download an annotated dataset.
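To make the result of Step 4 concrete, each highlight can be thought of as a labeled character span within the review text. The sketch below is only an illustration under stated assumptions: the review sentence is invented (the tutorial does not reproduce the full review), the `EntitySpan` type and `find_span` helper are hypothetical, and nothing here reflects the actual format of samples downloaded from H2O Label Genie.

```python
from typing import List, NamedTuple


class EntitySpan(NamedTuple):
    """One highlighted entity: character offsets plus its rubric label (hypothetical type)."""

    start: int  # index of the first character of the highlight
    end: int    # index one past the last character of the highlight
    label: str  # rubric label, for example "product" or "emotion"
    text: str   # the highlighted text itself


def find_span(review: str, phrase: str, label: str) -> EntitySpan:
    # Locate the first occurrence of the phrase, mimicking a manual highlight.
    start = review.find(phrase)
    if start == -1:
        raise ValueError(f"phrase not found in review: {phrase!r}")
    return EntitySpan(start, start + len(phrase), label, phrase)


# Invented review text that contains the phrases highlighted in Step 4.
review = (
    "I bought the Charge 5 last month. I'm disappointed with the battery, "
    "and I'm not impressed by the screen."
)

spans: List[EntitySpan] = [
    find_span(review, "Charge 5", "product"),
    find_span(review, "I'm disappointed", "emotion"),
    find_span(review, "I'm not impressed", "emotion"),
]

for span in spans:
    # Slicing the review with the stored offsets recovers the highlighted text.
    print(span.label, repr(review[span.start:span.end]))
```

Storing offsets rather than only the highlighted text keeps each annotation unambiguous when the same phrase appears more than once in a review.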

Summary

In this tutorial, we learned how to create a text-entity recognition annotation task, specify its annotation task rubric, and annotate the dataset.

Next

To learn how to specify an annotation task rubric and annotate datasets for other annotation tasks in computer vision (CV), natural language processing (NLP), and audio, see Tutorials.
