A Chat session

Overview

A Chat session is an interaction between you and Enterprise h2oGPTe that consists of a series of prompts and answers.

- You can see who created each chat session on the chats and collection pages.

- When using a collection-based chat, the collection name is displayed so you can see which content the agent uses.

Components of a Chat session

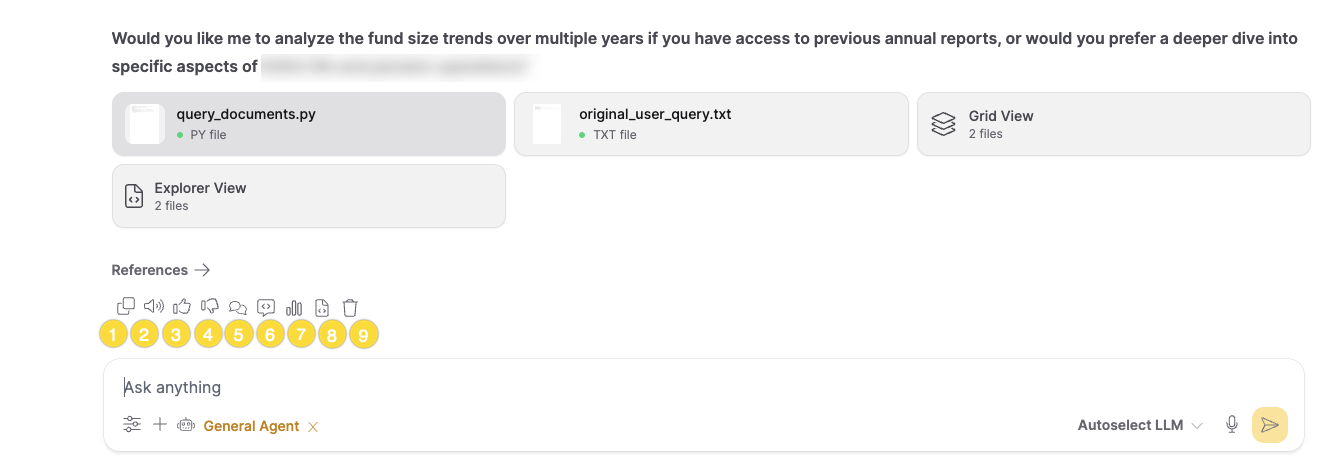

The chat response toolbar provides quick access to actions you can take on each response. The toolbar appears as a horizontal row of icons below each response.

- Copy response: This button copies the LLM response to your clipboard.

- Text-to-speech: This button reads the response aloud. Click the speaker icon to convert the text response into audio, and click it again to stop playback.

- Upvote response: This button lets you give positive feedback on the usefulness of a response, which helps developers improve the model. Your feedback is stored on the Feedback page. To learn more, see Feedback.

- Downvote response: This button lets you give negative feedback on the usefulness of a response, which helps developers improve the model. Your feedback is stored on the Feedback page. To learn more, see Feedback.

- Chat history: This button opens the agent chat history panel, which displays the internal conversation and reasoning process that agents used to generate the response. It is particularly useful for understanding how an agent arrived at its answer.

- LLM Prompt (excl. images): This button shows the full LLM prompt, which is constructed from the RAG prompt before context, the Document context, and the RAG prompt after context. The LLM prompt is the complete text sent to the LLM to generate the response.

  Note: This button is only available if you have developer settings enabled. For more information, see System Dashboard.

- Usage stats: This button opens the Usage Stats card, which shows detailed performance and resource-utilization information for the Chat session. The card includes the following metrics for tracking the efficiency and cost of the session:

  - response_time: The time the LLM (Large Language Model) takes to generate a response to the user's query.

  - retrieval_time: The time, in seconds, taken by the retrieval step, that is, fetching the context used for the response.

  - cost: The total cost of the Chat session, in US dollars. It covers processing the user's query and producing the corresponding response.

  - llm_args: The arguments (parameters) passed to the LLM.

  - num_chunks: The number of chunks the data is divided into.

  - num_images: The number of images involved in generating the LLM response.

  - usage: Additional details about the resources used for the LLM response:

    - llm: The LLM used to create the response.

    - input_tokens: The number of tokens in the user's input.

    - output_tokens: The number of tokens in the generated output.

    - tokens_per_second: The rate at which tokens are processed, in tokens per second.

    - origin: The method or approach used to generate the LLM response.

    - cost: The cost associated with the LLM response.

- Python client code: This button displays the equivalent Python client code that replicates the query programmatically. Use this code to integrate the same query into your applications or scripts with the Enterprise h2oGPTe Python client.

  Note: This button is only available if you have developer settings enabled. For more information, see System Dashboard.

- Delete response: This button deletes the LLM response from the chat session.
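If you copy the values from the Usage Stats card into a script, a small helper can total tokens and cost per LLM. The following is a minimal sketch, not the product's own format: it assumes the stats are available as a Python dictionary keyed by the field names listed for the Usage stats button, with `usage` holding a list of per-call entries. The `sample_stats` values and the `example-llm` name are made up for illustration.

```python
from collections import defaultdict


def summarize_usage(stats):
    """Total input tokens, output tokens, and cost per LLM.

    Assumes (hypothetically) that stats["usage"] is a list of dicts
    using the field names shown on the Usage Stats card.
    """
    per_llm = defaultdict(
        lambda: {"input_tokens": 0, "output_tokens": 0, "cost": 0.0}
    )
    for entry in stats.get("usage", []):
        bucket = per_llm[entry["llm"]]
        bucket["input_tokens"] += entry.get("input_tokens", 0)
        bucket["output_tokens"] += entry.get("output_tokens", 0)
        bucket["cost"] += entry.get("cost", 0.0)
    return dict(per_llm)


# Illustrative values only; they are not real measurements.
sample_stats = {
    "response_time": 4.2,
    "retrieval_time": 0.3,
    "cost": 0.003,
    "num_chunks": 5,
    "num_images": 0,
    "usage": [
        {"llm": "example-llm", "input_tokens": 1200, "output_tokens": 150,
         "tokens_per_second": 40.0, "origin": "rag", "cost": 0.002},
        {"llm": "example-llm", "input_tokens": 300, "output_tokens": 50,
         "tokens_per_second": 35.0, "origin": "rag", "cost": 0.001},
    ],
}

summary = summarize_usage(sample_stats)
print(summary["example-llm"]["input_tokens"])
```

Grouping by `llm` keeps the totals meaningful when a single response involves more than one model call.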

Interactive visualizations

When using the Interactive Visualizer prompt template, chat responses can include interactive HTML visualizations such as charts, data explorers, and animated diagrams. These visualizations appear inline within the response. You can interact with them directly. To learn more, see Interactive visualizations.

References

The References section highlights the passages of the Document from which the context for the response was derived. Use the references slider to show only the highest-scoring referenced passages instead of every match.
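The effect of narrowing references to the highest-scoring passages can be sketched in a few lines. This is only one plausible reading of the slider, not its actual implementation: the `score` and `text` keys, the `min_score` threshold, and the `limit` parameter are all hypothetical names chosen for illustration.

```python
def top_references(references, min_score=0.0, limit=None):
    """Return references at or above min_score, highest score first.

    `references` is assumed to be a list of dicts with a numeric
    "score" key (a hypothetical shape, not the product's data model).
    """
    kept = [ref for ref in references if ref["score"] >= min_score]
    kept.sort(key=lambda ref: ref["score"], reverse=True)
    return kept if limit is None else kept[:limit]


# Illustrative values only.
refs = [
    {"text": "passage a", "score": 0.9},
    {"text": "passage b", "score": 0.2},
    {"text": "passage c", "score": 0.6},
]
for ref in top_references(refs, min_score=0.5):
    print(ref["text"], ref["score"])
```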

Control generation with the Python client

You can control the state of an ongoing chat message generation using the following client methods. Asynchronous equivalents are also available in h2ogpte_async.py.

- pause_chat(question_id): Halts streaming temporarily, allowing it to be resumed later.

- resume_chat(question_id): Resumes a paused stream.

- stop_chat(question_id): Permanently cancels the generation.

- finish_chat(question_id): Signals the LLM to naturally complete its current thought.

Each method returns a Result object with status="completed" on success, or raises an exception on failure.

Example:

```python
# 1. Capture the question_id from an active stream's callback
question_id = None

def on_message(msg):
    global question_id
    # The system assigns the user's prompt ID to the reply_to field
    if msg.reply_to and question_id is None:
        question_id = msg.reply_to
        print(f"Captured active question ID: {question_id}")

# Start the stream (this usually blocks, so control methods must run concurrently)
# session.query("Explain quantum computing", callback=on_message)

# ------------------------------------------------------------------
# 2. Concurrently (e.g., from a UI button handler or another thread),
#    use the captured question_id to control the stream:
# ------------------------------------------------------------------

# Pause the stream
client.pause_chat(question_id)

# Resume the stream
client.resume_chat(question_id)

# Signal it to finish its current thought naturally
client.finish_chat(question_id)

# Or permanently stop it
client.stop_chat(question_id)
```
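Because the streaming query usually blocks, the control call has to come from another thread. The sketch below shows one way to arrange that with Python's standard `threading` module. `FakeClient` and `fake_stream` are hypothetical stand-ins so the example runs without a server; they are not part of the h2oGPTe client. In real code, you would pass your authenticated client and replace the `fake_stream(...)` call with the blocking `session.query(..., callback=on_message)`.

```python
import threading
import time
from types import SimpleNamespace


class FakeClient:
    """Hypothetical stand-in for the real client; records control calls."""

    def __init__(self):
        self.calls = []
        self.stopped = threading.Event()

    def stop_chat(self, question_id):
        self.calls.append(("stop_chat", question_id))
        self.stopped.set()
        # Mirrors the documented contract: status="completed" on success.
        return SimpleNamespace(status="completed")


def fake_stream(client, callback, question_id="q-123", max_chunks=50):
    """Hypothetical blocking stream: emits chunks until it is stopped."""
    sent = 0
    for _ in range(max_chunks):
        if client.stopped.is_set():
            break
        callback(SimpleNamespace(reply_to=question_id, content="chunk"))
        sent += 1
        time.sleep(0.01)
    return sent


def run_with_stop(client, stop_after_chunks=3):
    """Run the blocking stream and stop it from a second thread."""
    state = {"question_id": None, "chunks": 0}
    enough = threading.Event()
    results = []

    def on_message(msg):
        # Capture the question ID from the first message that carries it.
        if msg.reply_to and state["question_id"] is None:
            state["question_id"] = msg.reply_to
        state["chunks"] += 1
        if state["chunks"] >= stop_after_chunks:
            enough.set()

    def controller():
        # Wait until enough chunks have arrived, then cancel the generation.
        if enough.wait(timeout=5):
            results.append(client.stop_chat(state["question_id"]))

    worker = threading.Thread(target=controller)
    worker.start()
    sent = fake_stream(client, on_message)  # blocks, like session.query(...)
    worker.join()
    return state["question_id"], sent, results
```

A `threading.Event` is used here rather than polling a global variable, so the controller thread sleeps until the callback signals that the question ID is known and enough output has arrived.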
