Import from URL

Overview

The Import from URL ingestion method allows you to import documents directly from web URLs. This method can crawl websites and extract content, making it ideal for importing web-based documents, articles, and other online content.

When to use

- Web content: When you need to import documents from websites

- Online resources: For importing articles, documentation, or reports

- Dynamic content: When content is regularly updated online

- Public documents: For importing publicly available web documents

- Research materials: When gathering content from multiple web sources

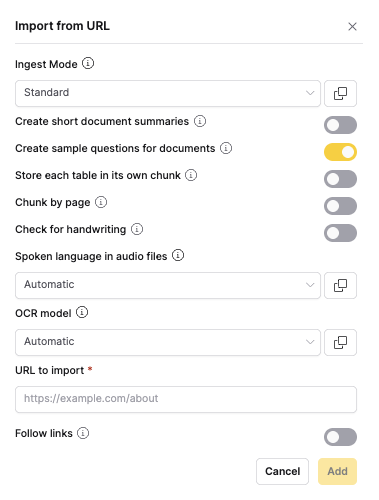

Configuration parameters

URL and crawling settings

| Option | Default | Description | Use case |

|---|---|---|---|

| URL | - (text input) | The web page URL to import from | Specify the starting point for content import |

| Follow links | Off (can be toggled on or off) | Whether to crawl linked pages from the same domain | Enable for importing entire websites or documentation sections |

| Max documents | 1 (number input) | Maximum number of pages to import during crawling | 1: Import only the specified page 5-10: Import a small section of related pages 50+: Import large documentation sites or blog series |

note

For document processing options, see the Shared Document Processing Options section in the main documentation.

Website crawling behavior

Single page import

- Follow links: Off

- Max documents: 1 (limits crawling to only the specified page)

- Result: Only the specified page is imported

- Use case: Specific articles, documentation pages, or reports

Multi-page crawling

- Follow links: On

- Max documents: Set to desired limit (controls how many pages are imported)

- Result: Multiple pages from the same domain are imported, up to the specified limit

- Use case: Entire documentation sites, blog series, or website sections

Crawling rules

- Same domain: Only pages from the same domain are crawled

- Respect robots.txt: Crawling respects website robots.txt files

- Rate limiting: Built-in delays to avoid overwhelming servers

- Duplicate detection: Automatically avoids importing duplicate content

Legal and ethical considerations

- Terms of service: Respect website terms of service and robots.txt

- Copyright: Ensure you have permission to import and use content

- Rate limiting: Avoid overwhelming servers with too many requests

- Data privacy: Be mindful of personal information that may be present

Feedback

- Submit and view feedback for this page

- Send feedback about Enterprise h2oGPTe to cloud-feedback@h2o.ai